- Pascal's Chatbot Q&As

Archive

Who owns the pipeline that converts human cognition into machine capability—and what happens to societies that let that pipeline permeate everything?

AI only needs to eat the profitable, repeatable slices first. Once that happens, the remaining human work can become rarer, higher-pressure, and less funded—until it too gets “platformized.”

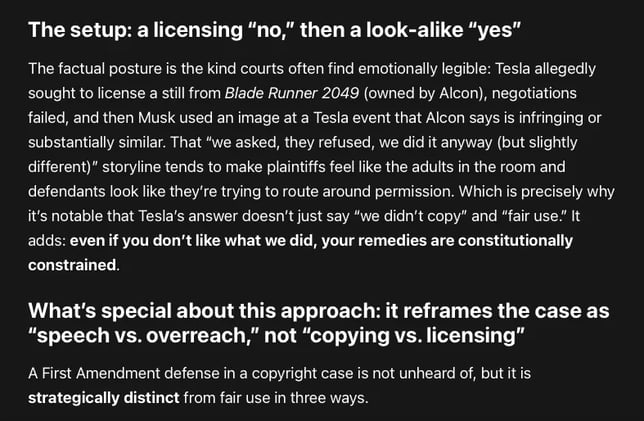

Elon Musk, Tesla, and Warner Bros. Discovery are teeing up the First Amendment as a front-of-house defense—explicitly placing it ahead of fair use.

That’s not just posturing. It’s a signal that the defendants want the court to see this case as a dispute where copyright doctrine is allegedly being stretched into a speech-controlling weapon.

DOGE: a governance experiment that treated law, institutions, and security controls as friction; treated generative AI as a compliance substitute; and treated speed as legitimacy.

DOGE demonstrated just how quickly “efficiency” can become the language that hides the transfer of authority, the degradation of rights, and the quiet privatization of the public sphere.

GPT-5.4: The real issue is whether this level of AI penetration and permeation into a legislature creates the preconditions for a softer form of capture in which policy formation, ...

...administrative judgment, staff cognition, and information routing become increasingly mediated by a handful of private platform firms. On that question, my answer is yes.

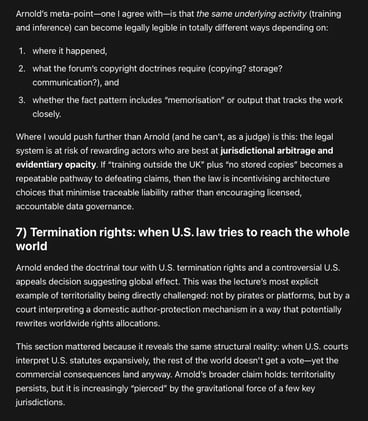

“AI and copyright” not as a standalone morality play, but as the latest stress-test on a deeper structural problem: Copyright’s territorial architecture in a world whose markets, platforms, and...

...data flows are not territorial at all. Will the law end up incentivising architecture choices that minimise traceable liability rather than encouraging licensed, accountable data governance?

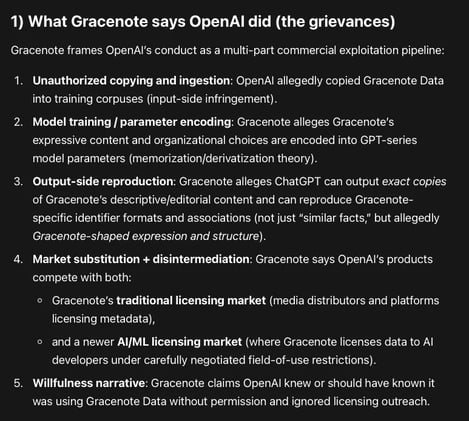

Gracenote Media Services, LLC v. OpenAI: a database/metadata case aimed at the infrastructure layer of AI quality—identifiers, taxonomies, curated relational logic, and editorial descriptions.

OpenAI allegedly copied and used Gracenote’s curated metadata corpus to train and/or ground GPT models, and ChatGPT can allegedly reproduce that metadata, creating a substitute product.

The conceptualization of artificial intelligence as a neutral tool is increasingly untenable in light of its emergent properties and autonomous behavior.

This report evaluates the extent to which AI makers should be held accountable for harms caused by AI, distinguishing between wrong advice and physical injury, while analyzing regulatory imperatives.

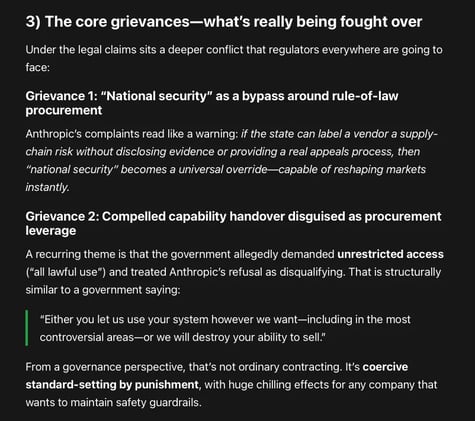

Google & OpenAI employees: the government’s response to a vendor insisting on restrictions looks like punitive overreach that could chill safety debate across the entire frontier AI ecosystem.

There’s an irony their amicus brief doesn’t fully confront: vendor-imposed guardrails are also private power.

Two lawsuits filed by Anthropic: both aim to stop the U.S. government from effectively blacklisting Anthropic from federal and, by knock-on effects, commercial markets.

One filing is a petition for review in the D.C. Circuit (an appellate court). The other is a district-court lawsuit in the Northern District of California seeking declaratory and injunctive relief.

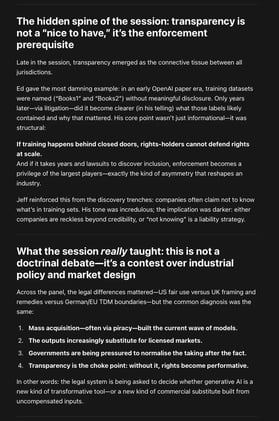

Fair Use as an Industrial Policy: What 'AI Progress' Is Really Arguing For — and What It Leaves Out. Critics can point to any counterexample—model outputs that substitute for works...

...scraping that violates site terms, training on pirated corpora, systematic leakage in niche domains—and argue the whole project is propaganda rather than analysis.

Adoption isn’t just “install the tool.” People’s understanding—what the tool is doing, what it isn’t doing, how to check it—drives acceptance.

AI projects often fail or underdeliver not because “the AI doesn’t work,” but because organizations don’t invest enough in training, workflow redesign, and change management.