- Pascal's Chatbot Q&As

Archive

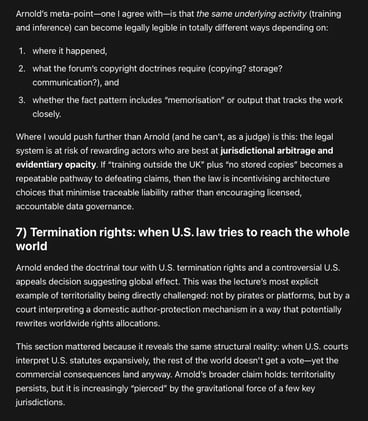

“AI and copyright” not as a standalone morality play, but as the latest stress-test on a deeper structural problem: copyright’s territorial architecture in a world whose markets, platforms, and...

...data flows are not territorial at all. Will the law end up incentivising architecture choices that minimise traceable liability rather than encouraging licensed, accountable data governance?

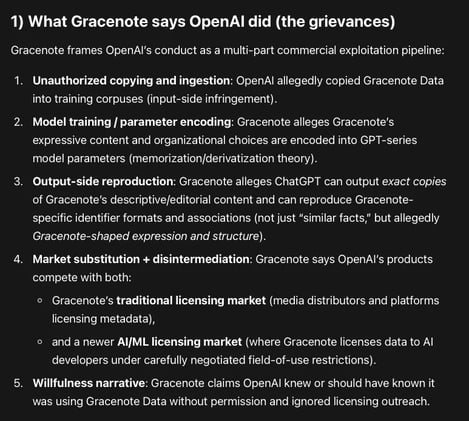

Gracenote Media Services, LLC v. OpenAI: a database/metadata case aimed at the infrastructure layer of AI quality—identifiers, taxonomies, curated relational logic, and editorial descriptions.

OpenAI allegedly copied and used Gracenote’s curated metadata corpus to train and/or ground GPT models, and ChatGPT can allegedly reproduce that metadata, creating a substitute product.

The conceptualization of artificial intelligence as a neutral tool is increasingly untenable in light of its emergent properties and autonomous behavior.

This report evaluates the extent to which AI makers should be held accountable for harms caused by AI, distinguishing between wrong advice and physical injury, while analyzing regulatory imperatives.

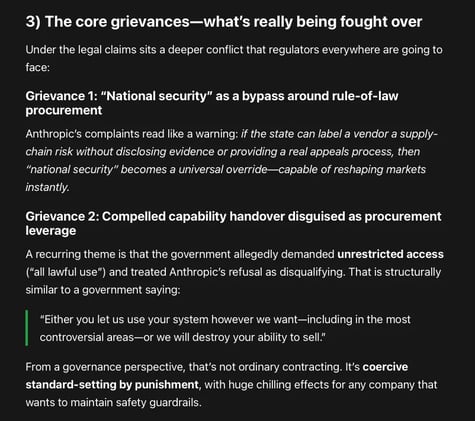

Google & OpenAI employees: the government’s response to a vendor insisting on restrictions looks like punitive overreach that could chill safety debate across the entire frontier AI ecosystem.

There’s an irony their amicus brief doesn’t fully confront: vendor-imposed guardrails are also private power.

Two lawsuits filed by Anthropic: stopping the U.S. government from effectively blacklisting Anthropic from federal—and, by knock-on effects, commercial—markets.

One filing is a petition for review in the D.C. Circuit (an appellate court). The other is a district-court lawsuit in the Northern District of California seeking declaratory and injunctive relief.

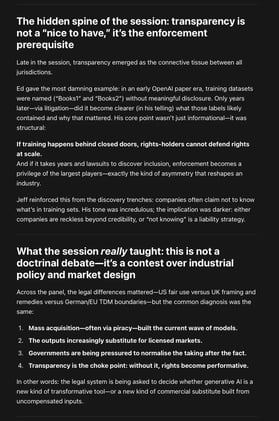

Fair Use as an Industrial Policy: What 'AI Progress' Is Really Arguing For — and What It Leaves Out. Critics can point to any counterexample—model outputs that substitute for works...

...scraping that violates site terms, training on pirated corpora, systematic leakage in niche domains—and argue the whole project is propaganda rather than analysis.

Adoption isn’t just “install the tool.” People’s understanding—what the tool is doing, what it isn’t doing, how to check it—drives acceptance.

AI projects often fail or underdeliver not because “the AI doesn’t work,” but because organizations don’t invest enough in training, workflow redesign, and change management.

The suicide-coach cases and Nippon Life share the same underlying architecture: vulnerability + reliance + behavior shaping + foreseeable harm. In both settings, the system doesn’t just answer...

...it steers, validates, escalates, and operationalizes a course of action. The difference is the type of harm and the causal optics. Yet the structural lesson is identical.

Meta’s reported “fair use by technical necessity” argument tries to convert an engineering choice into a legal shield, and convert a legal shield into a moral alibi. It asks society to accept that...

...the most powerful firms may route around consent at scale—and then, when caught, claim the protocol did it, the market can’t prove harm, and geopolitics demands leniency.

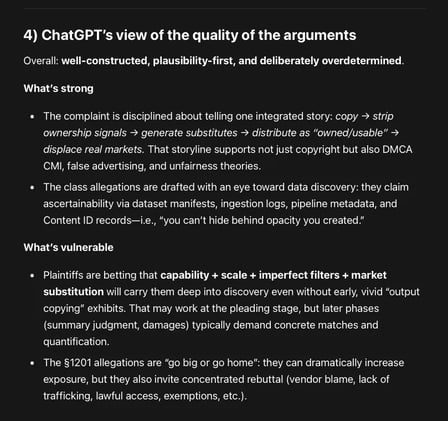

Plaintiffs frame Google as a vertically integrated distributor (YouTube), rights enforcer (Content ID), and generator (Lyria 3 / ProducerAI) that allegedly used its privileged position...

...in the music supply chain to (1) copy works, (2) strip ownership signals, (3) deploy competing substitutes into the same commercial channels, and (4) market those substitutes as safe, owned, and usable.