- Pascal's Chatbot Q&As

Adoption isn’t just “install the tool.” People’s understanding—what the tool is doing, what it isn’t doing, how to check it—drives acceptance.

AI projects often fail or underdeliver not because “the AI doesn’t work,” but because organizations don’t invest enough in training, workflow redesign, and change management.

When AI Does Payroll, Who Do You Trust—The Machine or the People?

by ChatGPT-5.2

Organizations are quietly replacing “back-office paperwork” with AI: the systems that calculate salaries, track leave, and maintain accounting records. The Study on the Impact of Artificial Intelligence on Accuracy, Transparency and Efficiency in Accounts, Payroll and Leave Management Systems looks at what that shift does to three things that matter in real life, not just in IT demos: accuracy (fewer mistakes), transparency (better traceability and audit trails), and efficiency (faster processing, less manual work). It’s based on a questionnaire of 123 respondents (collected during 2024–25) who work in organizations using traditional and/or AI-enabled systems, and it interprets the responses with basic statistical tests (correlation, chi-square, and ANOVA).

The headline message is simple: most people surveyed believe AI improves these administrative systems, but the biggest blocker is not the technology—it’s the skills gap.

What the study’s main findings really say (in plain English)

1) People think AI makes payroll/accounting/leave systems more accurate

A large share of respondents agree that AI-based systems are more accurate than manual methods and that overall performance improves after implementation. This is not the same as proving the system is objectively more accurate in every case, but it does show something important: users report fewer errors, fewer corrections, or fewer “messy exceptions” once AI tools are introduced.

What this likely means in practice: fewer duplicate entries, fewer formula mistakes, fewer missed approvals, and fewer “someone typed the wrong number” incidents.

2) Familiarity with AI is linked to how good people think it is

The study finds a moderate positive correlation between being familiar with AI and believing AI improves performance (r = 0.453, p < 0.001). That means: the more comfortable people are with AI, the more they perceive it as useful and effective.

Practical translation: adoption isn’t just “install the tool.” People’s understanding—what the tool is doing, what it isn’t doing, how to check it—drives acceptance.
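As a rough sanity check on the reported figures, the sketch below converts r = 0.453 into a t statistic and a share of explained variance. It assumes all 123 respondents were included in the correlation (the summary here does not say how many responses were used), and the function names are illustrative only.

```python
import math


def correlation_t_statistic(r: float, n: int) -> float:
    """t statistic for testing whether a Pearson correlation differs from zero."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r ** 2)


def shared_variance(r: float) -> float:
    """Proportion of variance the two variables share (r squared)."""
    return r ** 2


r = 0.453  # correlation reported by the study
n = 123    # ASSUMPTION: every respondent entered the correlation

t = correlation_t_statistic(r, n)
print(f"t = {t:.2f} on {n - 2} degrees of freedom")
print(f"shared variance = {shared_variance(r):.1%}")
```

Under that assumption, t comes out near 5.6 on 121 degrees of freedom, well past the roughly 3.4 needed for p < 0.001, so the reported significance is plausible. The r² of about 0.21 is the more sobering number: familiarity accounts for only around a fifth of the variation in perceived performance.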

3) Age matters for trust in AI reliability

A chi-square test shows age group is associated with belief in AI reliability. In this sample, respondents aged 31–40 show the strongest agreement that AI is reliable, while the youngest group shows the strongest disagreement.

Practical translation: different groups will resist for different reasons. Younger staff may be more skeptical (or more aware of AI failures), while mid-career staff may be more focused on operational improvement and workload reduction.
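For readers unfamiliar with the test, here is a minimal sketch of how a chi-square statistic is computed from a table of counts. The counts are invented for illustration and are not the study's data; the group labels and the function name are likewise assumptions.

```python
def chi_square_statistic(table):
    """Return (chi-square statistic, degrees of freedom) for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)

    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Count expected in this cell if age and belief were unrelated.
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed - expected) ** 2 / expected

    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof


# HYPOTHETICAL counts, not taken from the study.
# Rows: two age groups. Columns: agree / disagree that AI is reliable.
hypothetical_counts = [
    [10, 20],  # e.g. a younger group
    [20, 10],  # e.g. a mid-career group
]

stat, dof = chi_square_statistic(hypothetical_counts)
print(f"chi-square = {stat:.2f}, degrees of freedom = {dof}")
```

With one degree of freedom the conventional 5% critical value is about 3.84, so a statistic above that would be read as evidence of an association. The test says only that the groups differ, not why they differ.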

4) Departments don’t differ much in perceived benefits

ANOVA suggests no meaningful difference across departments (finance, HR, IT, payroll, admin) in how people rate AI’s performance impact. In other words, once AI is in place, people across functions tend to recognize similar benefits—or at least report them similarly.

5) The #1 implementation challenge is a shortage of skilled staff

The most commonly cited barrier is lack of skilled staff. That’s a blunt but critical point: AI projects often fail or underdeliver not because “the AI doesn’t work,” but because organizations don’t invest enough in training, workflow redesign, and change management.

Do I agree with the findings?

Broadly, yes—but with important caveats.

Where I agree

AI can reduce routine administrative errors and speed up repetitive processing. That’s exactly the kind of work automation is good at.

Transparency can improve when AI-enabled systems create logs, audit trails, and consistent workflows. “Transparent” here mostly means traceable—you can see what happened, when, and by whom (or by what system rule).

The emphasis on skills shortages as the primary challenge is realistic. AI tools don’t magically create operational maturity; they demand it.

The big caveat

This study primarily measures perception, not independently verified outcomes (e.g., measured error rates before/after, audit findings, payroll cycle time data, compliance incidents). Perceptions matter—because adoption lives or dies on them—but perception is not the same as proof.

So I read the conclusions as:

“AI is widely experienced as improving accuracy and efficiency, and acceptance depends heavily on training and familiarity.”

That’s a valuable conclusion, just not the same as:

“AI objectively guarantees better accuracy and transparency.”

Consequences of the situation described (what changes when AI runs core admin)

Consequence 1: Fewer “small errors,” but bigger dependency on system design

Manual work produces frequent small mistakes. AI systems can reduce those—but a flawed rule, bad data mapping, or poorly configured model/automation can scale errors across the entire organization. The risk shifts from “individual typos” to “systemic misconfiguration.”

Consequence 2: Transparency becomes “auditability,” not necessarily “fairness”

AI can create better logs and audit trails (good), but that doesn’t automatically mean decisions are fair or understandable to employees. Transparency often becomes: the system can explain what happened, not always why it should have happened.

Consequence 3: New inequality inside the organization—between “AI literate” and everyone else

Because familiarity correlates with positive perception, AI adoption can create a soft class divide:

People who understand the system can use it, question it, and benefit from it.

People who don’t may feel controlled by it—or ignore it until something goes wrong.

Consequence 4: Compliance and privacy become frontline issues

Payroll and leave systems contain sensitive personal data. As AI is added, organizations must deal with:

Access controls and role-based permissions

Vendor risk and third-party exposure

Data retention rules

Monitoring and incident response

If those aren’t handled, “efficiency” gains can be wiped out by governance failures.

Consequence 5: The job changes more than the headcount

Many roles won’t disappear overnight, but they will shift from data entry to exception handling, review, reconciliation, and oversight. That requires a different skill set—and can be stressful if people aren’t supported.

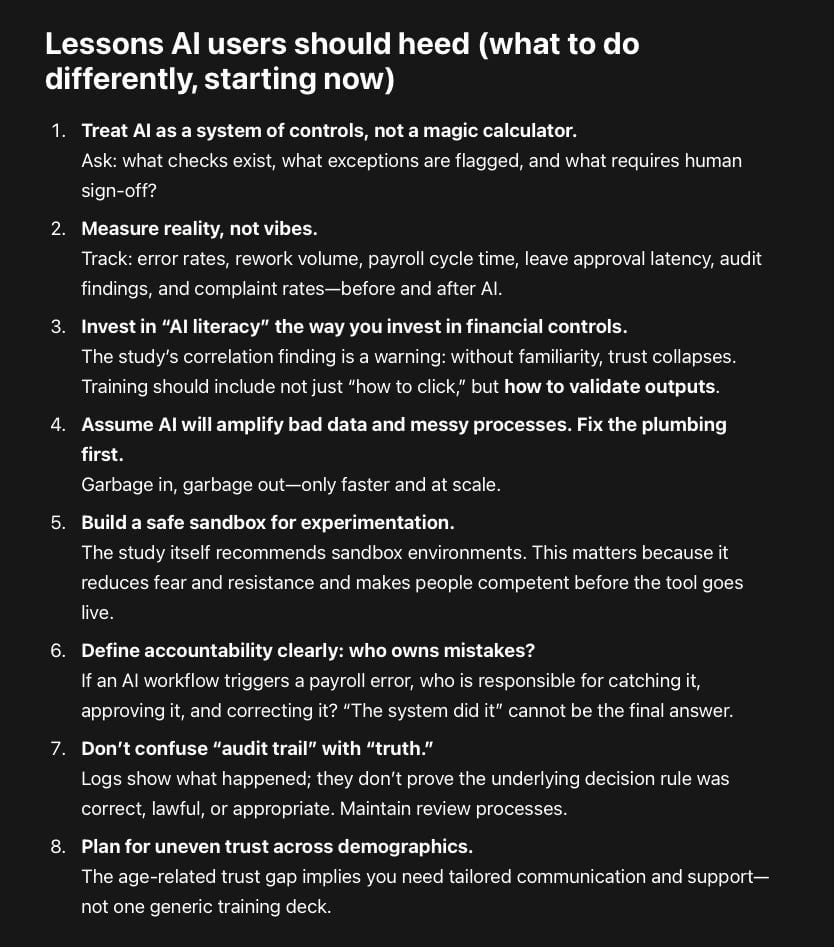

Lessons AI users should heed (what to do differently, starting now)

1) Treat AI as a system of controls, not a magic calculator.

Ask: what checks exist, what exceptions are flagged, and what requires human sign-off?

2) Measure reality, not vibes.

Track: error rates, rework volume, payroll cycle time, leave approval latency, audit findings, and complaint rates, before and after AI.

3) Invest in “AI literacy” the way you invest in financial controls.

The study’s correlation finding is a warning: without familiarity, trust collapses. Training should include not just “how to click,” but how to validate outputs.

4) Assume AI will amplify bad data and messy processes. Fix the plumbing first.

Garbage in, garbage out, only faster and at scale.

5) Build a safe sandbox for experimentation.

The study itself recommends sandbox environments. This matters because it reduces fear and resistance and makes people competent before the tool goes live.

6) Define accountability clearly: who owns mistakes?

If an AI workflow triggers a payroll error, who is responsible for catching it, approving it, and correcting it? “The system did it” cannot be the final answer.

7) Don’t confuse “audit trail” with “truth.”

Logs show what happened; they don’t prove the underlying decision rule was correct, lawful, or appropriate. Maintain review processes.

8) Plan for uneven trust across demographics.

The age-related trust gap implies you need tailored communication and support, not one generic training deck.
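The “measure reality, not vibes” lesson can be made concrete with a small before/after comparison. This is only a sketch: the `PayrollPeriod` structure, its field names, and all the figures are hypothetical, and a real comparison would need matched periods and more metrics than these two.

```python
from dataclasses import dataclass


@dataclass
class PayrollPeriod:
    """Hypothetical summary of one payroll period."""
    payslips_issued: int
    payslips_corrected: int
    cycle_days: float

    @property
    def error_rate(self) -> float:
        # Share of payslips that needed a correction after issue.
        return self.payslips_corrected / self.payslips_issued


def relative_change(before: float, after: float) -> float:
    """Change from before to after, as a fraction of the before value."""
    return (after - before) / before


# HYPOTHETICAL figures for illustration only.
before_ai = PayrollPeriod(payslips_issued=1000, payslips_corrected=40, cycle_days=5.0)
after_ai = PayrollPeriod(payslips_issued=1000, payslips_corrected=25, cycle_days=3.0)

error_change = relative_change(before_ai.error_rate, after_ai.error_rate)
cycle_change = relative_change(before_ai.cycle_days, after_ai.cycle_days)

print(f"error rate: {before_ai.error_rate:.1%} -> {after_ai.error_rate:.1%}")
print(f"relative change in error rate: {error_change:+.1%}")
print(f"relative change in cycle time: {cycle_change:+.1%}")
```

Recording the baseline before rollout is the important design choice here: without it, any later improvement can only be asserted, not shown.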

Closing thought

The study captures a very common pattern in AI adoption: AI improves routine operational performance when it is embedded into a well-governed process and supported by training. The biggest risk isn’t that AI can’t do payroll; it’s that organizations roll it out without the skills, governance, and accountability structures needed to keep it trustworthy.

In other words: AI doesn’t replace diligence—it raises the price of not having it.