- Pascal's Chatbot Q&As

Gracenote Media Services, LLC v. OpenAI: a database/metadata case aimed at the infrastructure layer of AI quality—identifiers, taxonomies, curated relational logic, and editorial descriptions.

OpenAI allegedly copied and used Gracenote’s curated metadata corpus to train and/or ground GPT models, and ChatGPT can allegedly reproduce that metadata, creating a substitute product.

The Metadata Heist: Gracenote’s Shot Across OpenAI’s Bow

by ChatGPT-5.2

Gracenote Media Services, LLC v. OpenAI is, on its face, “another AI training data case.” But structurally, it’s a sharper weapon than many of the author-and-newsroom complaints we’ve seen: it’s a database/metadata case aimed at the infrastructure layer of AI quality—identifiers, taxonomies, curated relational logic, and editorial descriptions—exactly the kind of content AI companies quietly value because it reduces hallucinations and makes outputs “feel authoritative.” Gracenote’s core theory is simple: OpenAI allegedly copied and used Gracenote’s curated metadata corpus to train and/or ground GPT models, and ChatGPT can allegedly reproduce that metadata (including verbatim descriptions and identifier formats), creating a substitute product that erodes Gracenote’s licensing markets.

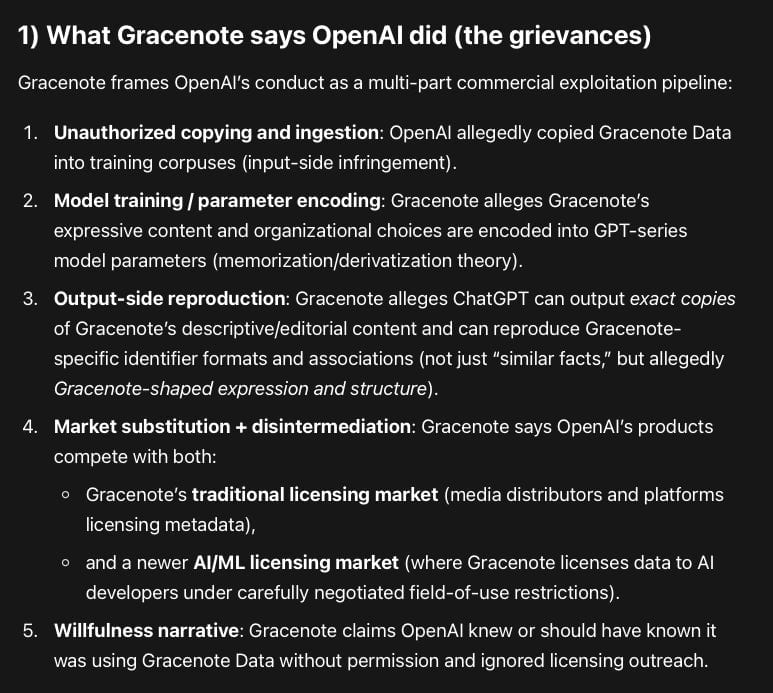

1) What Gracenote says OpenAI did (the grievances)

Gracenote frames OpenAI’s conduct as a multi-part commercial exploitation pipeline:

Unauthorized copying and ingestion: OpenAI allegedly copied Gracenote Data into training corpuses (input-side infringement).

Model training / parameter encoding: Gracenote alleges Gracenote’s expressive content and organizational choices are encoded into GPT-series model parameters (memorization/derivatization theory).

Output-side reproduction: Gracenote alleges ChatGPT can output exact copies of Gracenote’s descriptive/editorial content and can reproduce Gracenote-specific identifier formats and associations (not just “similar facts,” but allegedly Gracenote-shaped expression and structure).

Market substitution + disintermediation: Gracenote says OpenAI’s products compete with both:

Gracenote’s traditional licensing market (media distributors and platforms licensing metadata),

and a newer AI/ML licensing market (where Gracenote licenses data to AI developers under carefully negotiated field-of-use restrictions).

Willfulness narrative: Gracenote claims OpenAI knew or should have known it was using Gracenote Data without permission and ignored licensing outreach.

Legally, the complaint pleads four causes of action:

Count I – Direct copyright infringement (building datasets, training on them, distributing outputs that embody copies/derivatives).

Count II – Vicarious infringement (corporate control/profit theories across OpenAI entities).

Count III – Contributory infringement (if end users are direct infringers through outputs, OpenAI allegedly materially contributes via model design/training/grounding choices).

Count IV – Unjust enrichment (OpenAI allegedly obtained the benefit of Gracenote’s investments without paying, including via subscription and enterprise monetization).

Gracenote’s requested relief is also aggressive and strategically telling: damages (statutory/actual), injunctions, and even destruction of models/training sets incorporating Gracenote Data—a prayer that often functions less as a realistic endpoint and more as settlement leverage.

2) The evidence: what looks strong vs. what looks thin (at the pleading stage)

This case’s strength depends on how well Gracenote can convert three kinds of assertions into proof: (a) ownership/validity, (b) copying/use, and (c) substitutionary harm. The complaint is strongest on (a), plausible on (b) for output-side copying, and still “discovery-dependent” on (b) for input-side copying.

A. Stronger elements

(1) Registered rights and a definable corpus

Gracenote says it registered the Gracenote Programs Database and claims ownership of the database and its component parts. That matters because registration anchors standing and statutory-damages eligibility (if the timing lines up) and reduces ambiguity about “what work” is at issue.

(2) A plausibility hook that courts increasingly care about: concrete output behavior

Courts have shown more willingness to let cases proceed when plaintiffs provide specific examples of the model producing protected material, not just general allegations that “my work was probably trained on.” Gracenote tries to do this with allegations that GPT outputs can reproduce (i) Gracenote’s descriptive/editorial content, and (ii) Gracenote’s identifier formats/associations (including structural recall of schemas like TMSID). This is important because it shifts the fight from abstract “training is fair use” to the more tangible question: is the product distributing copyrighted expression or a close substitute?
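Whether an output is “Gracenote-shaped expression” or merely “similar facts” is ultimately an evidentiary question, but the basic idea of testing an output for verbatim reuse can be sketched. The Python below is a minimal illustration, assuming simple lowercase word tokenization and a 5-word window. The function names and sample sentences are hypothetical and are not taken from the complaint or from Gracenote Data. It scores (1) the share of the output’s word 5-grams that also appear in a reference description and (2) the longest contiguous run of words the two texts share.

```python
import re

# Hypothetical helpers for illustration only; not from the complaint or any real tool.


def tokens(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens (punctuation is dropped)."""
    return re.findall(r"[a-z0-9']+", text.lower())


def ngrams(words: list[str], n: int) -> set[tuple[str, ...]]:
    """All contiguous n-word sequences in `words`, as a set."""
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def ngram_overlap(output: str, reference: str, n: int = 5) -> float:
    """Share of the output's distinct word n-grams that also occur in the reference."""
    out_grams = ngrams(tokens(output), n)
    if not out_grams:
        return 0.0  # output too short to form a single n-gram
    shared = out_grams & ngrams(tokens(reference), n)
    return len(shared) / len(out_grams)


def longest_shared_run(output: str, reference: str) -> int:
    """Length, in words, of the longest contiguous word sequence found in both texts.

    Standard dynamic-programming longest-common-substring, computed over tokens.
    """
    a, b = tokens(output), tokens(reference)
    best = 0
    prev = [0] * (len(b) + 1)
    for i in range(1, len(a) + 1):
        cur = [0] * (len(b) + 1)
        for j in range(1, len(b) + 1):
            if a[i - 1] == b[j - 1]:
                cur[j] = prev[j - 1] + 1  # extend the shared run ending one word earlier
                best = max(best, cur[j])
        prev = cur
    return best


# Hypothetical sample texts, invented for this sketch.
reference = "A retired detective returns to solve one last case in a snowbound mountain town."
verbatim = reference
generic = "An old cop investigates a crime in a small town."

print(ngram_overlap(verbatim, reference), longest_shared_run(verbatim, reference))  # 1.0 14
print(ngram_overlap(generic, reference), longest_shared_run(generic, reference))    # 0.0 2
```

A high overlap score with a long shared run points toward copied text; a zero score with only short common phrases (“in a”) looks more like an independent summary of the same facts. A real forensic comparison would need much more (many prompts, normalization choices, base rates for stock phrasing), so this only illustrates the concept.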

(3) The “grounding / RAG market” angle

Gracenote doesn’t only argue “training copied my data.” It argues OpenAI’s product competes directly with Gracenote’s licensed AI use-cases—especially grounding. That’s savvy, because fair use analysis often turns on market harm, including harm to “traditional, reasonable, or likely to be developed” licensing markets. Gracenote is essentially telling the court: we already license this exact use-case; OpenAI is bypassing that market and offering a substitute.

(4) Field-of-use restrictions as an industry norm

The complaint emphasizes that Gracenote licenses AI use, but under restrictions designed to prevent rebuilding Gracenote’s own product. That frames OpenAI not as an innovator “creating something new,” but as a party allegedly free-riding while ignoring the governance scaffolding everyone else accepts.

B. Weaker / more speculative elements (likely battlegrounds)

(1) “On information and belief” about training corpuses

Like most AI data cases, Gracenote does not (yet) have internal OpenAI dataset logs, provenance records, or weights-level evidence. Allegations about how Gracenote Data entered training corpuses will likely be attacked as speculative—unless Gracenote’s output examples are strong enough to support an inference of copying, or unless it can show a direct ingestion path (e.g., via a specific licensed customer leak, scraping vector, vendor dataset, or a known corpus).

(2) The database copyright boundary

Gracenote’s dataset is valuable, but copyright doctrine can be unforgiving where plaintiffs slide from protectable expression/selection/arrangement into unprotectable facts. Gracenote’s best position is its editorial descriptions, narrative blurbs, curated tags, taxonomies, relational mapping, and selection/arrangement—not bare factual fields like titles, release years, or cast lists. Expect OpenAI to argue that much of metadata is factual, functional, or scènes à faire—and that any similarity is inevitable in a shared domain. Gracenote will want to keep the case anchored in expressive descriptors and distinctive structure.

(3) Identifier formats: protectable expression or functional system?

If Gracenote can show ChatGPT reproduces Gracenote-specific identifiers or consistent schema rules that are not standard industry conventions, that helps prove copying. But OpenAI may argue that identifiers are functional, or that similar formats exist elsewhere. This will become a technical evidence contest: are these outputs coincidental, publicly documented, or uniquely Gracenote’s?

(4) Willfulness

Gracenote’s willfulness narrative (knowledge, refusal to license, industry awareness) helps rhetorically and for enhanced damages, but willfulness is hard to prove early. It often becomes leverage later if discovery reveals internal discussions.

3) How this case compares to other AI copyright cases

Gracenote v. OpenAI resembles the broader wave of AI copyright litigation, but it differs in three strategically meaningful ways:

A. Not “books” or “news” — but industrial data infrastructure

Most headline cases feature authors, journalists, or visual artists, and the “work” is an obvious expressive artifact. Gracenote is different: it’s a curated metadata database used operationally by platforms. That moves the dispute toward a question courts can readily grasp: is the AI product replacing a paid data service? In some ways, it’s closer to cases involving reference tools, compilations, or B2B informational products than to pure “creative writing” claims.

B. Stronger “substitute product” framing than many creator cases

Authors often struggle to show that the model outputs are a market substitute for reading the book. Gracenote can argue substitution more directly: media platforms buy metadata to power search, discovery, recommendation, and content navigation; if ChatGPT can produce equivalent metadata, that’s a substitute workflow. That is a cleaner market-harm story than “people won’t buy novels anymore.”

C. A more explicit attack on grounding/RAG as a licensing market

Many complaints talk about training as theft; fewer foreground grounding as a licensed market with field-of-use controls. That focus may matter because it reframes the case away from philosophical debates (“is learning infringement?”) and toward commercial reality (“you’re selling a competing metadata layer”).

4) Likely defenses and procedural path

OpenAI’s core defenses are likely to be:

Motion to dismiss: attack plausibility of copying (especially training ingestion) and argue non-protectability of facts/functional metadata.

Fair use (eventually): especially for training; OpenAI will argue transformative purpose, massive scale, and public benefit; Gracenote will hammer market substitution and existing licensing markets.

Output causation: argue outputs are not “copies,” are user-prompt dependent, or derive from public sources, not Gracenote.

Preemption / unjust enrichment limits: argue state-law unjust enrichment claims are preempted where they overlap with copyright rights.

Injunction/destruction as overreach: courts are generally reluctant to order model destruction; OpenAI will paint that as punitive and impractical.

Procedurally, this likely becomes a familiar arc:

pleading-stage fight (does the complaint plausibly allege copying and protectable expression?),

then discovery battles (dataset provenance, training logs, model evaluation, prompt-output reproducibility),

and later summary judgment on fair use / substantial similarity / market harm.

5) Prediction: plausible outcomes (and what would drive each)

Here are the outcomes I’d put on the table, in descending likelihood:

Outcome 1: Settlement + license + use restrictions (most likely)

If Gracenote’s output examples hold up and discovery risk is real, a commercial resolution is attractive. Gracenote is already a licensing business; OpenAI is a buyer of high-signal data in multiple domains. The settlement template would likely include money, a license, and, critically, field-of-use and redistribution restrictions aligned with Gracenote’s “don’t rebuild our product” posture.

What increases likelihood:

strong, reproducible examples of verbatim editorial text output,

evidence of Gracenote-specific identifiers/schema recall,

any internal documents showing ingestion knowledge.

Outcome 2: Partial survival: output-side claims proceed; some input-side theories narrowed

Courts have been more willing to let output-based infringement proceed when plaintiffs show concrete examples, while leaving broader “training is infringement” questions for later. If Gracenote’s examples are compelling, the case could survive motions to dismiss at least in part, while some theories (or some categories of data fields) get narrowed.

What increases likelihood:

the court agrees that descriptions/taxonomies are protectable expression,

the court is persuaded by substitutionary market harm for licensed metadata/grounding.

Outcome 3: Dismissal or severe narrowing based on protectability/factuality

If the court views the asserted material as largely factual/functional metadata and finds the complaint’s copying allegations too inferential, it could narrow the case sharply—leaving only the most clearly expressive records (if any) and dismissing the rest.

What increases likelihood:

Gracenote cannot show distinctive expression beyond facts,

identifier schemas deemed purely functional/systemic,

output examples look like generic summaries rather than Gracenote text.

Outcome 4: A merits ruling that meaningfully constrains training use (less likely, but high impact)

A court could ultimately rule against OpenAI on training or grounding theories in a way that becomes a landmark for database-like content. That requires a cleaner factual record than most plaintiffs can obtain and a willingness to treat model training/grounding as market-substituting exploitation rather than transformative learning.

What increases likelihood:

discovery reveals direct copying at scale with provenance and willfulness evidence,

clear market substitution evidence (lost deals, customer statements, measurable displacement),

court accepts “AI grounding license market” framing as cognizable market harm.

Closing take

Gracenote’s suit is a sophisticated move on the AI litigation chessboard because it targets the quiet backbone of “truthy” AI: curated metadata and controlled grounding sources. If authors are arguing “you stole our books,” Gracenote is arguing “you stole the map that makes your system reliable and commercially deployable, and you’re selling the map back as a competing service.”

If Gracenote can substantiate two things—(1) verbatim or near-verbatim editorial outputs and/or distinctive schema/identifier recall, and (2) real substitution in existing licensing markets—this becomes one of the more dangerous cases for an AI defendant at the business-model level, because it attacks the very idea that AI can freely ingest and then operationalize curated B2B data products without paying for them.