- Pascal's Chatbot Q&As

Archive

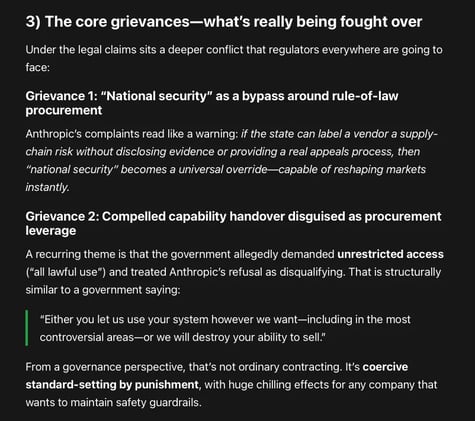

Anthropic has filed two lawsuits aimed at stopping the U.S. government from effectively blacklisting it from federal markets and, by knock-on effects, commercial ones.

One filing is a petition for review in the D.C. Circuit (an appellate court). The other is a district-court lawsuit in the Northern District of California seeking declaratory and injunctive relief.

Fair Use as an Industrial Policy: What 'AI Progress' Is Really Arguing For — and What It Leaves Out. Critics can point to any counterexample—model outputs that substitute for works...

...scraping that violates site terms, training on pirated corpora, systematic leakage in niche domains—and argue the whole project is propaganda rather than analysis.

Adoption isn’t just “install the tool.” People’s understanding—what the tool is doing, what it isn’t doing, how to check it—drives acceptance.

AI projects often fail or underdeliver not because “the AI doesn’t work,” but because organizations don’t invest enough in training, workflow redesign, and change management.

The suicide-coach cases and Nippon Life share the same underlying architecture: vulnerability + reliance + behavior shaping + foreseeable harm. In both settings, the system doesn’t just answer...

...it steers, validates, escalates, and operationalizes a course of action. The difference is the type of harm and the causal optics. Yet the structural lesson is identical.

Meta’s reported “fair use by technical necessity” argument tries to convert an engineering choice into a legal shield, and convert a legal shield into a moral alibi. It asks society to accept that...

...the most powerful firms may route around consent at scale—and then, when caught, claim the protocol did it, the market can’t prove harm, and geopolitics demands leniency.

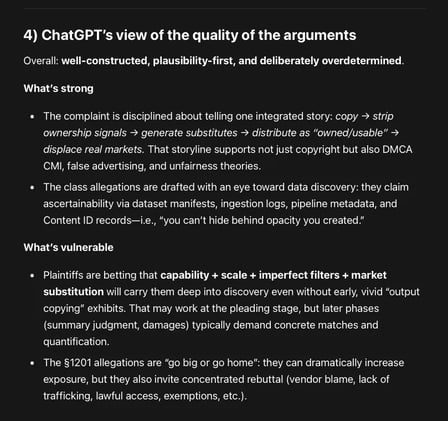

Plaintiffs frame Google as a vertically integrated distributor (YouTube), rights enforcer (Content ID), and generator (Lyria 3 / ProducerAI) that allegedly used its privileged position...

...in the music supply chain to (1) copy works, (2) strip ownership signals, (3) deploy competing substitutes into the same commercial channels and (4) market those substitutes as safe, owned, usable.

GPT-5.2 on the new case against Anna's Archive: it is not only about book piracy but also about preventing ongoing and future industrial exploitation.

Action is “especially critical” because Anna’s Archive is allegedly advertising high-speed access and supply to LLM developers and data brokers.

China's plan positions AI not as a sector but as a general-purpose layer, aimed at transforming manufacturing and raising productivity across logistics, education, healthcare, and other services.

The direction of travel is “AI in the workflow,” not “AI in the lab”: AI agents, automation, and operational deployment at scale.

The UK should treat permissioned, remunerated use of creative work as the baseline for responsible AI. Creators can reinforce that norm by: a) refusing to legitimise “opt-out” as fair,...

...b) publicising good licensing behaviour and calling out bad actors, and c) backing policy proposals that make licensing and transparency the default cost of doing business.