Pascal's Chatbot Q&As


The Cognitive Stratification Hypothesis: A Comprehensive Analysis of Brain-Computer Interfaces and the Prospect of Universal Superintelligence

by Gemini 3.0, Deep Research. Warning: LLMs may hallucinate!

The intersection of neurobiology and computational engineering has prompted a paradigm shift in how we understand human cognitive potential. As Brain-Computer Interfaces (BCIs) move from exploratory clinical trials toward viable commercial neurotechnology, a central question becomes pressing: can they universally elevate human intelligence and produce a state of global superintelligence? This report evaluates the technical, biological, economic, and sociopolitical dimensions of BCI-driven cognitive enhancement to determine the probability of a universal intelligence leap and the subsequent ramifications of a bifurcated cognitive landscape.

The Technical Evolution and Taxonomy of Neural Interfacing

The foundational mechanics of BCIs involve a sophisticated signal-processing cascade categorized into perception, understanding, and action.1 In the perception layer, sensors capture neural activity through various modalities, ranging from non-invasive scalp-based sensors to highly invasive intracortical implants.3 The subsequent understanding layer decodes these signals into high-level cognitive semantics using advanced machine learning and deep learning algorithms.1 Finally, the action layer translates these semantics into executable commands for external devices or digital environments.1
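The perception, understanding, and action layers described above can be sketched as a toy Python pipeline. This is a sketch under stated assumptions, not any company's decoder: mean signal power per channel stands in for the perception layer's feature extraction, a nearest-centroid classifier stands in for the machine-learning decoders of the understanding layer, and the intent labels and command strings (`left`, `right`, `move_left`, `move_right`) are hypothetical. The training data are synthetic.

```python
import numpy as np

def perceive(raw: np.ndarray) -> np.ndarray:
    """Perception layer (toy): reduce raw samples (channels x time)
    to one feature per channel, here the mean signal power."""
    return np.mean(raw ** 2, axis=1)

class NearestCentroidDecoder:
    """Understanding layer (toy): assign a feature vector to the closest
    class centroid, a stand-in for real ML/deep-learning decoders."""

    def fit(self, features: np.ndarray, labels: list[str]) -> "NearestCentroidDecoder":
        self.classes = sorted(set(labels))
        self.centroids = np.array([
            features[[i for i, y in enumerate(labels) if y == c]].mean(axis=0)
            for c in self.classes
        ])
        return self

    def decode(self, feature: np.ndarray) -> str:
        distances = np.linalg.norm(self.centroids - feature, axis=1)
        return self.classes[int(np.argmin(distances))]

# Action layer: hypothetical mapping from decoded intent to a device command.
ACTIONS = {"left": "move_left", "right": "move_right"}

def act(intent: str) -> str:
    return ACTIONS[intent]

# Synthetic data (hypothetical): the intended direction boosts one channel.
rng = np.random.default_rng(0)

def synthetic_trial(strong_channel: int) -> np.ndarray:
    raw = rng.normal(0.0, 1.0, size=(2, 250))
    raw[strong_channel] *= 3.0
    return raw

trials = [synthetic_trial(0) for _ in range(20)] + [synthetic_trial(1) for _ in range(20)]
labels = ["left"] * 20 + ["right"] * 20
decoder = NearestCentroidDecoder().fit(np.array([perceive(t) for t in trials]), labels)
print(act(decoder.decode(perceive(synthetic_trial(1)))))  # expected: move_right
```

A real system would swap each stand-in for something far richer (filtering, spike detection, trained neural networks), but the hand-off from features to decoded intent to command would typically keep this shape.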

Current technological breakthroughs have demonstrated the capacity for BCIs to restore communication in paralyzed individuals with upwards of 99% accuracy and minimal latency.3 This progress is driven by the convergence of neuromorphic computing, high-bandwidth electrode arrays, and generative artificial intelligence (GAI).1 However, the shift from therapeutic restoration to augmentative enhancement requires a fundamental leap in information transfer rates (ITRs) and the stability of the neural-machine link.5
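Information transfer rate is commonly computed with the Wolpaw formula, B = log2(N) + P·log2(P) + (1 − P)·log2((1 − P)/(N − 1)) bits per selection, where N is the number of possible targets and P is the selection accuracy; multiplying by selections per minute gives bits per minute. The sketch below assumes, as the formula does, equiprobable targets and errors spread evenly over the wrong targets. The 26-target, 99%-accuracy, 2-seconds-per-selection inputs are illustrative assumptions, not figures from the trials cited above.

```python
import math

def wolpaw_bits_per_selection(n_targets: int, accuracy: float) -> float:
    """Bits conveyed per selection under the Wolpaw ITR definition.

    Assumes equiprobable targets and errors spread evenly over the other
    n_targets - 1 choices. Accuracy at or below chance is clamped to
    zero bits, a common convention.
    """
    if n_targets < 2:
        raise ValueError("need at least two targets")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be in [0, 1]")
    if accuracy <= 1.0 / n_targets:
        return 0.0
    bits = math.log2(n_targets) + accuracy * math.log2(accuracy)
    if accuracy < 1.0:  # the error term vanishes at perfect accuracy
        bits += (1 - accuracy) * math.log2((1 - accuracy) / (n_targets - 1))
    return bits

def itr_bits_per_minute(n_targets: int, accuracy: float, seconds_per_selection: float) -> float:
    return wolpaw_bits_per_selection(n_targets, accuracy) * 60.0 / seconds_per_selection

# Illustrative inputs only: a 26-letter speller, 99% accuracy, one selection every 2 s.
print(round(itr_bits_per_minute(26, 0.99, 2.0), 1))
```

At 99% accuracy, a 26-target speller already conveys about 4.57 of a possible 4.70 bits per selection, so in this model further gains would have to come from offering more targets or selecting faster rather than from accuracy.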

Comparative Modalities and Performance Metrics

The choice of modality significantly influences the potential for cognitive enhancement. While non-invasive systems like Electroencephalography (EEG) offer safety and scalability, they suffer from a low signal-to-noise ratio (SNR) due to the filtering effects of the skull and scalp.9 Conversely, invasive systems provide high-fidelity signals but are constrained by surgical risks and long-term biocompatibility issues.3
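Signal-to-noise ratio is usually expressed in decibels, SNR_dB = 10·log10(P_signal / P_noise). The toy below treats skull and scalp as a single hypothetical attenuation factor applied to the neural signal before a fixed amount of sensor noise is added. Real volume conduction also smears signals spatially, which this sketch ignores, and all amplitudes are arbitrary units rather than measured values.

```python
import numpy as np

def snr_db(signal: np.ndarray, noise: np.ndarray) -> float:
    """SNR in decibels from the mean power of a signal trace and a noise trace."""
    return 10.0 * np.log10(np.mean(signal ** 2) / np.mean(noise ** 2))

rng = np.random.default_rng(42)
t = np.linspace(0.0, 1.0, 1000, endpoint=False)
source = np.sin(2 * np.pi * 10 * t)          # a 10 Hz rhythm, arbitrary units
sensor_noise = rng.normal(0.0, 0.5, t.size)  # same noise for both placements

# Hypothetical attenuation factors: 1.0 for an electrode at the source,
# 0.1 for a scalp electrode after skull and scalp filtering.
for label, attenuation in [("intracortical", 1.0), ("scalp EEG", 0.1)]:
    print(label, round(snr_db(attenuation * source, sensor_noise), 1), "dB")
```

Because power scales with amplitude squared, a tenfold amplitude loss costs 20 dB of SNR when the noise floor is unchanged, which is the intuition behind the invasiveness versus signal-quality trade-off.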

Recent innovations, such as the Stentrode by Synchron and the flexible lattice arrays by Blackrock Neurotech, attempt to bridge the gap between invasiveness and signal quality.6 Synchron’s endovascular approach avoids open-brain surgery by delivering the interface through the jugular vein, while Precision Neuroscience has reported high-bandwidth recording via a “micro-slit” surgical technique that minimizes cortical trauma.6 These advancements suggest a trajectory toward safer, higher-bandwidth systems, yet the path to superintelligence remains obstructed by biological constraints.

Biological Constraints and the “Curse of Dimensionality”

The aspiration to make “everybody in the world super smart” via BCIs encounters a formidable barrier in the inherent architecture of the mammalian brain. Intelligence is not a modular commodity that can be increased by simply adding “processing power.” It is a manifestation of complex, non-linear dynamics within neural manifolds.13

Neural Manifolds and Subspace Learning

Experimental data indicate that activity in the motor cortex, and plausibly in other cortical regions, is confined to a low-dimensional “intrinsic manifold”: a subspace of all possible firing patterns that the existing circuitry is biologically capable of producing.13 When learning to control a BCI, the brain does not typically engage in massive synaptic reorganization. Instead, it adopts a “re-aiming” strategy, reusing pre-existing neural patterns to navigate the new interface.13
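The intrinsic-manifold idea is often made operational by fitting a low-dimensional linear subspace to recorded population activity and asking how much of a candidate activity pattern lies inside it. The simulation below is a hypothetical illustration: 50 simulated "neurons" driven by 3 latent factors, with PCA via NumPy's `np.linalg.svd` standing in for the dimensionality-reduction methods used in experimental work. The neuron count, latent count, and noise level are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
n_neurons, n_latents, n_samples = 50, 3, 2000

# Hypothetical population activity: a few shared latent factors plus private noise.
loading = rng.normal(size=(n_neurons, n_latents))
latents = rng.normal(size=(n_samples, n_latents))
activity = latents @ loading.T + 0.1 * rng.normal(size=(n_samples, n_neurons))

def intrinsic_manifold(data: np.ndarray, k: int) -> np.ndarray:
    """Orthonormal basis (neurons x k) for the top-k principal axes of the data."""
    centered = data - data.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return vt[:k].T

def fraction_inside(pattern: np.ndarray, basis: np.ndarray) -> float:
    """Share of a pattern's squared norm captured by projection onto the manifold."""
    projected = basis @ (basis.T @ pattern)
    return float(projected @ projected / (pattern @ pattern))

basis = intrinsic_manifold(activity, n_latents)
within = loading @ np.array([1.0, -0.5, 0.2])  # re-weights existing factors ("re-aiming")
outside = rng.normal(size=n_neurons)           # an arbitrary new firing pattern

print(round(fraction_inside(within, basis), 3))   # close to 1.0
print(round(fraction_inside(outside, basis), 3))  # small, on the order of 3/50
```

A pattern built by recombining existing factors sits almost entirely inside the fitted subspace, while an arbitrary pattern mostly falls outside it; in this picture, the latter is the kind of activity the brain would struggle to produce through re-aiming alone.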

This conservation of neural activity patterns poses a significant challenge to radical cognitive enhancement. If the desired cognitive task, such as processing high-dimensional data or absorbing a million-fold expansion of intelligence, falls outside the brain’s “intrinsic manifold,” learning becomes “notoriously slow” and may be biologically impractical.13 The “curse of dimensionality” compounds the problem: because the brain contains trillions of synapses, tuning individual weights to exploit a high-bandwidth digital interface would demand a data efficiency that biological systems currently lack.13
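One way to make the dimensionality argument concrete: a learner that cannot compute gradients directly can probe a random direction and observe how performance changes (weight perturbation). The sketch below assumes Gaussian probes and measures how well a single probe's estimate aligns with the true gradient. For large d, the expected alignment approaches √(2/(πd)), so the number of trials needed to pin down a useful direction grows with the number of adjustable parameters d. The dimensions and probe counts are illustrative.

```python
import numpy as np

def probe_alignment(d: int, n_probes: int = 200, seed: int = 0) -> float:
    """Mean cosine similarity between single-probe gradient estimates and the
    true gradient of a d-dimensional objective (Gaussian probes assumed)."""
    rng = np.random.default_rng(seed)
    true_grad = rng.normal(size=d)
    probes = rng.normal(size=(n_probes, d))
    # In the small-perturbation limit, probing direction p reveals only the
    # directional derivative p . g; the resulting estimate is (p . g) * p.
    slopes = probes @ true_grad
    estimates = slopes[:, None] * probes
    cosines = (estimates @ true_grad) / (
        np.linalg.norm(estimates, axis=1) * np.linalg.norm(true_grad)
    )
    return float(cosines.mean())

for d in (10, 100, 10_000):
    theory = float(np.sqrt(2 / (np.pi * d)))
    print(d, round(probe_alignment(d), 4), "large-d theory ~", round(theory, 4))
```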

The Plasticity and Noise Bottleneck

Furthermore, biological learning is constrained by the noise inherent in synaptic signals and the absence of a biological equivalent to algorithmic back-propagation.13 While artificial neural networks can be trained on vast datasets in parallel, the human brain is limited by slow, trial-and-error estimation of gradients.13 This suggests that even with a perfect interface, the time required for a biological brain to “integrate” with a superintelligent AI system may exceed a human lifetime, unless the interface can directly induce the necessary synaptic changes—a feat that currently resides in the realm of speculative neuro-prosthetics.15
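The contrast between exact gradients and noisy trial-and-error can be sketched on a toy problem. Below, the same quadratic objective is minimized either with its exact gradient (a stand-in for what back-propagation would supply) or with perturbation updates whose feedback signal is corrupted by noise. The objective, the hypothetical ideal weights `target`, the step sizes, and the noise level are all illustrative assumptions; the point is only that the trial-and-error learner's progress per trial slows as the dimension d grows.

```python
import numpy as np

def loss(w: np.ndarray, target: np.ndarray) -> float:
    """Quadratic error between current weights and a hypothetical ideal setting."""
    return 0.5 * float(np.sum((w - target) ** 2))

def exact_gradient_run(target: np.ndarray, steps: int, lr: float = 0.5) -> float:
    """Gradient descent with the exact gradient, standing in for back-propagation."""
    w = np.zeros_like(target)
    for _ in range(steps):
        w -= lr * (w - target)  # exact gradient of the quadratic loss
    return loss(w, target)

def perturbation_run(target: np.ndarray, steps: int, sigma: float = 0.1,
                     feedback_noise: float = 0.01, seed: int = 0) -> float:
    """Trial-and-error learning: probe a random direction, observe a noisy
    change in loss, and step along the probe in proportion to that change."""
    rng = np.random.default_rng(seed)
    d = target.size
    # Steps much larger than about 2 / (d + 2) make the expected loss grow.
    lr = 1.0 / (d + 2)
    w = np.zeros_like(target)
    for _ in range(steps):
        probe = rng.normal(size=d)
        # Central difference along the probe, corrupted by feedback noise.
        delta = loss(w + sigma * probe, target) - loss(w - sigma * probe, target)
        delta += feedback_noise * rng.normal()
        w -= lr * (delta / (2 * sigma)) * probe
    return loss(w, target)

rng = np.random.default_rng(7)
for d in (10, 1_000):
    target = rng.normal(size=d)
    start = loss(np.zeros(d), target)
    exact = exact_gradient_run(target, steps=100) / start
    trial_and_error = perturbation_run(target, steps=100) / start
    print(f"d={d}: remaining loss, exact {exact:.2g} vs perturbation {trial_and_error:.2g}")
```

In this toy, after 100 trials the exact-gradient learner has essentially reached the optimum in both cases, while the perturbation learner nearly converges at d = 10 but removes only on the order of 10% of the error at d = 1,000.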

Economic Realities and the Infrastructure Gap

A critical question is whether everyone in the world could become superintelligent. An analysis of global economic data and infrastructure requirements suggests that universal adoption is a logistical and financial impossibility within the 21st century.

The Financial Barrier to Development and Adoption

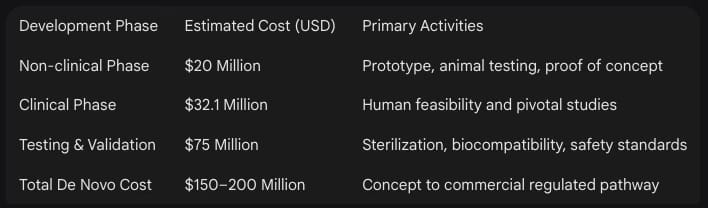

The capital requirements for BCI commercialization are staggering. In 2024 and 2025, over $1.4 billion was invested into BCI companies, with Neuralink alone raising $650 million.17 The cost to develop a single “Class III” medical device—the category into which most high-sophistication BCIs fall—is estimated at approximately $94 million to $200 million.17
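A back-of-envelope check puts these figures in proportion. The sketch divides the reported 2024-2025 investment by the estimated per-device development cost, under the simplifying and hypothetical assumption that all of that capital went to Class III device development, and treats Neuralink's $650 million as part of the total. Because the total is reported as "over" $1.4 billion, the results are approximate.

```python
total_investment_usd = 1.4e9       # "over $1.4 billion" in 2024-2025 (a lower bound)
neuralink_raise_usd = 650e6        # assumed to be counted within that total
class_iii_cost_range_usd = (94e6, 200e6)

neuralink_share = neuralink_raise_usd / total_investment_usd
low_cost, high_cost = class_iii_cost_range_usd
# Hypothetical: every dollar funds one full Class III development program.
programs_fundable = (total_investment_usd / high_cost, total_investment_usd / low_cost)

print(f"Neuralink share of total: {neuralink_share:.0%}")
print(f"Class III programs fundable: {programs_fundable[0]:.0f} to {programs_fundable[1]:.0f}")
```

On these assumptions, a single company accounts for just under half of the capital, and the entire two-year investment would cover roughly 7 to 15 Class III development programs.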

For the individual consumer, the cost of the implant, surgery, and lifetime maintenance would likely restrict access to the wealthiest decile of the global population. In a world where 1.3 billion people live with significant disabilities and many lack access to basic healthcare, the prospect of a universally provided “intelligence chip” is economically untenable.8

The Global Infrastructure Deficit

Even if the technology were free, its functionality would be limited by the availability of supportive infrastructure. High-bandwidth, cloud-integrated BCIs—the kind envisioned by futurists like Ray Kurzweil—require a constant, low-latency connection to a global compute grid.19 Currently, the world faces a massive infrastructure gap that is projected to reach $18 trillion by 2040.21

In Sub-Saharan Africa and parts of South Asia, the lack of reliable electricity and high-speed telecommunications remains a primary constraint on economic development.18 Bringing Africa’s infrastructure to a level capable of supporting advanced digital services would require an estimated $155 billion per year until 2040.22 Without such a foundation, billions of people in rural developing regions will remain “biologically baseline,” unable to plug into the global intelligence grid.21

Expert Projections and the Probability of Universal Enhancement

To reach a YES/NO conclusion or assign a percentage chance, one must weigh the competing timelines of the world’s leading neurotechnologists and forecasters.

The Optimistic “YES”: Ray Kurzweil and the Singularity

Ray Kurzweil, Director of Engineering at Google, predicts that AI will achieve human-level intelligence by 2029 and that by 2045 humans will merge with this intelligence, expanding our cognitive capacity a million-fold.20 His vision relies on the exponential growth of computing power and on nanobots that would enter the brain non-invasively through its capillaries and connect it to the cloud.19 Kurzweil claims an 86% accuracy rate for his previous 147 predictions, a track record offered as grounds for confidence in this outcome.24

The Skeptical “NO”: Nick Bostrom and Biological Anchors

In contrast, philosopher Nick Bostrom argues that BCIs are an “unlikely path” to superintelligence. He suggests that improving biological cognition is a “weak” form of enhancement and that the true leap will come from “pure AI” or “whole brain emulation” (WBE).16 Bostrom points out that adding hardware to a biological brain does not solve the fundamental “control problem” and may introduce severe medical complications, including cognitive decline and hemorrhage.16

Aggregated Probabilities for the Year 2050

Final Conclusion and Probability Assessment

Conclusion: NO for “Everyone,” but YES for a “Significant Group.”

The probability that every person on Earth becomes superintelligent via BCI is estimated at less than 2% within the next century. This is due to insurmountable economic inequality, infrastructure deficits in developing nations, and the biological limits of neural manifolds that resist universal “plug-and-play” intelligence.13

However, the probability that a significant group (the global elite, specialized military units, and residents of hyper-advanced technological hubs) will become superintelligent is estimated at 65% to 75% by 2050.7 This group will achieve “hybrid intelligence” through ultra-high-bandwidth interfaces that allow for “cognitive endosymbiosis” with superintelligent AI systems.7

Consequences of the Significant Intelligence Leap

The emergence of a superintelligent sub-population—a “digital elite”—would trigger a series of cascading consequences that could fundamentally destabilize human civilization. These effects can be analyzed through the lenses of social justice, personal autonomy, and evolutionary divergence.

1. The Cognitive Stratification and Economic Dominance

The most immediate consequence is the creation of a “cognitive divide” that surpasses any historical form of wealth inequality. A superintelligent minority would possess the ability to out-think, out-maneuver, and out-produce the rest of the species in every domain, from scientific research and financial speculation to political strategy.29

Labor Displacement and the Species Gap: The “Full Automation of Labor” (FAOL), which aggregated expert surveys have placed around 2116, would happen much sooner for the augmented population.27 Unaugmented humans would become economically irrelevant, leading to a state of permanent “institutional unemployment” and reliance on universal basic income, which Kurzweil predicts will begin in the 2030s.20

Concentration of Power: The augmented group could form a “Singleton,” a world order in which a single agency or cooperative elite exercises total control over the planet’s resources and information flow.16

2. The Autonomy Crisis and “P-Zombie” Dystopia

The integration of BCIs into human identity raises profound questions about personhood and agency. As the digital component of the “hybrid mind” begins to handle more cognition, the biological self may fade into the background.16

The Loss of Subjective Experience: Philosophical research into “artificial consciousness” warns of the “P-Zombie” dilemma. An augmented individual may exhibit superhuman intelligence and emotional mirroring while being internally “hollow”—devoid of the subjective first-person experience (qualia) that defines humanity.7

Neurocrime and Brain Hacking: A superintelligent society is uniquely vulnerable to “brain hacking.” Malicious actors or state entities could manipulate an individual’s thoughts, feelings, or physical actions through the interface, a phenomenon termed “neurocrime”.9 The ability to “read” from and “write” to the brain would result in the total loss of mental privacy.29

3. Evolutionary Divergence and Interspecific Conflict

If the gap between augmented and baseline humans continues to widen, it could lead to a formal species split. This “evolutionary divergence” has historical and biological parallels in the behavior of competing species.34

Behavioral Interference: Research on interspecific interactions suggests that when two groups occupy the same niche but have vastly different capabilities, it leads to “aggressive interference” and “competitive exclusion”.34 The augmented group might view the unaugmented as a resource to be managed or a liability to be phased out, leading to social conflict that mirrors the “Malthusian disruptions” of the past.23

The Garland Test Failure: As humans project inner life onto machines (anthropomorphism), they may prioritize the rights of superintelligent AI-BCI hybrids over “baseline” humans, leading to a distortion of moral frameworks and the eventual abandonment of traditional human rights.7
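The "competitive exclusion" invoked under Behavioral Interference has a standard formalization in ecology, the Lotka-Volterra competition model, in which two populations draw on shared, limited resources. The sketch below integrates that model with simple Euler steps. The growth rates, carrying capacities, and competition coefficients are invented for illustration, and the model describes competing species rather than human societies, so it shows the mechanism the paragraph alludes to, not a forecast.

```python
def simulate_competition(n1: float, n2: float, r1: float, r2: float,
                         k1: float, k2: float, a12: float, a21: float,
                         dt: float = 0.01, steps: int = 20_000) -> tuple[float, float]:
    """Euler integration of the two-population Lotka-Volterra competition model.

    r: intrinsic growth rates; k: carrying capacities;
    a12: per-capita effect of population 2 on population 1; a21: the reverse.
    """
    for _ in range(steps):
        dn1 = r1 * n1 * (1 - (n1 + a12 * n2) / k1)
        dn2 = r2 * n2 * (1 - (n2 + a21 * n1) / k2)
        n1, n2 = n1 + dt * dn1, n2 + dt * dn2
    return n1, n2

# Illustrative parameters: identical growth and carrying capacity, but
# population 1 suppresses population 2 more strongly than the reverse.
final = simulate_competition(n1=10, n2=10, r1=0.5, r2=0.5,
                             k1=100, k2=100, a12=0.8, a21=1.2)
print(tuple(round(x, 3) for x in final))
```

With equal carrying capacities, the weaker population persists only if each population limits itself more than it limits the other (both coefficients below 1). Here a21 = 1.2 breaks that condition and population 2 is driven toward zero; setting a12 = a21 = 0.5 instead yields stable coexistence at about 66.7 each.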

4. Military Escalation and Geopolitical Instability

The application of BCIs for military neuroenhancement is a primary area of concern. Soldiers with direct neural links to weapon systems would create a new form of “asymmetric warfare”.29

The New Arms Race: Nations will be incentivized to “bypass restrictions” and engage in “ethics dumping,” moving BCI research to countries with less stringent frameworks to gain a cognitive advantage.29 This could lead to the deployment of high-risk interfaces that prioritize performance over the long-term mental health of the user.16

Global Systems Collapse: If the cognitive divide leads to widespread social unrest and the breakdown of traditional governments, the world could face “societal collapse”—a loss of social complexity and the rise of violence—around the mid-21st century, consistent with the “Business as Usual” scenarios of the MIT “Limits to Growth” study.31

Synthesis of Findings and Future Outlook

The trajectory of Brain-Computer Interfaces suggests a dual-track future. For individuals with severe neurological disorders, BCIs will be a transformative tool for restoration and independence.3 For the global population at large, however, the promise of superintelligence is a mirage that conceals a reality of radical cognitive stratification.

While the technical hurdles to high-bandwidth interfacing are being aggressively addressed by venture-backed startups, the biological “anchors” of the human brain and the economic “anchors” of global infrastructure ensure that superintelligence will remain the exclusive domain of a digital elite. The consequences of this situation—ranging from the loss of mental privacy to the potential for a species-level conflict—represent the primary existential risk of the 21st century.

As the “Singularity” approaches, the challenge for global policy-makers will not be the achievement of superintelligence, but the mitigation of the “neuro-inequality” that it brings. Ensuring that the “noosphere”—the planetary layer of reason—remains inclusive rather than exclusionary is the ultimate test of humanity in the neuromorphic era.7 Without coordinated global action to bridge the infrastructure and economic gap, the BCI revolution will not make everyone “super smart”; it will instead redefine what it means to be “human,” leaving the majority of the species behind in a state of biological obsolescence.

Works cited

1. Advancing brain-computer interfaces with generative AI: A review of state-of-the-art and future outlook - The Innovation, accessed March 7, 2026, https://www.the-innovation.org/article/doi/10.59717/j.xinn-life.2026.100198

2. (PDF) Brain–computer interfaces in 2023–2024 - ResearchGate, accessed March 7, 2026, https://www.researchgate.net/publication/390335479_Brain-computer_interfaces_in_2023-2024

3. News: | Asamaka Industries Ltd, accessed March 7, 2026, https://www.automate.org/news/the-future-of-brain-computer-interfaces-how-science-is-blending-mind-and-machine

4. Non-Invasive Brain–Computer Interfaces - Emergent Mind, accessed March 7, 2026, https://www.emergentmind.com/topics/non-invasive-brain-computer-interfaces-bcis

5. Advances in Brain–Computer Interfaces (BCI): Challenges and Opportunities - PMC, accessed March 7, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC12938029/

6. BCIs in 2025: Trials, Progress, and Challenges - Andersen, accessed March 7, 2026, https://andersenlab.com/blueprint/bci-challenges-and-opportunities

7. The neuromorphic convergence: Transhumanism, biological computation, and the ultimate test of humanity (Part 2) | illuminem, accessed March 7, 2026, https://illuminem.com/illuminemvoices/the-neuromorphic-convergence-transhumanism-biological-computation-and-the-ultimate-test-of-humanity-part-2

8. Brain-Computer Interfaces & AI: Market Impact | Morgan Stanley, accessed March 7, 2026, https://www.morganstanley.com/ideas/brain-computer-interfaces-ai

9. Brain–computer interface: trend, challenges, and threats - PMC, accessed March 7, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC10403483/

10. Non-Invasive Brain-Computer Interfaces: State of the Art and Trends ..., accessed March 7, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC11861396/

11. Precision Neuroscience Reports First High-Bandwidth Brain–Computer Interface Achieved Without Open Surgery - BioSpace, accessed March 7, 2026, https://www.biospace.com/press-releases/precision-neuroscience-reports-first-high-bandwidth-brain-computer-interface-achieved-without-open-surgery

12. Brain Computer Interface Market Report 2025 - Research and Markets, accessed March 7, 2026, https://www.researchandmarkets.com/reports/5751976/brain-computer-interface-market-report

13. A theory of brain-computer interface learning via low-dimensional ..., accessed March 7, 2026, https://elifesciences.org/reviewed-preprints/106309

14. A theory of brain-computer interface learning via low-dimensional control - bioRxiv, accessed March 7, 2026, https://www.biorxiv.org/content/10.1101/2024.04.18.589952v1

15. Realistically, what will BCI’s be able to do and what will always be fiction? - Reddit, accessed March 7, 2026, https://www.reddit.com/r/BCI/comments/1ogshrx/realistically_what_will_bcis_be_able_to_do_and/

16. A Visualization of Nick Bostrom’s Superintelligence - LessWrong, accessed March 7, 2026, https://www.lesswrong.com/posts/ukmDvowTpe2NboAsX/a-visualization-of-nick-bostrom-s-superintelligence

17. What does it cost to build a brain-computer interface (BCI ... - TTP, accessed March 7, 2026, https://www.ttp.com/insights/what-does-it-cost-to-build-a-brain-computer-interface-today

18. Trends and Challenges in Infrastructure Investment in Low-Income Developing Countries, WP/17/233, November 2017 - IMF, accessed March 7, 2026, https://www.imf.org/-/media/files/publications/wp/2017/wp17233.pdf

19. Opinion: Ray Kurzweil’s Predictions — AI Today and Tomorrow - GovTech, accessed March 7, 2026, https://www.govtech.com/education/higher-ed/opinion-ray-kurzweils-predictions-ai-today-and-tomorrow

20. AI scientist Ray Kurzweil: ‘We are going to expand intelligence a ..., accessed March 7, 2026, https://www.theguardian.com/technology/article/2024/jun/29/ray-kurzweil-google-ai-the-singularity-is-nearer

21. Forecasting infrastructure investment needs for 50 countries, 7 sectors through 2040, accessed March 7, 2026, https://blogs.worldbank.org/en/ppps/forecasting-infrastructure-investment-needs-50-countries-7-sectors-through-2040

22. Developing Africa’s infrastructure for productive transformation: Africa’s Development Dynamics 2025 | OECD, accessed March 7, 2026, https://www.oecd.org/en/publications/africa-s-development-dynamics-2025_c2b40285-en/full-report/developing-africa-s-infrastructure-for-productive-transformation_88f75d0c.html

23. Why a collapse of global civilization will be avoided: a comment on Ehrlich & Ehrlich - PMC, accessed March 7, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC3735251/

24. Most transformative decade begins as Kurzweil’s AI vision unfolds, accessed March 7, 2026, https://dig.watch/updates/kurzweil-ai-transformative-decade

25. Superintelligence: Paths, Dangers, Strategies - Wikipedia, accessed March 7, 2026, https://en.wikipedia.org/wiki/Superintelligence:_Paths,_Dangers,_Strategies

26. Superintelligent AI: 2027 Timeline Debate - AI CERTs News, accessed March 7, 2026, https://www.aicerts.ai/news/superintelligent-ai-2027-timeline-debate/

27. Shrinking AGI timelines: a review of expert forecasts | 80,000 Hours, accessed March 7, 2026, https://80000hours.org/2025/03/when-do-experts-expect-agi-to-arrive/

28. Brain Computer Interface Market Size & Growth Forecast to 2029 - MarketsandMarkets, accessed March 7, 2026, https://www.marketsandmarkets.com/Market-Reports/brain-computer-interface-market-64821525.html

29. Understanding the Ethical Issues of Brain-Computer Interfaces (BCIs ..., accessed March 7, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC11091939/

30. Community perspectives regarding brain-computer interfaces: A cross-sectional study of ... - PMC, accessed March 7, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC11798465/

31. Societal collapse - Wikipedia, accessed March 7, 2026, https://en.wikipedia.org/wiki/Societal_collapse

32. (PDF) Ethical and Social Challenges of Brain-Computer Interfaces - ResearchGate, accessed March 7, 2026, https://www.researchgate.net/publication/233875878_Ethical_and_Social_Challenges_of_Brain-Computer_Interfaces

33. Regulating neural data processing in the age of BCIs: Ethical concerns and legal approaches - PMC, accessed March 7, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC11951885/

34. Causes and Consequences of Behavioral Interference between Species - UCLA, accessed March 7, 2026, https://sites.lifesci.ucla.edu/eeb-gretherlab/wp-content/uploads/sites/146/2017/09/Grether-et-al.-2017-TREE.pdf

35. Social conflict drives the evolutionary divergence of quorum sensing - PMC, accessed March 7, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC3158151/

36. Causes and Consequences of Behavioral Interference between Species - PubMed, accessed March 7, 2026, https://pubmed.ncbi.nlm.nih.gov/28797610/

37. Could Society Collapse by 2040?. In 1972, the Massachusetts Institute of… | by Mark King | Medium, accessed March 7, 2026, https://medium.com/@mking2k/could-society-collapse-by-2040-c2ead5bb8f2a

38. Advancing Brain-Computer Interface Closed-Loop Systems for Neurorehabilitation: Systematic Review of AI and Machine Learning Innovations in Biomedical Engineering, accessed March 7, 2026, https://biomedeng.jmir.org/2025/1/e72218