- Pascal's Chatbot Q&As

Archive

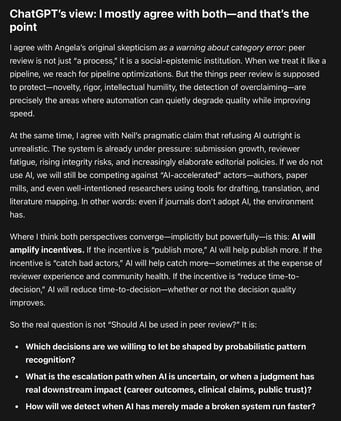

AI will force the industry to decide what peer review is for. If peer review is primarily a throughput mechanism for career signaling, AI will perfect the factory.

If peer review is a practice for testing claims and stewarding knowledge, AI can help, provided humans keep authority over judgment and incentives stop demanding that the system outrun its own legitimacy.

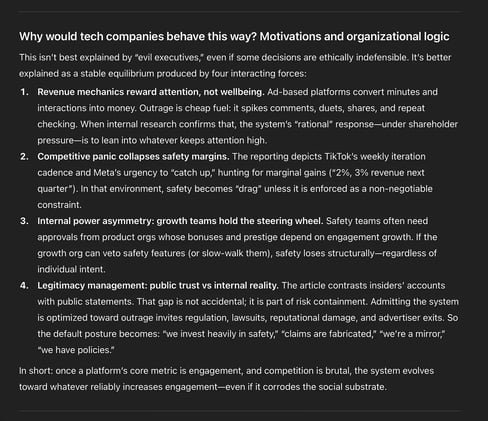

Safety and integrity become structurally subordinate when their success metrics (reduced harm, reduced exposure) collide with the company’s growth metrics (time spent, shares, comments, ad inventory).

In that environment, “harm” isn’t a bug; it becomes a predictable by-product of the optimization target.

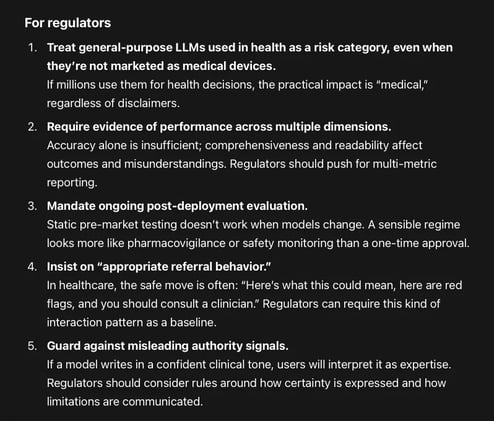

When eight popular large language models (LLMs) answer common parent questions about early maxillary expansion, how reliable are they—and how readable is what they say?

None of the models achieved “ideal patient education readability.” Even the “most readable” models were still too complex relative to common health communication guidance.

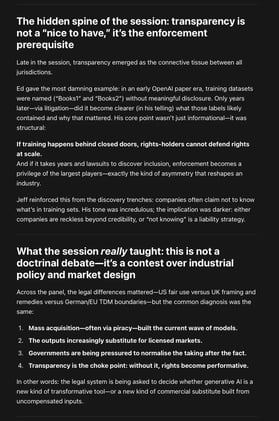

Who owns the pipeline that converts human cognition into machine capability—and what happens to societies that let that pipeline permeate everything?

AI only needs to eat the profitable, repeatable slices first. Once that happens, the remaining human work can become rarer, higher-pressure, and less funded—until it too gets “platformized.”

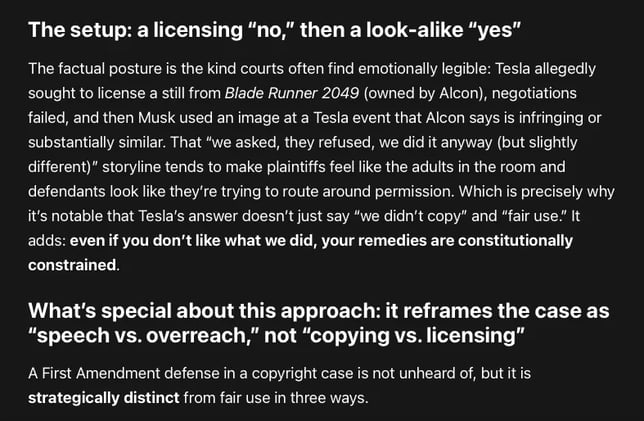

Elon Musk, Tesla, and Warner Bros. Discovery are teeing up the First Amendment as a front-of-house defense—explicitly placing it ahead of fair use.

That’s not just posturing. It’s a signal that the defendants want the court to see this case as a dispute where copyright doctrine is allegedly being stretched into a speech-controlling weapon.

DOGE: a governance experiment that treated law, institutions, and security controls as friction; treated generative AI as a compliance substitute; and treated speed as legitimacy.

DOGE demonstrated just how quickly “efficiency” can become the language that hides the transfer of authority, the degradation of rights, and the quiet privatization of the public sphere.

GPT-5.4: The real issue is whether this level of AI penetration and permeation into a legislature creates the preconditions for a softer form of capture in which policy formation, ...

...administrative judgment, staff cognition, and information routing become increasingly mediated by a handful of private platform firms. On that question, my answer is yes.

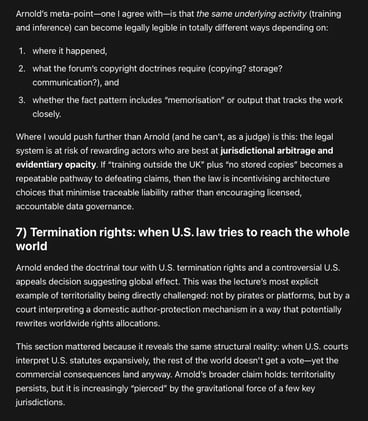

“AI and copyright” not as a standalone morality play, but as the latest stress-test on a deeper structural problem: Copyright’s territorial architecture in a world whose markets, platforms, and...

...data flows are not territorial at all. Will the law end up incentivising architecture choices that minimise traceable liability rather than encouraging licensed, accountable data governance?

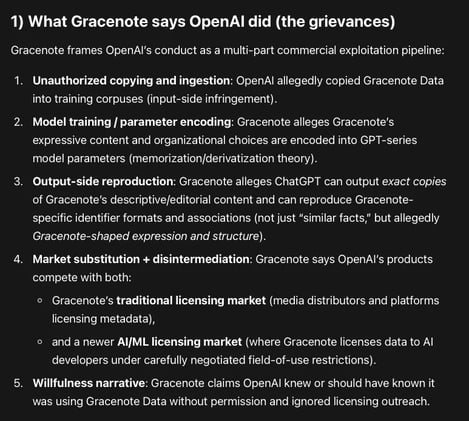

Gracenote Media Services, LLC v. OpenAI: a database/metadata case aimed at the infrastructure layer of AI quality—identifiers, taxonomies, curated relational logic, and editorial descriptions.

OpenAI allegedly copied and used Gracenote’s curated metadata corpus to train and/or ground GPT models, and ChatGPT can allegedly reproduce that metadata, creating a substitute product.