- Pascal's Chatbot Q&As

Who owns the pipeline that converts human cognition into machine capability—and what happens to societies that let that pipeline permeate everything?

AI only needs to eat the profitable, repeatable slices first. Once that happens, the remaining human work can become rarer, higher-pressure, and less funded—until it too gets “platformized.”

The Great Expertise Harvest: When You Train the Machine That Replaces You

by ChatGPT-5.2

This article on The Verge reads like a dispatch from a new labor regime: not “AI takes jobs” in one dramatic wave, but “AI buys your judgment” in millions of tiny transactions, then turns that judgment into a product you can no longer sell. A big reveal isn’t just what people are doing (rubrics, golden answers, stumpers, reasoning traces), but how the work is organized: secrecy, surveillance, on-call volatility, pay cuts, and an always-available global labor pool. That combination turns white-collar knowledge into a measurable input stream, something that can be procured, versioned, and arbitraged like any other commodity.

And once expertise becomes a commodity, the politics of it change. The question stops being “will AI replace humans?” and becomes: who owns the pipeline that converts human cognition into machine capability—and what happens to societies that let that pipeline permeate everything?

a) Which other jobs will be affected next (and why those first)

A clean way to predict the next hit is to look for roles with four traits:

High volumes of repeatable judgments (even if they feel bespoke)

Artifacts that can be graded (templates, compliance checks, structured outputs)

Clear “good enough” thresholds (not perfect—acceptable)

A production chain that can be split into microtasks (draft → review → QA → escalation)

That points to the next wave being less about glamorous “professions” and more about the middle layers of decision work:

Paralegals, contract managers, eDiscovery reviewers, litigation support, due diligence analysts

Because legal work is already modular: spotting issues, extracting clauses, mapping facts to standards, generating drafts, checking against playbooks.

Compliance, risk, and audit analysts (especially in finance, healthcare, and privacy)

Lots of checklistable reasoning, policy mapping, and documentation: prime territory for rubrics and “golden outputs.”

Junior-to-mid consultants (ops, strategy, market research), corporate analysts, competitive intelligence

Much of the job is structured synthesis, slide logic, memo-writing, and “reasonable” recommendations, exactly what world-building document factories train.

Accountants and tax prep, payroll specialists, procurement analysts

Rule-heavy domains with standardized artifacts and many “did you check X?” steps.

HR generalists and recruiters (screening, job descriptions, performance narratives, policy comms)

“Human” work that is paradoxically text-heavy and template-driven at scale.

Customer support tier-1/2, technical support, success managers (especially in SaaS)

Already shaped like rubrics: identify intent → follow scripts → resolve or escalate.

Editorial triage and production roles: copyediting, fact-checking, indexing, permissions triage, metadata curation

Particularly vulnerable because a lot of it is structured pattern-checking, though the high-stakes “trust layer” can survive if publishers choose to invest in it rather than cost-cut it.

Teaching assistants, tutoring, curriculum content packagers

Not “teachers disappear,” but the support ecosystem gets algorithmically reorganized: lesson scaffolds, feedback, marking guidance, and individualized drills.

Some clinical back-office roles (coding, documentation, referral letters, prior authorizations)

Not the bedside; the paperwork machine around it.

The deeper point: AI doesn’t need to beat a whole profession. It only needs to eat the profitable, repeatable slices first. Once that happens, the remaining human work can become rarer, higher-pressure, and less funded—until it too gets “platformized.”

b) What this means for the roles of the people contributing knowledge—and whether humans are now the tool

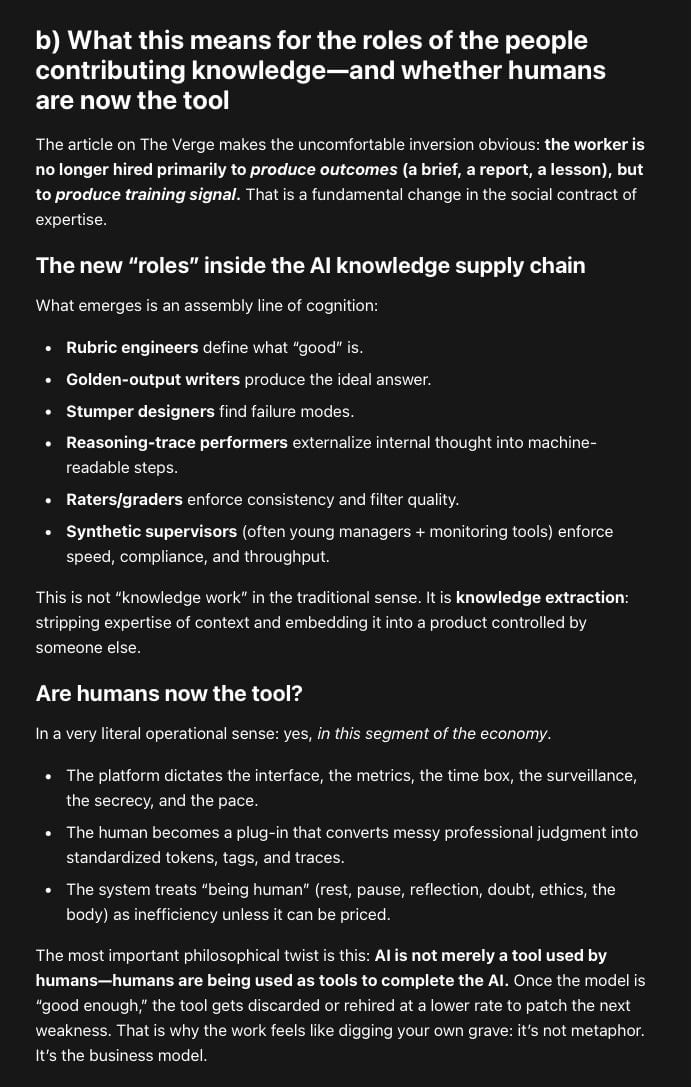

The article on The Verge makes the uncomfortable inversion obvious: the worker is no longer hired primarily to produce outcomes (a brief, a report, a lesson), but to produce training signal. That is a fundamental change in the social contract of expertise.

The new “roles” inside the AI knowledge supply chain

What emerges is an assembly line of cognition:

Rubric engineers define what “good” is.

Golden-output writers produce the ideal answer.

Stumper designers find failure modes.

Reasoning-trace performers externalize internal thought into machine-readable steps.

Raters/graders enforce consistency and filter quality.

Synthetic supervisors (often young managers + monitoring tools) enforce speed, compliance, and throughput.

This is not “knowledge work” in the traditional sense. It is knowledge extraction: stripping expertise of context and embedding it into a product controlled by someone else.

Are humans now the tool?

In a very literal operational sense: yes, in this segment of the economy.

The platform dictates the interface, the metrics, the time box, the surveillance, the secrecy, and the pace.

The human becomes a plug-in that converts messy professional judgment into standardized tokens, tags, and traces.

The system treats “being human” (rest, pause, reflection, doubt, ethics, the body) as inefficiency unless it can be priced.

The most important philosophical twist is this: AI is not merely a tool used by humans; humans are being used as tools to complete the AI. Once the model is “good enough,” the human tool gets discarded, or rehired at a lower rate to patch the next weakness. That is why the work feels like digging your own grave: it’s not a metaphor. It’s the business model.

c) Societal consequences in territories that allow AI to permeate and nudge/control all layers of society

If a country embraces pervasive AI—education, media, hiring, credit, healthcare triage, policing support, welfare systems, migration, tax, procurement—three big structural consequences show up. Not hypotheticals. Structural.

1) The “invisible bureaucracy” problem

Decisions become:

more automated,

more personalized,

less legible.

People stop arguing with a person and start arguing with a system that can say “policy says no” without revealing whose policy, trained on what, updated when, or accountable to whom. Appeals become performative. This is how you get administrative power without administrative responsibility.

2) Population-scale behavioral steering

When the same few models mediate search, news, recommendations, education prompts, workplace tools, public services, and even companionship, you get soft control:

Not “censorship” in the old sense—more like friction, omission, default settings, and tone.

Nudges that are individualized, continuous, and optimized.

Over time, the political risk is not only propaganda; it’s learned dependency: people outsource memory, writing, planning, even moral rehearsal (“what should I say?” / “what should I think?”), and the system becomes the cognitive environment.

3) A new class system: the “interface rich” vs “interface poor”

In AI-permeated territories:

The winners are those who can buy better models, better privacy, better data rights, better legal cover, and human concierge layers.

The losers get cheap automation: surveillance-heavy schooling, automated welfare suspicion, template jobs, AI HR screens, and algorithmic credit.

This is how you can end up with two different states operating in parallel: a high-touch human state for elites and a low-touch automated state for everyone else.

Where this goes when things get tense

In a crisis (war, unrest, migration surge, economic shock), pervasive AI becomes incredibly tempting as a control surface: rapid classification, prioritization, triage, narrative management, and policing support. The more embedded the systems are, the easier it is to repurpose them—sometimes without new laws, because the machinery is already there.

d) Other possible consequences of this development (beyond jobs)

Here’s the wider blast radius—the stuff that follows once “expertise harvest” becomes normal.

1) Credential collapse and identity shock

If degrees are increasingly decoupled from stable employment, you get social consequences:

delayed family formation,

higher geographic mobility pressures,

resentment toward institutions that sold a promise,

and a rise in alternative status markers (followers, brand, networks, ideology).

2) The “race to the bottom” in professional standards

When pay is squeezed and speed is rewarded, “quality” becomes whatever the rubric measures. That has two outcomes:

professionals learn to optimize for the metric (not the craft),

and the craft degrades—ironically weakening the training data over time.

3) Expansion of surveillance from warehouses to laptops

The Verge story shows knowledge work adopting the harshest parts of platform labor: monitoring, time policing, “unproductive seconds,” coercive on-call behavior. This normalizes a workplace culture where privacy and autonomy are treated as luxuries.

4) Legal and regulatory backlash

Expect more fights over:

worker classification and benefits,

disclosure duties (“who is the client?” / what is the work used for?),

psychological harm (content moderation-like trauma),

and constraints on surveillance tooling.

5) Model quality risks from synthetic contamination

As more of the internet and internal corporate data becomes AI-generated, the temptation is to train on it, yet that can degrade models if done indiscriminately. This creates an odd dependency: models still need fresh human reality to stay sane, diverse, and grounded.

6) Epistemic monocultures

If a few model families become the “default” reasoning layer in schools, offices, and governments, you get convergence:

same phrasing,

same argument shapes,

same blind spots,

same failure modes.

That’s not just boring. It can become dangerous: systemic errors at scale, and societies that lose the ability to think outside the template.

7) New unions and “data labor guilds”

Counter-movement is plausible: collective bargaining over pay, credit, safety, and disclosure; professional bodies defining what cannot be commoditized; and new licensing regimes for expert data. The interesting twist: the “factory” is global and digital, so labor organizing will have to be too.

8) Geopolitical leverage through the “cognition stack”

Territories that import AI stacks wholesale may import:

embedded values,

security dependencies,

update/kill-switch risks,

and information asymmetries.

That becomes strategic vulnerability—especially for smaller states and the Global South—because the cognitive infrastructure can quietly become an external dependency.

The closing paradox: society still needs humans, but may stop paying for them

Even in the bleakest version of this trajectory, humans remain essential for:

legitimacy (people accept decisions more readily from accountable humans),

moral judgment (values conflicts don’t disappear),

novelty (new ideas aren’t just recombinations),

and care (people still want other people when it matters).

But the market can still decide not to pay for those things, especially if the automated version is cheaper and “good enough.” That’s the real danger described in the Verge article: not simply unemployment, but a downgrade of human life into unpriced externalities (mental health, civic trust, education depth, cultural diversity) while the economic gains concentrate around the owners of the pipeline.

So the question for territories deciding whether to let AI permeate everything isn’t “Is it innovative?” It’s:

What kind of society do you become when the primary growth industry is extracting human expertise, converting it into automated power, and then redistributing humans into precarious work—or out of work altogether—while the systems quietly learn how to steer the population that depends on them?