- Pascal's Chatbot Q&As

When eight popular large language models (LLMs) answer common parent questions about early maxillary expansion, how reliable are they—and how readable is what they say?

None of the models achieved “ideal patient education readability.” Even the “most readable” models were still too complex relative to common health communication guidance.

When the “Helpful” Chatbot Becomes a Dental Co-Pilot: What This Study Reveals About LLMs in Real Clinical Decisions

by ChatGPT-5.2

Parents are already using chatbots as a first stop for health questions. Orthodontics is no exception: if your child has a narrow upper jaw or a crossbite, you’ll Google it, and increasingly you’ll ask an AI. This paper asks a very practical question: when eight popular large language models (LLMs) answer common parent questions about early maxillary expansion, how reliable are they—and how readable is what they say?

What the study did

The researchers built a test that mirrors real life. Four orthodontists collected the kinds of questions parents actually ask about early maxillary expansion (a treatment used in children to widen the upper jaw, often relevant in primary or mixed dentition). They finalized 20 questions—10 for the primary dentition phase and 10 for the mixed dentition phase—covering things like whether expansion is possible at that age, pain/discomfort, facial development, effects on permanent teeth, long-term stability, self-esteem, and cost.

Then they asked eight LLMs to answer all questions:

DeepSeek V3

Gemini 2.5 Flash

Claude 4.5 Sonnet

MediSearch

Copilot

GPT-5

GPT-4o

Grok

Two orthodontists scored the responses on two big dimensions:

Accuracy: does it match scientific evidence and clinical practice?

Comprehensiveness: does it answer the question fully and in a way a parent can use?

Separately, the researchers measured readability using two standard formulas:

Flesch Reading Ease (FRES): higher is easier to read.

Flesch-Kincaid Grade Level (FKGL): lower means fewer years of education needed.
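For readers who want to see what these two formulas actually compute, here is a minimal Python sketch. The coefficients are the standard published ones for FRES and FKGL. Everything else is an assumption made for illustration and is not taken from the paper: the function names, the sentence and word splitting, and especially `count_syllables`, which is a crude vowel-group heuristic. Dedicated readability tools count syllables more carefully, so absolute scores will differ somewhat.

```python
import re

VOWEL_GROUP = re.compile(r"[aeiouy]+")
WORD = re.compile(r"[A-Za-z]+(?:'[A-Za-z]+)?")


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count vowel groups (illustrative heuristic only)."""
    word = word.lower()
    groups = len(VOWEL_GROUP.findall(word))
    # Drop a trailing silent "e" ("make" -> 1) but keep "-le" endings ("table" -> 2).
    if word.endswith("e") and not word.endswith("le") and groups > 1:
        groups -= 1
    return max(groups, 1)


def text_stats(text: str) -> tuple[int, int, int]:
    """Return (sentence count, word count, syllable count) for a non-empty text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = WORD.findall(text)
    if not sentences or not words:
        raise ValueError("text must contain at least one word")
    syllables = sum(count_syllables(w) for w in words)
    return len(sentences), len(words), syllables


def flesch_reading_ease(text: str) -> float:
    """FRES: higher scores mean easier text."""
    s, w, syl = text_stats(text)
    return 206.835 - 1.015 * (w / s) - 84.6 * (syl / w)


def flesch_kincaid_grade(text: str) -> float:
    """FKGL: approximate US school grade needed to follow the text."""
    s, w, syl = text_stats(text)
    return 0.39 * (w / s) + 11.8 * (syl / w) - 15.59


if __name__ == "__main__":
    simple = "The cat sat on the mat."
    jargon = "Maxillary expansion facilitates transverse skeletal correction."
    for sample in (simple, jargon):
        print(round(flesch_reading_ease(sample), 1),
              round(flesch_kincaid_grade(sample), 1))
```

Both formulas depend only on average sentence length and average syllables per word, which is why a jargon-heavy sentence scores much worse than a plain one of the same length. It also means these metrics say nothing about whether the content is correct.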

They also checked consistency: the scoring reliability was strong (high agreement between raters, good test-retest reliability, good internal consistency). So the measurements are not just vibes—they’re relatively robust.
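To make "agreement between raters" concrete, here is a small Python sketch of Cohen's kappa, one common statistic for two raters. This summary does not say which reliability statistic the paper used, so treat the choice of kappa as an assumption; the scores below are invented for illustration and are not the study's data. Note that this unweighted version only asks whether two scores match exactly, so it ignores how far apart disagreeing scores are.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items where both raters gave the same score.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: probability both pick the same category independently.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Hypothetical 1-5 accuracy scores from two raters on ten responses.
    rater_1 = [5, 4, 4, 3, 5, 2, 4, 5, 3, 4]
    rater_2 = [5, 4, 3, 3, 5, 2, 4, 5, 3, 5]
    print(round(cohens_kappa(rater_1, rater_2), 2))  # 0.73
```

In this made-up example the raters agree on 8 of 10 items, but kappa comes out lower than 0.8 because some of that agreement would be expected by chance alone.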

What they found (the headline results)

1) The models differed a lot—and model choice strongly shaped outcomes.

Across accuracy, comprehensiveness, and readability, there were statistically significant differences between models. The effect sizes were large, meaning which model you use explains a substantial amount of the variation in quality.

2) DeepSeek V3 and Grok were best on “being clinically right and thorough.”

In both dentition phases, DeepSeek V3 and Grok hit the top tier for accuracy and comprehensiveness.

3) Copilot, GPT-5, and GPT-4o were easiest to read—but less accurate.

These models produced the most readable answers (better reading-ease, lower grade-level), but their clinical accuracy scores were lower compared with the leaders.

4) None of the models achieved “ideal patient education readability.”

A particularly important point: even the “most readable” models were still too complex relative to common health communication guidance. The paper cites the idea (via AMA guidance) that patient materials should generally aim around a 6th-grade reading level—and these AI outputs didn’t meet that bar.

5) MediSearch performed worst overall in this setup.

It scored low on accuracy and comprehensiveness and also produced the least readable content (hardest reading level).

The most surprising, controversial, and valuable findings

Surprising

“Readable” often meant “less clinically reliable.”

Many people assume clearer writing is a sign of better teaching. The study suggests a trade-off: models that spoke more simply were not necessarily the ones most aligned with evidence and clinical nuance.

All models missed the readability target, even in a parent-education context.

That’s a big deal because the entire premise is “help parents decide.” If the output reads like a textbook, you’re not improving health literacy—you’re outsourcing confusion.

Controversial

A model can sound like an expert and still be wrong, incomplete, or misleading.

The paper repeatedly points to the risk of LLM “hallucinations” and the danger that parents may treat chatbot answers as professional opinion. In healthcare, plausible confidence is not a cute bug; it’s a hazard.

The study reports specific models outperforming others, but models change constantly.

Publishing “model leaderboards” in medicine is inherently time-sensitive. A model update can flip results. That doesn’t invalidate the study; it underscores the deeper point: you can’t treat LLM reliability as a static property.

Valuable

The strongest insight is not “Model X wins,” but “there is no consistent winner across accuracy + completeness + readability.”

That’s the central operational reality for healthcare: quality is multi-dimensional, and optimizing one dimension (ease of reading) can quietly degrade another (clinical correctness).

The paper quantifies how much model choice matters.

The large effect sizes function like a warning label: in medicine, “Which chatbot did you use?” is not a trivial question—it’s a material variable.

Lessons learned

For AI developers

Stop treating readability as a cosmetic afterthought.

In health contexts, readability is not UX polish; it’s safety infrastructure. If a parent can’t understand the explanation, they’ll fill gaps with fear, assumptions, or misinformation.

Build “accuracy-preserving simplification,” not just simplification.

Many systems can summarize; fewer can simplify without distorting medical meaning. That’s the hard part, and it should be a core training/evaluation target.

Design for “clinician-safe” behavior, not “assistant-pleasing” behavior.

A health LLM should be optimized to: (a) clearly state uncertainty, (b) avoid overconfident recommendations, (c) encourage professional evaluation, and (d) surface risks and contraindications appropriately.

Use domain evaluation that reflects real queries and real users.

This study’s strength is that it tested “parent questions,” not abstract benchmarks. Developers should replicate that approach across specialties: the evaluation set should look like the inbox.

Versioning and transparency must become normal.

If outputs change after updates, then patient-facing deployments need explicit versioning, change logs, and ongoing monitoring—not “ship it and pray.”

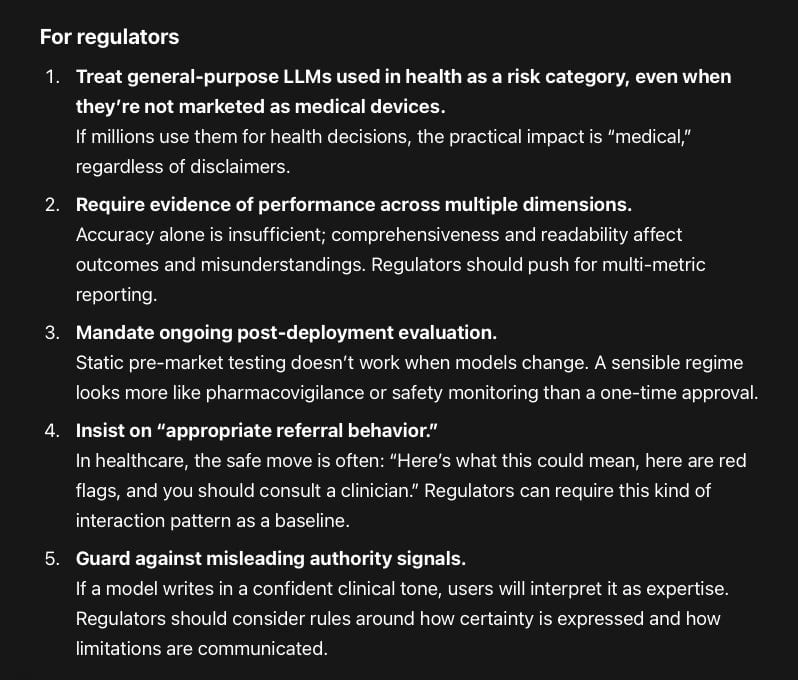

For regulators

Treat general-purpose LLMs used in health as a risk category, even when they’re not marketed as medical devices.

If millions use them for health decisions, the practical impact is “medical,” regardless of disclaimers.

Require evidence of performance across multiple dimensions.

Accuracy alone is insufficient; comprehensiveness and readability affect outcomes and misunderstandings. Regulators should push for multi-metric reporting.

Mandate ongoing post-deployment evaluation.

Static pre-market testing doesn’t work when models change. A sensible regime looks more like pharmacovigilance or safety monitoring than a one-time approval.

Insist on “appropriate referral behavior.”

In healthcare, the safe move is often: “Here’s what this could mean, here are red flags, and you should consult a clinician.” Regulators can require this kind of interaction pattern as a baseline.

Guard against misleading authority signals.

If a model writes in a confident clinical tone, users will interpret it as expertise. Regulators should consider rules around how certainty is expressed and how limitations are communicated.

For AI users (corporate healthcare orgs, clinics, insurers)

Do not deploy “one chatbot” as a single point of truth.

If model choice strongly affects accuracy, you need governance: approved models, approved use cases, and clear boundaries.

Separate use cases: education vs decision support.

A model that’s readable but less accurate may be acceptable for general orientation with strong guardrails, but not for treatment guidance.

Add human-in-the-loop where it matters.

Especially in pediatric contexts: use AI for drafting explanations, FAQs, and visit prep, but ensure clinician review for anything that could steer treatment decisions.

Create “AI literacy” for staff and patients.

Patients will use these tools anyway. Clinics should proactively teach: “What this is good for, what it’s bad for, and when to ignore it.”

For individual consumers (parents, patients)

Use AI as a question-generator, not an answer-engine.

The best safe use is: “What should I ask my orthodontist? What options exist? What are red flags?”

Be wary of confident specificity.

If the chatbot gives you definitive statements about your child’s best timing, appliance choice, or predicted outcome without an exam and imaging, treat that as suspect.

Demand context: risks, uncertainty, alternatives.

If an answer lacks these, it’s incomplete—even if it sounds reassuring.

What this means for the future (predictions)

Healthcare will move toward “approved LLM stacks,” not open-ended chatbot choice.

As evidence accumulates that model selection materially changes reliability, institutions will standardize: specific model versions, specific prompts, specific guardrails, audit trails.

Readability will become a regulated safety feature.

Not because it’s nice, but because incomprehensible medical text is a predictable pathway to harm. Expect “grade level and clarity targets” to appear in procurement requirements and clinical governance.

We’ll see a split between “medical-grade LLMs” and “general LLMs with medical vibes.”

General models will still be used, but high-risk contexts will increasingly demand domain-validated systems with monitoring, citations, and safer refusal/referral patterns.

The core battleground will be “trustworthy simplification.”

The winning systems won’t merely be the most accurate or the most fluent. They’ll be the ones that can translate clinical reality into patient-usable language without creating false certainty.

Clinician communication will change, because patients will arrive with AI narratives.

Whether clinicians like it or not, AI will become a pre-visit influence layer. The future clinic visit increasingly starts with: “Show me what the chatbot told you,” followed by correction, reframing, and risk calibration.

The real risk isn’t just wrong answers; it’s misplaced confidence and delayed care.

The most damaging failure mode is not “AI says something weird.” It’s “AI sounds credible, a parent feels reassured (or panicked), and the timing of intervention shifts.” This is especially relevant in early orthodontics where timing can matter.