- Pascal's Chatbot Q&As

Archive

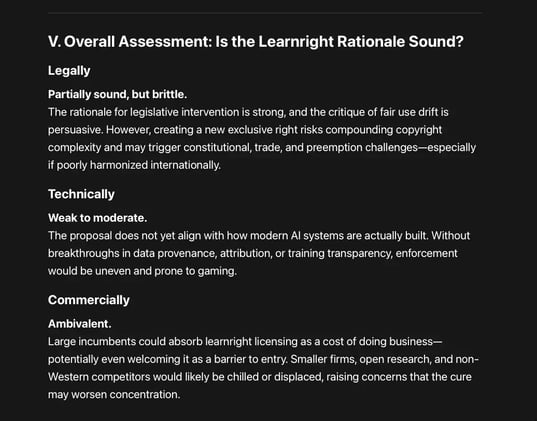

One of the most ambitious copyright reform proposals yet aimed at generative AI: the creation of a new exclusive right—a “learnright”—that would require AI developers to license copyrighted works...

...used for model training. Its central solution raises serious feasibility concerns, particularly when assessed against real-world AI development practices, global competition, and technical realities.

AI reshapes leadership skills in deeper, structural ways. AI does not replace top managers—but it fundamentally changes the skills they must master.

A study identifies four interlinked leadership skills that are becoming essential in AI-driven organizations. AI makes leadership harder, not easier.

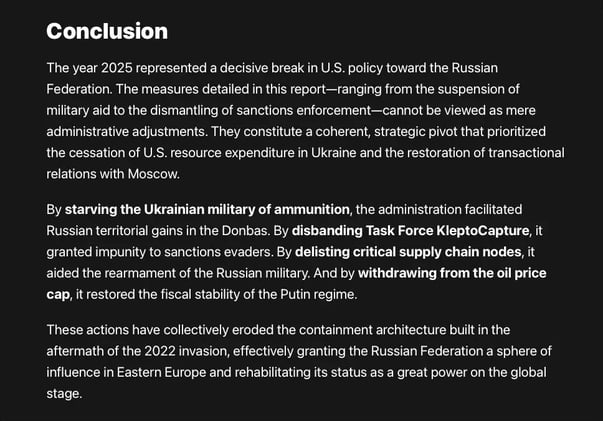

The year 2025 represented a decisive break in U.S. policy toward the Russian Federation. These actions have collectively eroded the containment architecture built in the aftermath of the 2022 invasion

...effectively granting the Russian Federation a sphere of influence in Eastern Europe and rehabilitating its status as a great power on the global stage.

This case is a stress test for whether constitutional guarantees and universal human-rights commitments retain practical force when they conflict with an administration’s ideological agenda.

The most dangerous precedent at stake is not about gender identity per se, but about whether the state may govern by erasure, humiliation, and attrition rather than by law.

The complaints against Meta and ByteDance argue that AI developers did not merely ingest publicly available content, but deliberately broke through access controls imposed by YouTube...

...to obtain training data at industrial scale, transforming alleged “viewing” into unlawful access, copying, and commercialization.

In prioritizing immediate institutional convenience and industry alignment, academia weakened a rare attempt at meaningful AI safety regulation and compromised its own ethical authority.

Their comparative advantage lies precisely in long-term thinking, public accountability, and principled governance. Reclaiming that role is not only in society’s interest—it is in academia’s own.

Shareholder proposals are exposing the growing gap between AI’s real-world power and the weak institutional structures overseeing it.

Companies that continue to treat AI governance as optional or cosmetic are not merely risking public criticism; they are accumulating latent legal, regulatory, and financial risk.

Even if systems like Grok are initially deployed for logistics, analysis, or workflow optimisation, institutional drift is predictable.

Over time, AI systems tend to shape priorities, influence discretionary decisions, and create “risk scores” or classifications that are difficult to challenge.

'AI Is Not a Natural Monopoly' is a necessary corrective to regulatory overconfidence. However, the paper’s narrow focus risks understating where real, durable power may accumulate:

not only in models, but in infrastructure, standards, governance, and dependency relationships. The absence of monopoly pricing does not imply the absence of systemic dominance.

The complaint alleges a deliberate, repeated, and knowing acquisition of copyrighted books from shadow libraries (LibGen, Z-Library, Bibliotik, Books3, PiLiMi) followed by systematic copying...

...during ingestion, preprocessing, deduplication, training, fine-tuning, and in some cases retrieval-augmented generation (RAG).