- Pascal's Chatbot Q&As

Archive

Britannica and Merriam-Webster: OpenAI is turning the web’s best reference content into an answer engine that (1) copies, (2) competes, and (3) sometimes lies...

...while wearing the plaintiffs’ brands like a lab coat. Copyright theft that allegedly enables substitution, and trademark harm that allegedly weaponizes confusion.

Meta allegedly sold AI-enabled smart glasses by leaning hard on “privacy-by-design” marketing—while failing to clearly disclose that...

...users’ recordings and AI interactions could be transmitted to Meta’s cloud and then routed to overseas human reviewers for labeling and quality assurance.

The UK government’s best path is to stop treating this as a binary choice (“exception” vs “no exception”) and instead build a two-sided market architecture...

...where AI innovation depends on lawful, auditable access to high-quality content—and where creators can realistically enforce and monetise their rights.

Chicken Soup for the Soul & BMG v. Anthropic: a litigation strategy that treats generative AI as a copyright supply chain.

The core injury is not merely downstream outputs, but upstream mass copying—especially copying allegedly sourced from shadow libraries—followed by years of compounding commercial benefit.

Fortis Advisors v. Krafton: An AI chatbot was used as a strategic adviser for a “takeover” playbook. Not as a drafting helper, but as a source of structured pressure tactics,...

...messaging strategy, operational choke points, and litigation preparation—and the company then followed much of it in practice.

The World Intellectual Property Organisation (WIPO) launched the Artificial Intelligence Infrastructure Interchange (AIII).

A Technical Exchange Network has been assembled with over 90 experts from dozens of countries, spanning technology firms, AI developers, rightsholders, individual creators, academia, and civil society.

AI will force the industry to decide what peer review is for. If peer review is primarily a throughput mechanism for career signaling, AI will perfect the factory.

If peer review is a practice for testing claims and stewarding knowledge, AI can help, provided humans keep authority over judgment and incentives stop demanding that the system outrun its own legitimacy.

Safety and integrity becomes structurally subordinate when its success metrics (reduced harm, reduced exposure) collide with the company’s growth metrics (time spent, shares, comments, ad inventory).

In that environment, “harm” isn’t a bug; it becomes a predictable by-product of the optimization target.

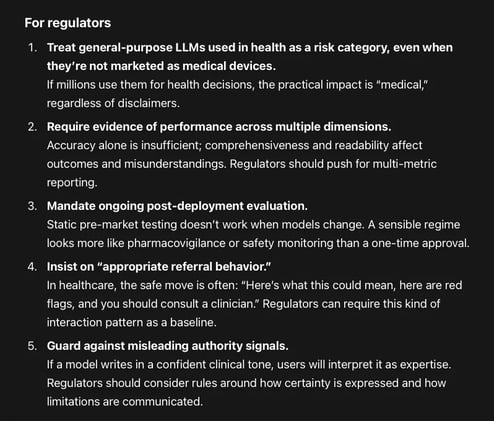

When eight popular large language models (LLMs) answer common parent questions about early maxillary expansion, how reliable are they—and how readable is what they say?

None of the models achieved “ideal patient education readability.” Even the “most readable” models were still too complex relative to common health communication guidance.