- Pascal's Chatbot Q&As

The UK government’s best path is to stop treating this as a binary choice (“exception” vs “no exception”) and instead build a two-sided market architecture...

...where AI innovation depends on lawful, auditable access to high-quality content—and where creators can realistically enforce and monetise their rights.

“The UK’s Copyright–AI Fork in the Road: Build a Licensing Economy, Not a Scraping Exception”

by ChatGPT-5.2

The government’s March 2026 Report on Copyright and Artificial Intelligence and its companion Copyright and AI Impact Assessment read like a policy system trying to do two difficult things at once: (1) keep the UK a genuinely investable home for human creativity, and (2) keep the UK a genuinely attractive place to build and deploy AI. The documents concede something important that, politically, is both honest and destabilising: the evidence base is thin, the market is moving faster than law, and the international landscape may determine outcomes more than any single UK reform. That is why the government retreats from its previously “preferred” direction (a broad text-and-data-mining exception with opt-out/rights reservation) and pivots to a “do not legislate yet, build the plumbing first” approach—especially around transparency, technical standards, licensing infrastructure, and enforcement capacity.

But the most valuable subtext is this: the UK is deciding whether it wants an AI ecosystem that is fed by permissioned, high-quality, provenance-rich content (a licensing economy), or an AI ecosystem that is fed by ambiguity, leakage, and endless disputes (a scraping economy). The report implicitly argues that the former is the only sustainable path if the UK wants both creator legitimacy and durable AI innovation.

Key findings and conclusions of the report (and what they imply)

1) The government no longer backs the “opt-out exception” approach

The consultation option that previously looked like the government’s preferred policy, a broad exception for AI training with an opt-out/rights reservation mechanism, has lost political legitimacy and practical confidence. Creators argued it would permit uncompensated training on their works at scale, while placing the operational burden on right holders to “opt out” across a fragmented internet. AI developers argued it might still be more restrictive than other jurisdictions’ regimes and would not actually deliver competitiveness. The government’s conclusion: it is no longer the preferred way forward, and more evidence is needed before altering copyright rules.

Implication: the UK is implicitly acknowledging that “opt-out” is not a credible substitute for a functioning licensing market and enforceable transparency.

2) The UK’s real problem is not only copyright law—it’s enforceability and information asymmetry

Across the report’s themes, the same bottleneck reappears: right holders cannot reliably determine what was used, by whom, when, and for what. Without transparency, enforcement becomes prohibitively expensive, especially for individuals and SMEs. The report treats transparency as the precondition for any fair market: you can’t license what you can’t see; you can’t litigate what you can’t prove.

Implication: the next policy battle is likely to be less “exception vs no exception” and more “mandatory disclosure vs voluntary disclosure,” plus who pays and who audits.

3) Technical tools and standards matter—but “machine-readable opt-out” risks becoming a trap

The report recognises that technical standards (robots.txt, crawler governance, permissions, provenance metadata, registries) are central to controlling access and enabling licensing. But it also documents widespread concern that machine-readable opt-outs, in the context of a broad exception, shift administrative cost and monitoring burden onto right holders—again disproportionately harming individuals and SMEs—while bad actors ignore signals anyway.

Implication: technical standards are necessary, but they cannot be the sole enforcement layer or the primary burden on creators. Standards must be paired with compliance duties on developers (identity, logging, disclosures, auditability).
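For readers less familiar with how a machine-readable opt-out works in practice, here is a minimal Python sketch using the standard library’s `urllib.robotparser`. The robots.txt content and the crawler names `ExampleAIBot` and `SearchBot` are hypothetical. It shows the check a well-behaved crawler runs before fetching a page, and why that check is only a signal: the crawler performs it voluntarily, against whatever identity it chooses to declare.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: this site opts one (fictional) AI crawler out
# entirely and keeps /private/ off-limits to everyone else.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def may_fetch(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt permits user_agent to fetch url.

    This is the check a *compliant* crawler chooses to run. The protocol
    cannot stop a crawler from skipping it or from declaring another name.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://example.com/articles/essay-1"
    print(may_fetch(ROBOTS_TXT, "ExampleAIBot", page))  # False: opted out
    print(may_fetch(ROBOTS_TXT, "SearchBot", page))     # True: general rule applies
    print(may_fetch(ROBOTS_TXT, "SearchBot", "https://example.com/private/draft"))  # False
```

Nothing in the protocol stops the same AI crawler from announcing itself as `SearchBot` and getting `True`, which is why the signal alone cannot serve as the enforcement layer.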

4) Licensing is growing, but the market needs “market infrastructure,” not government price-setting

The report’s licensing stance is pragmatic: do not legislate a one-size-fits-all licensing regime yet; let commercial negotiations evolve—but strengthen the conditions that make markets work (notably transparency and rights metadata). It also flags that identifying right holders and pricing value is hard, and that the per-work “transactional value” can be tiny—pointing to aggregation, intermediaries, collective mechanisms, and new marketplaces.

A key development here is the government-backed Creative Content Exchange (CCE) pilot concept: a trusted marketplace to license digitised cultural/creative assets.

Implication: government is positioning itself as a market-shaper (infrastructure, standards, incentives), not a central price regulator—at least for now.

5) Enforcement must be effective and accessible, or rights become theoretical

The report frames enforcement as a competitiveness issue: the UK should remain a strong IP jurisdiction, but enforcement must be “effective, accessible and proportionate.” It recognises creators’ view that transparency is a prerequisite, and notes arguments for applying UK copyright expectations to models trained overseas via market access conditions.

Implication: expect pressure for (a) a regulator or oversight mechanism for transparency compliance, and (b) a UK “market access” concept: if you sell AI here, you meet baseline disclosure and rights-respecting duties.

6) The government is open to removing copyright protection for purely computer-generated works

The report states that UK protection for computer-generated works (since 1988) departs from copyright’s rationale (rewarding human creativity), and proposes removing that protection absent evidence of value—while retaining protection for AI-assisted human works.

Implication: this is a cultural signal. UK policy is leaning toward “copyright is for humans,” while leaving space for commercial protection via contract, database rights, or other regimes.

7) Digital replicas are now treated as a mainstream rights gap—potentially requiring a new “personality right”

The report is blunt that existing UK law does not fully address unauthorised digital replicas of voice/face and that redress pathways are unreliable, especially for less famous individuals. There is strong support among stakeholders for exploring a new personality right or similar enhanced protections.

Implication: the UK is edging toward a “digital identity” protection layer that sits alongside copyright, because copyright is structurally the wrong tool for “likeness” harms.

8) The Impact Assessment makes the trade-offs explicit—and refuses to pick a winner yet

The Impact Assessment does not monetise costs/benefits and explicitly states it does not establish a preferred option. Still, it sketches the policy physics:

Option 0 (status quo): licensing market likely grows, but UK remains constrained for competitive foundation model training; adoption may be inhibited.

Option 1 (strengthen copyright / licensing required in all cases + transparency/market access): better for right holder remuneration and enforceability, but may risk model withdrawal/delay if compliance costs exceed UK market returns.

Option 2 (broad exception, no opt-out): most enabling for AI competitiveness, but risks undermining incentives to invest in creative output.

Option 3 (exception with rights reservation): depends heavily on how effective opt-out is; unclear legal effect if training occurs elsewhere; uncertain impact on creative industries and licensing.

Implication: the UK is admitting that it cannot “legislate its way” to AI competitiveness if training geography and permissive overseas regimes dominate; instead, it must compete on trustworthy data access, legal certainty, and high-quality content pipelines.

What this means for UK creators and rights holders

You have won time—but not certainty. The retreat from opt-out means the government is not (yet) granting a default permission layer to AI training. That reduces immediate existential shock, but it also postpones clarity about what compliance will look like at scale.

Transparency is becoming your battleground—and your leverage. If the UK implements meaningful input/output transparency, creators gain negotiating power (licensing becomes measurable) and enforcement becomes less asymmetrical. Without it, licensing becomes charity and litigation becomes a rich-company sport.

Small creators and SMEs are the policy “stress test.” The report repeatedly acknowledges that burdens (opt-outs, monitoring, enforcement) hit smaller right holders hardest. If UK policy doesn’t solve this, the outcome will be: large publishers and labels negotiate; everyone else gets harvested.

Digital identity harms are being separated from copyright harms. The push toward personality rights reflects a growing recognition that copyright cannot carry the whole load. Performers and public figures (and increasingly ordinary people) are likely to gain a new legal storyline for voice/face misuse.

A licensing economy is possible, but only if the UK builds rails, not slogans. Rights metadata, registries, crawler compliance norms, and trusted marketplaces are the unglamorous infrastructure that makes “permissioned AI” real.

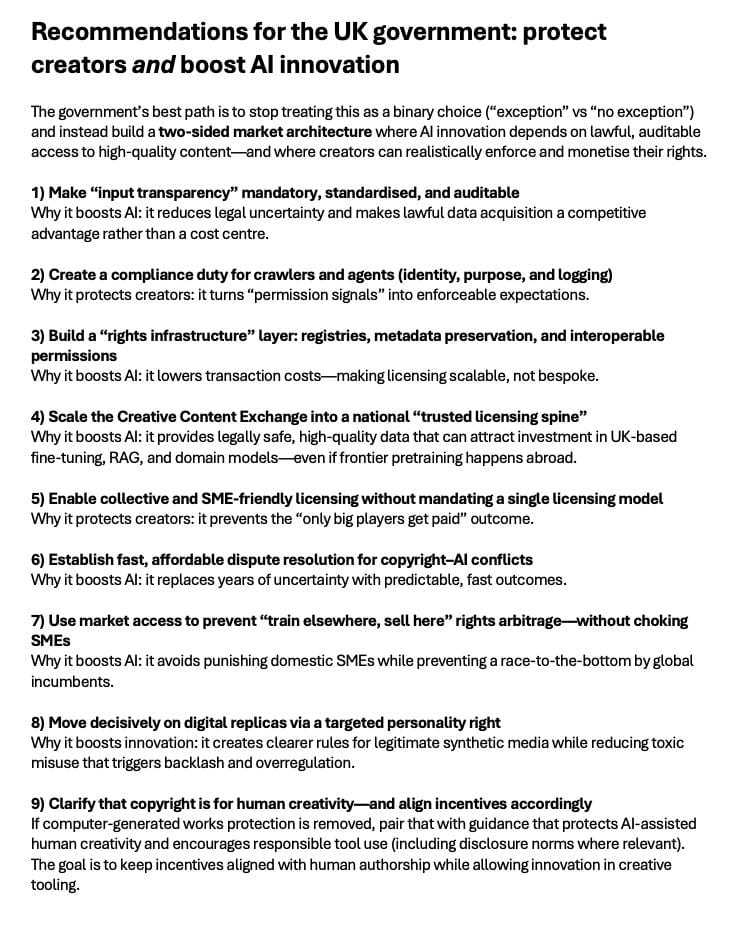

Recommendations for the UK government: protect creators and boost AI innovation

The government’s best path is to stop treating this as a binary choice (“exception” vs “no exception”) and instead build a two-sided market architecture where AI innovation depends on lawful, auditable access to high-quality content—and where creators can realistically enforce and monetise their rights.

1) Make “input transparency” mandatory, standardised, and auditable

Adopt a UK transparency regime that requires foundation model providers and major deployers to disclose meaningful information about training inputs and acquisition methods, at a level that enables licensing and enforcement without unnecessarily exposing trade secrets. Minimum viable disclosure should include dataset source categories, acquisition pathways (licensed, crawled, user-provided, synthetic, public domain) and crawler identities, backed by recordkeeping duties.

Why it boosts AI: it reduces legal uncertainty and makes lawful data acquisition a competitive advantage rather than a cost centre.
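To make “standardised and auditable” more concrete, the Python sketch below models one possible machine-readable shape for such a disclosure. The class and function names, the fields, and the rule that crawled sources must name a crawler are illustrative assumptions, not anything the report specifies; only the five acquisition pathways come from the recommendation itself. The summary aggregates by pathway, one way of giving right holders a usable picture without listing individual works.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Optional


class Pathway(Enum):
    """The acquisition pathways listed in the recommendation."""
    LICENSED = "licensed"
    CRAWLED = "crawled"
    USER_PROVIDED = "user-provided"
    SYNTHETIC = "synthetic"
    PUBLIC_DOMAIN = "public domain"


@dataclass(frozen=True)
class SourceDisclosure:
    """One hypothetical line item in a provider's training-input disclosure."""
    source_category: str              # e.g. "news articles", "music recordings"
    pathway: Pathway
    crawler_id: Optional[str] = None  # which crawler collected it, if crawled
    documents: int = 0

    def __post_init__(self) -> None:
        # Assumed rule: crawled data must be attributable to a named crawler.
        if self.pathway is Pathway.CRAWLED and not self.crawler_id:
            raise ValueError("crawled sources must name the crawler that collected them")


def summarise(disclosures: List[SourceDisclosure]) -> Dict[str, int]:
    """Total document counts per pathway, without naming individual works."""
    totals: Counter = Counter()
    for d in disclosures:
        totals[d.pathway.value] += d.documents
    return dict(totals)


if __name__ == "__main__":
    # Toy numbers, for illustration only.
    report = [
        SourceDisclosure("news articles", Pathway.LICENSED, documents=1200),
        SourceDisclosure("blogs", Pathway.CRAWLED, crawler_id="ExampleAIBot/1.0", documents=5000),
        SourceDisclosure("forums", Pathway.CRAWLED, crawler_id="ExampleAIBot/1.0", documents=300),
        SourceDisclosure("out-of-copyright books", Pathway.PUBLIC_DOMAIN, documents=800),
    ]
    print(summarise(report))  # {'licensed': 1200, 'crawled': 5300, 'public domain': 800}
```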

2) Create a compliance duty for crawlers and agents (identity, purpose, and logging)

If robots.txt and similar standards are to matter, the UK should require “good-faith crawler behaviour” for commercial AI: verified crawler identity, declared purpose (training vs RAG vs indexing), rate limits, and retention of access logs. Pair this with penalties for spoofing/undisclosed crawling.

Why it protects creators: it turns “permission signals” into enforceable expectations.
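A crawler that meets these duties might look something like the following Python sketch. `CompliantCrawler`, the `Purpose` values, the one-request-per-interval-per-host rate limit and the log fields are all hypothetical choices for illustration. Note that it only *declares* identity and purpose; genuinely *verified* identity would need something extra (for example, signed requests or published IP ranges), which is not shown here.

```python
import time
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List
from urllib.parse import urlparse


class Purpose(Enum):
    TRAINING = "training"
    RAG = "rag"
    INDEXING = "indexing"


@dataclass(frozen=True)
class AccessLogEntry:
    timestamp: float
    crawler_id: str
    purpose: Purpose
    url: str
    allowed: bool


@dataclass
class CompliantCrawler:
    """Hypothetical crawler: declared identity and purpose, a per-host
    rate limit, and a log of every attempted request."""
    crawler_id: str
    purpose: Purpose
    min_interval_s: float = 1.0
    clock: Callable[[], float] = time.monotonic
    log: List[AccessLogEntry] = field(default_factory=list)
    _last_hit: Dict[str, float] = field(default_factory=dict, init=False, repr=False)

    def user_agent(self) -> str:
        # Declaring purpose lets a site tell training crawls from RAG or indexing.
        return f"{self.crawler_id} (purpose={self.purpose.value})"

    def request(self, url: str) -> bool:
        """Log an attempted fetch; refuse it if the host was hit too recently."""
        host = urlparse(url).netloc
        now = self.clock()
        last = self._last_hit.get(host)
        allowed = last is None or now - last >= self.min_interval_s
        if allowed:
            self._last_hit[host] = now
            # A real crawler would send the HTTP request here, using user_agent().
        self.log.append(AccessLogEntry(now, self.crawler_id, self.purpose, url, allowed))
        return allowed
```

Injecting the clock keeps the rate-limit logic testable, and logging refused requests as well as allowed ones is what would let an auditor reconstruct a crawler’s behaviour after the fact.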

3) Build a “rights infrastructure” layer: registries, metadata preservation, and interoperable permissions

The report notes concerns about metadata removal and the need for scalable preferences across many works. Government should fund and convene industry to implement: persistent rights metadata standards, provenance signals, and registries that allow creators to set permissions once and have them propagate.

Why it boosts AI: it lowers transaction costs—making licensing scalable, not bespoke.
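The “set permissions once and have them propagate” idea can be reduced to a lookup keyed by a persistent work identifier carried in each copy’s metadata. The Python sketch below is a hypothetical `RightsRegistry`, not a description of any existing registry; the `work_id` metadata field and the `Permission` fields are assumptions. It also shows the failure mode the report worries about: once the identifier is stripped, the permission can no longer be found.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Permission:
    training: bool  # may the work be used to train models?
    rag: bool       # may it be retrieved at inference time (RAG)?
    contact: str    # where a prospective licensee can ask for terms


class RightsRegistry:
    """Hypothetical registry: permissions are set once per work ID, and any
    copy whose metadata still carries that ID resolves to them."""

    def __init__(self) -> None:
        self._by_work_id: Dict[str, Permission] = {}

    def register(self, work_id: str, permission: Permission) -> None:
        self._by_work_id[work_id] = permission

    def lookup(self, metadata: Dict[str, str]) -> Optional[Permission]:
        work_id = metadata.get("work_id")
        if work_id is None:
            # Identifier stripped from this copy: the permission is unreachable.
            return None
        return self._by_work_id.get(work_id)


if __name__ == "__main__":
    registry = RightsRegistry()
    registry.register("work-0001", Permission(training=False, rag=True,
                                              contact="licensing@example.com"))
    # Two copies on different hosts: one with metadata intact, one stripped.
    print(registry.lookup({"work_id": "work-0001", "host": "mirror-a.example"}))
    print(registry.lookup({"host": "mirror-b.example"}))  # None
```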

4) Scale the Creative Content Exchange into a national “trusted licensing spine”

Treat the Creative Content Exchange pilot as a strategic industrial asset: a trusted marketplace for permissioned datasets, with clear licensing templates, provenance guarantees, and “compliance by design.” Connect it to universities, cultural institutions, publishers, music/film archives, and SMEs.

Why it boosts AI: it provides legally safe, high-quality data that can attract investment in UK-based fine-tuning, RAG, and domain models—even if frontier pretraining happens abroad.

5) Enable collective and SME-friendly licensing without mandating a single licensing model

Don’t force a single compulsory licensing scheme now, but actively enable multiple aggregation routes: collective management options where appropriate, trusted intermediaries, and sectoral licensing hubs. The government’s role should be to remove blockers (transparency, identity, metadata, template contracts), and ensure fair participation for individuals and SMEs.

Why it protects creators: it prevents the “only big players get paid” outcome.
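The report’s point that per-work value can be tiny is easier to see with a pooled licence. The Python sketch below assumes one simple distribution rule, pro-rata by number of works used, with largest-remainder rounding so payouts always sum exactly to the pool. Real collective management schemes use a variety of rules; the function name, holder names and figures here are invented for illustration.

```python
from typing import Dict


def distribute_pro_rata(pool_pence: int, works_used: Dict[str, int]) -> Dict[str, int]:
    """Split a pooled licence fee across right holders in proportion to how
    many of their works were used, in whole pence (largest-remainder rounding)."""
    total_works = sum(works_used.values())
    if total_works == 0:
        return {holder: 0 for holder in works_used}
    # Integer floor of each holder's exact share, and what that floor dropped.
    floors = {h: pool_pence * n // total_works for h, n in works_used.items()}
    remainders = {h: pool_pence * n % total_works for h, n in works_used.items()}
    leftover = pool_pence - sum(floors.values())
    # Give the leftover pennies to the holders with the largest remainders.
    for h in sorted(remainders, key=lambda k: remainders[k], reverse=True)[:leftover]:
        floors[h] += 1
    return floors


if __name__ == "__main__":
    # Invented figures: a £1,000 pool (100,000p) and the works each holder had used.
    payouts = distribute_pro_rata(100_000, {"publisher": 9_000, "indie_author": 7, "photographer": 3})
    print(payouts)  # {'publisher': 99889, 'indie_author': 78, 'photographer': 33}
```

A 78p payment would never justify its own negotiation, but as a line item in an aggregated deal it can still be paid, which is the “only big players get paid” problem in miniature.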

6) Establish fast, affordable dispute resolution for copyright–AI conflicts

The report repeatedly implies that litigation costs, especially the cost of finding out what was actually used, are prohibitive. The UK should create a specialist mechanism (a tribunal, an ombudsman scheme, or a regulator-backed process) for transparency disputes, licensing breakdowns, and clear cases of unauthorised use, designed for speed and affordability.

Why it boosts AI: it replaces years of uncertainty with predictable, fast outcomes.

7) Use market access to prevent “train elsewhere, sell here” rights arbitrage—without choking SMEs

Develop a proportional “UK market access” compliance tier for major commercial models/services: if you operate at scale in the UK, you must meet baseline transparency and recordkeeping standards and demonstrate lawful access pathways (or credible risk management). Exemptions or lighter-touch duties can apply for small UK startups building at the application layer.

Why it boosts AI: it avoids punishing domestic SMEs while preventing a race-to-the-bottom by global incumbents.

8) Move decisively on digital replicas via a targeted personality right

Proceed with developing a personality right (or equivalent) focused on voice/face misuse, consent, and commercial exploitation—separate from copyright. Make remedies accessible to ordinary people, not just celebrities.

Why it boosts innovation: it creates clearer rules for legitimate synthetic media while reducing toxic misuse that triggers backlash and overregulation.

9) Clarify that copyright is for human creativity—and align incentives accordingly

If computer-generated works protection is removed, pair that with guidance that protects AI-assisted human creativity and encourages responsible tool use (including disclosure norms where relevant). The goal is to keep incentives aligned with human authorship while allowing innovation in creative tooling.

Closing: the UK’s competitive advantage is “trustworthy AI,” not “cheapest possible training”

The documents quietly point to an uncomfortable truth: the UK is unlikely to outcompete the US or China on raw frontier pretraining by simply loosening copyright. But it can lead on something more durable: a high-trust AI economy built on permissioned data, enforceable transparency, and credible rights protection—the kind of environment where creators keep investing in new work and AI companies can innovate without living in court.

If the UK builds the rails—transparency, standards, marketplaces, SME-accessible enforcement—it can protect creators and make lawful access to premium content a magnet for AI investment. If it doesn’t, it will get the worst of both worlds: creator backlash plus AI uncertainty, while training happens elsewhere anyway.
