- Pascal's Chatbot Q&As

- Page 15

Archive

The same structural and psychological forces that mandate human presence in the office are likely to serve as a bulwark against the total roboticization of society,

...as the desire for human social hierarchy, prestige, and the maintenance of a visible “entourage” remains a fundamental driver of those who control capital and decision-making.

A popular new model release makes dazzling output easy; users flood the internet with impressive fan-adjacent creations; rightsholders see their franchises reproduced at industrial scale;

...lawyers send letters; the AI companies respond with a mix of hurried safeguards, selective blocking, and carefully worded statements that reveal as much about incentives as they do about compliance.

Individuals in high-level leadership positions are frequently influenced by the same psychological mechanisms that fuel mass movements, leading to a staunch refusal to process sound evidence...

...or execute on expert advice. Any external criticism of their leader is interpreted as a direct assault on the individual’s own intelligence, judgment, and character.

AI changes what customers perceive as value. If the perceived value becomes “time-to-draft” and “time-to-decision,” the customer may accept higher error rates for many tasks...

...using premium sources only for escalation. In other words: publishers may still own the “source of truth,” while someone else owns the “place where truth is consumed.”

The following analysis details the twenty-five primary frictions governing the success of AI scaling, followed by an examination of the systemic consequences of this institutional impasse.

The future will not be decided by the intelligence of the models, but by the resilience and adaptability of the societies they inhabit.

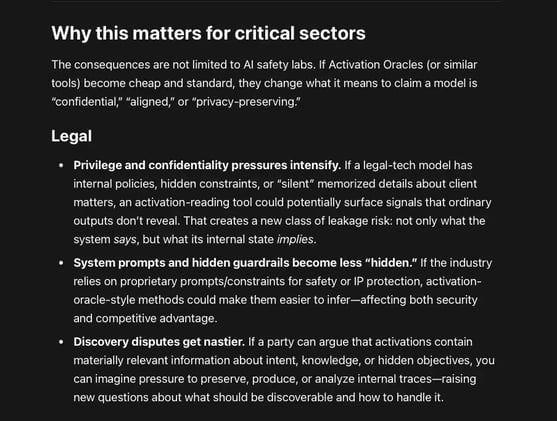

Activation Oracles (AOs): large language models that are trained to “read” another model’s internal activations (the huge arrays of numbers produced inside the network while it thinks)

A model can refuse to disclose something in its outputs, yet still carry that information in an internal form that another model can translate into words. Controls must not only cover outputs...

EPSTEIN CASE: The total volume of data available to the FBI and DOJ exceeds 14.6 terabytes, yet the public repository released on January 30, 2026, encompasses only about 300 gigabytes.

This raises critical questions regarding institutional transparency, the integrity of the release process, and the potential concealment of high-profile co-conspirators.

AI as a Justice-System Risk Multiplier: (1) implementation mistakes, (2) questionable “fit” to the real-world population, and (3) legal/ethical fragility around data and proxies.

The model becomes a quiet policy lever, nudging outcomes across thousands of cases while leaving only a faint trace of how much it influenced each decision.

If a client (or potentially a lawyer) runs facts, theories, timelines, or “talking points for counsel” through a third-party AI tool...

...a court may treat the resulting materials less like private draft communications and more like ordinary third-party research—discoverable by the other side.