- Pascal's Chatbot Q&As

Archive

The United States no longer faces a world where military superiority can be uncoupled from economic dependence. Instead, it operates within an “era of economic entrapment”...

...where its most sophisticated technology, its defense industrial base, and even the health and nutrition of its citizens are anchored to supply chains controlled by strategic rivals.

The Architecture of Managed Reality: Information Control, the Utopian Mirage of Free Speech, and the Technical Path to Uncensorable Knowledge. The “system administrators” of the prevailing reality.

This report investigates the historical and philosophical premise that total information freedom has always been an impossibility, serving instead as a utopian “marketing vehicle”.

Today, the influence of Durie Tangri alumni extends beyond the courtroom, permeating the in-house legal departments of Alphabet, Meta, Amazon, and OpenAI...

Litigants on the rights-owner side can exploit the concentration of counsel and the specific precedents set by the Durie Tangri alums to create leverage in both litigation and settlement negotiations.

A stacking of mechanisms that quietly convert speech into a permissioned activity—filtered by platform policy, priced by quasi-legal services, and chilled by corporate litigation strategies.

A society where the boundaries of the sayable are increasingly set by private infrastructure and enforced through automated systems and asymmetric power.

That markets will eventually be composed of interacting autonomous bots—acting on behalf of both retailers and consumers—is no longer a speculative projection.

The systemic drivers moving society toward a model where human oversight is fundamentally removed in favor of structural containment.

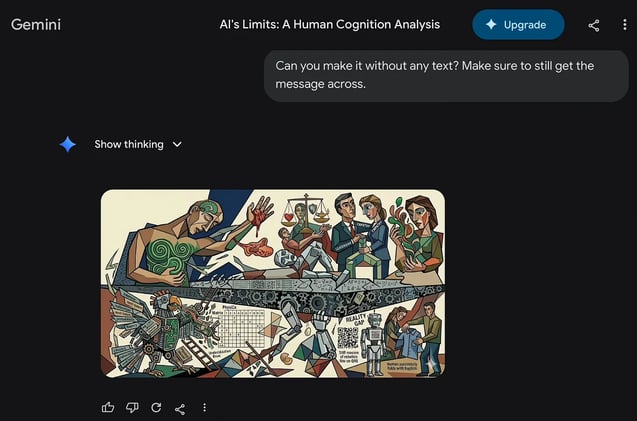

The “alchemy of qualia” remains safely within the biological vessel, and the “stochastic parrot” remains a sophisticated mimic of the language it can never truly speak.

The Computational Ceiling: A Forensic Analysis of Non-Replicable Human Cognition and Agency in the Era of Large Language Models

Paper: LLMs Generate Harmful Content using a Distinct, Unified Mechanism. Regulators might push for evidence that safety isn’t only behavioral but also mechanistic (internal controls, robustness).

If harmful output can be localized and mitigated with limited utility loss, plaintiffs and regulators may argue that failing to do so is negligent—especially in high-stakes deployments.

A map of the current boundaries of artificial intelligence can be constructed, revealing the inherent “reality gap” that defines modern generative systems.

These instances serve as critical forensic reminders that users are interacting not with a reasoning mind, but with a statistical pattern-matching engine.

The Impending Futility of Human Argument: If we allow the utility of debate to be automated away, we surrender the very mechanism that allowed us to create the technology in the first place.

A more insidious and transformative consequence is emerging—one that targets the very mechanism of human collective progress: the act of debate and the search for truth through rhetorical discourse.

The EU isn’t merely talking about “trustworthy AI”, “digital sovereignty”, or “data spaces” anymore—it is paying for the missing operating system that makes those slogans work in practice.

Making AI and digital systems deployable at scale in regulated, high-trust sectors, using standardised infrastructure, trained workforces, and compliance-ready implementation capacity.

The OpenAI probe is not narrowly framed around one incident; it’s pitched as an inquiry into minors, national security, suicide/self-harm concerns, and mass violence in one move.

The language used positions AI as something that can “endanger,” “facilitate criminal activity,” and “empower enemies,” as if the model were an operational participant rather than a communications tool.

Once OpenAI allegedly had specific warning signals that a particular user posed real-world danger, it had a duty to act quickly, decisively, and in ways that went beyond quietly flipping an internal switch.

Should an AI vendor be treated more like a platform hosting speech, or more like a manufacturer shipping a defectively designed product?