- Pascal's Chatbot Q&As

Archive

A bid to relocate the center of gravity of election administration—from the states and Congress to the president—using emergency framing as the crowbar.

If you can persuade enough people that the election system itself is under foreign attack, you can argue that extraordinary executive power is not merely justified but required.

When the world tries to regulate Silicon Valley, Washington reframes the regulation as an attack on innovation, trade, or freedom itself—and then mobilizes.

If the real goal is to protect innovation and civil liberties and security, a smarter approach exists—one that doesn’t require treating other democracies’ sovereignty concerns as illegitimate.

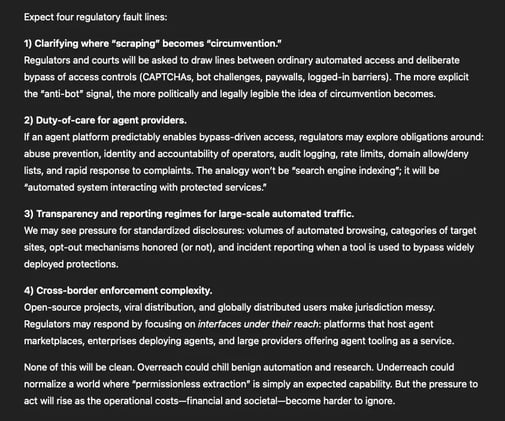

Let the AI agent decide what to extract; let the bypass tool handle how to get in. And when defenders adapt, the attackers adapt again—fast.

The story isn’t really about one bypass tool or one viral agent. It’s about a new equilibrium: as AI agents become ordinary, the internet’s defensive layer becomes a contested battleground.

AI systems generate synthetic media optimized for engagement metrics that may systematically erode judgment, attention, and civic competence.

The ultimate concern is loss of cognitive sovereignty: the ability to form independent judgments when every information encounter has been algorithmically optimized to achieve someone else’s objectives.

The posts reveal the ultimate goal: establishing AI development as a regulatory exception zone where normal rules about data rights, environmental impact, labor protections & antitrust enforcement...

...don’t apply. The cumulative effect creates a parallel legal regime where tech giants operate beyond democratic accountability.

RAND’s message is bracing: export controls for AI and UAS are no longer about guarding a single crown jewel. They are about managing an evolving, contested ecosystem...

...where your own industrial health is part of national security, and where regulatory agility is as important as regulatory strictness.

This report moves the conversation away from “Is the model good?” and toward “Can a reviewing body reconstruct what happened in this case?” In administrative law, that question is decisive.

AI can make public administration look modern, efficient, and even accurate, while quietly making it legally unreviewable.

Ace Cam v. Runway AI tries to reframe the core question from “Is training fair use?” to “Did you unlawfully break through access controls to get the training data in the first place?”

For AI providers, the warning is straightforward: your biggest legal vulnerability may not be your model outputs — it may be your data acquisition pipeline.

Korean broadcasters are seeking both injunctive relief (to stop alleged infringement) and damages, based on allegations that OpenAI trained ChatGPT on their news content without authorization.

The broadcasters put the emphasis on South Korea’s data sovereignty and the practical barriers local rights holders face when suing global AI firms.

The outsourcing of democratic orientation tools to systems that can fabricate facts, distort party positions, and present themselves as neutral while lacking any credible editorial process.

When such tools are wrong, the damage is not merely informational—it can directly alter political behavior, confidence in elections, and trust in institutions.

EPSTEIN CASE: An exhaustive analysis of the locations, methodologies, and materials associated with this concealment strategy, offering a blueprint for future investigative recovery.

A more critical dimension of the financier’s infrastructure has recently come to light: a sophisticated network of off-site storage units used to sequester incriminating digital and physical evidence.