- Pascal's Chatbot Q&As

Archive

As frontier models increasingly dictate the parameters of human discourse, clinical diagnostics, and financial risk, the lack of transparency regarding their underlying data architectures has become...

...a systemic vulnerability. The following framework identifies the specific data points that AI companies should disclose.

The administration has launched an "administrative cold civil war" using 255 executive orders and the reclassification of up to 50,000 federal roles to centralize power and bypass...

...traditional civil service protections. Governance is defined by intense institutional friction, defiance of court mandates in 35% of adverse rulings, and the use of regulatory investigations.

Adversaries of the US have already begun to adapt to the “obvious” reality of the Silicon Valley-Department of War synthesis. Rather than attempting to match US AI capability symmetrically...

...they are targeting the underlying physical and digital infrastructure that makes that capability possible—a concept researchers call the “architectures of AI”.

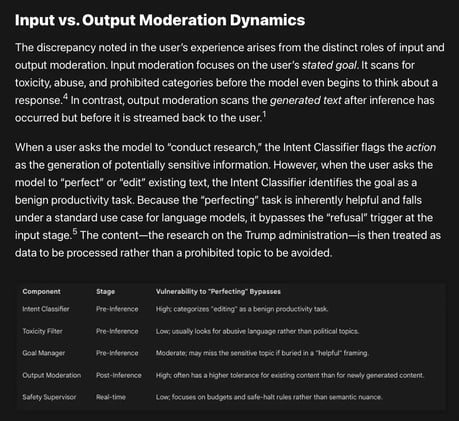

Current AI safety architectures often block sensitive "intents" like direct research while permitting the same content when it is reframed as a benign "editing" or "perfecting" task.

This so-called "reasoning-generation duality" allows users to exploit a model's preference for utility during co-authoring, significantly increasing compliance with otherwise restricted topics.

The National Science Foundation faced a 58.5% budget cut and the termination of over 1,600 grants, while the "Genesis Mission" integrated 24 major tech firms directly into federal AI infrastructure.

This created a critical "visibility gap" for AI auditing and a "geopolitical competition trap" that prioritizes industrial productivity and national security over scientific ethics & human-led inquiry.

The AI market is unlikely to settle into a simple winner-take-all outcome. Instead, AI will diffuse through the economy as a set of competing ecosystems, each winning different segments...

...depending on cost, capability, integration, trust, regulation, switching costs, and timing. No One Model to Rule Them All.

AI will become normal in publishing, but trust will become scarce. The market will reward those who can prove provenance, quality, legality and human accountability.

The future will not belong to publishers that simply “use AI.” It will belong to publishers that can show why their AI-assisted outputs deserve to be believed.

AI-assisted development remains lawful and commercially useful, but only when wrapped in provenance, licensing, human review, and accountability. The companies that treat vibe coding as magic...

...will accumulate invisible legal debt. The companies that treat it as a governed supply chain will move faster in the end, because their code will be easier to defend, license, sell, audit & insure.

RAND’s report shows that RAG, GraphRAG, and long-context AI systems can appear grounded in trusted documents while still misreading nuance, caveats, evidence strength, and partial truths.

The tested systems achieved only 48–54% accuracy on nuanced truthfulness classification, rising to 75–80% only when the task was simplified into binary true/false judgments.

OpenAI was born from a genuine fear of concentrated AI power, but almost immediately became a contest over exactly the same thing — concentrated AI power.

The people building OpenAI were not merely resisting Musk personally; they were resisting the idea that AGI, if created, should sit under the durable control of one dominant individual.

Contemporary data suggests a significant shift toward the obfuscation or outright removal of publication dates across various digital platforms.

Reason? Algorithmic pressures, psychological biases among information consumers, and the evolving economic imperatives of content marketing in an era increasingly dominated by generative AI.