Pascal's Chatbot Q&As

The uncomfortable truth is that AI chat is becoming a new kind of societal sensor—one that is commercially operated, globally scaled, and only partially legible to outsiders.

The choice is whether scanning of chats evolves into accountable safety infrastructure with hard limits—or drifts into unaccountable surveillance theatre that fails at prevention and harms trust.

The Safety Paradox: When Your Chatbot Hears a Threat, Who Has to Act?

by ChatGPT-5.2

This TechCrunch report describes a grim, modern dilemma: an individual later suspected of carrying out a mass shooting allegedly used ChatGPT in ways that alarmed staff; the chats were flagged by internal misuse-monitoring tools and the account was banned months before the attack, yet the company reportedly debated—then declined—to alert law enforcement until after the incident. The core tension is not whether “the system worked” (it detected and enforced policy), but whether detection creates a moral and legal duty to escalate beyond the platform when the stakes might be life and death.

That question is going to become unavoidable, because the underlying capability—scanning for misuse signals, triaging risk, and escalating for human review—is not a quirky OpenAI one-off. It is quickly becoming baseline infrastructure across major AI providers, for three reasons: (1) providers must enforce usage policies at scale; (2) they face reputational and liability exposure when systems are used for harm; and (3) regulators are increasingly treating “systemic risk management” as a product requirement, not a voluntary virtue.

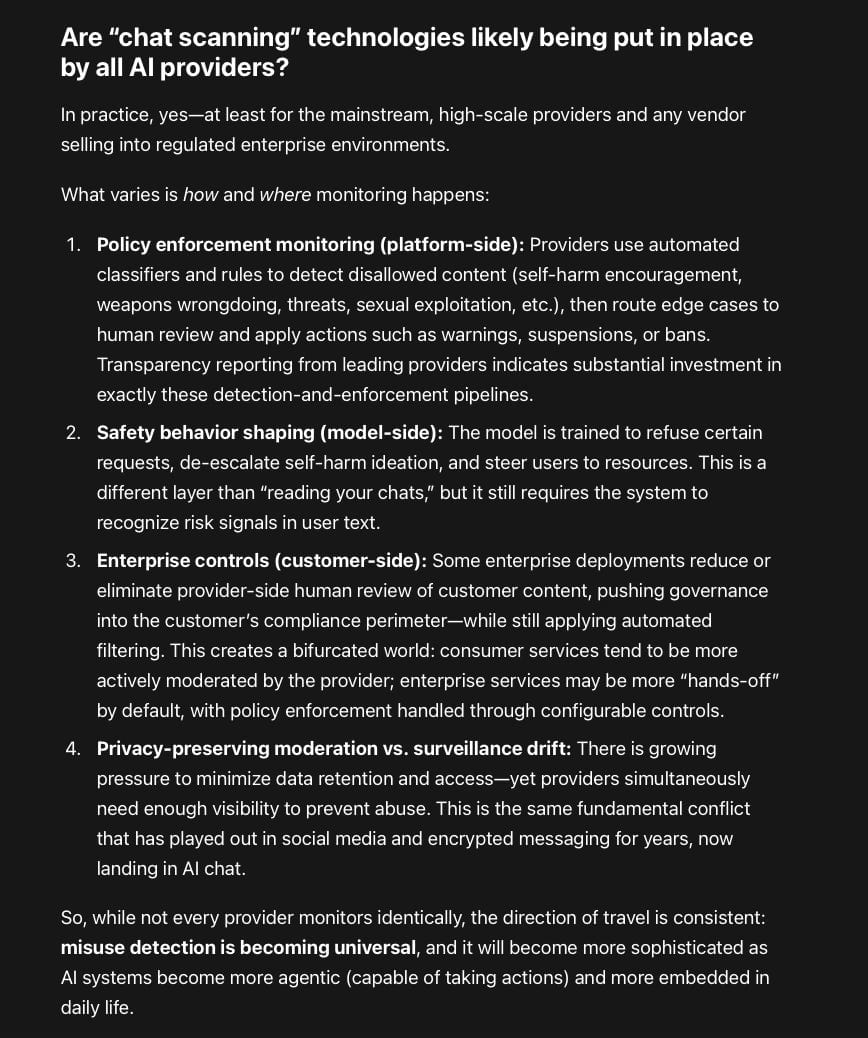

Are “chat scanning” technologies likely being put in place by all AI providers?

In practice, yes—at least for the mainstream, high-scale providers and any vendor selling into regulated enterprise environments.

What varies is how and where monitoring happens:

Policy enforcement monitoring (platform-side): Providers use automated classifiers and rules to detect disallowed content (self-harm encouragement, weapons-related wrongdoing, threats, sexual exploitation, etc.), then route edge cases to human review and apply actions such as warnings, suspensions, or bans. Transparency reporting from leading providers indicates substantial investment in exactly these detection-and-enforcement pipelines.

Safety behavior shaping (model-side): The model is trained to refuse certain requests, de-escalate self-harm ideation, and steer users to resources. This is a different layer than “reading your chats,” but it still requires the system to recognize risk signals in user text.

Enterprise controls (customer-side): Some enterprise deployments reduce or eliminate provider-side human review of customer content, pushing governance into the customer’s compliance perimeter—while still applying automated filtering. This creates a bifurcated world: consumer services tend to be more actively moderated by the provider; enterprise services may be more “hands-off” by default, with policy enforcement handled through configurable controls.

Privacy-preserving moderation vs. surveillance drift: There is growing pressure to minimize data retention and access—yet providers simultaneously need enough visibility to prevent abuse. This is the same fundamental conflict that has played out in social media and encrypted messaging for years, now landing in AI chat.

So, while not every provider monitors identically, the direction of travel is consistent: misuse detection is becoming universal, and it will become more sophisticated as AI systems become more agentic (capable of taking actions) and more embedded in daily life.
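Here is a minimal Python sketch that makes the platform-side pipeline concrete. It maps an automated classifier score to one of four outcomes: allow, warn, human review, or suspend. A deployment flag stands in for the consumer/enterprise split. Every piece of it is hypothetical, including the `route` function, the `Deployment` flag, and the threshold values. Real providers do not publish their pipelines at this level of detail, and theirs will differ.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    HUMAN_REVIEW = "human_review"
    SUSPEND = "suspend"


@dataclass(frozen=True)
class Deployment:
    # Hypothetical switch: enterprise tenants may turn off provider-side human review.
    provider_human_review: bool


# Hypothetical thresholds on a classifier's misuse score in [0, 1].
WARN_AT = 0.5
REVIEW_AT = 0.8
SUSPEND_AT = 0.97


def route(score: float, deployment: Deployment) -> Action:
    """Map one automated misuse score to an enforcement action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    if score >= SUSPEND_AT:
        # Clear-cut violations: automated account action.
        return Action.SUSPEND
    if score >= REVIEW_AT:
        # Edge cases go to trained reviewers, but only where the deployment
        # permits provider-side review; otherwise automated filtering applies.
        if deployment.provider_human_review:
            return Action.HUMAN_REVIEW
        return Action.WARN
    if score >= WARN_AT:
        return Action.WARN
    return Action.ALLOW
```

With these made-up thresholds, a score of 0.85 goes to human review in a consumer deployment. The same score only produces a warning where an enterprise customer has disabled provider review.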

If AI providers scan for risk, should they be responsible for follow-up actions?

They should be responsible for a defined set of follow-up actions—but not in the simplistic sense of “if flagged, call the police.” The right approach is closer to aviation safety than content moderation: you need clear thresholds, layered interventions, auditability, and strong guardrails against overreach.

Here’s the key distinction:

Duty of care (yes): If a provider builds a system that routinely encounters credible signs of imminent harm (self-harm, violent threats, ongoing child exploitation), it cannot pretend it is “just a tool.” At minimum, it should intervene inside the product and reduce the probability of harm (refusal, de-escalation, crisis routing, rate limits, session lockouts, human review).

Duty to report (narrow, conditional): Reporting to law enforcement or third parties should be exceptional. It should be reserved for imminent and credible threats where the provider has enough identifying information to make a report meaningful and where reporting is likely to prevent harm rather than create it.

If you impose a broad duty to report, you risk building a mass automated informant system with predictable failure modes:

False positives become civil-rights harms. Teenagers venting, people roleplaying, survivors describing trauma, or users with intrusive thoughts could be escalated incorrectly.

Chilling effects move people away from seeking help. If users believe “the bot will call the police,” they will self-censor precisely when early intervention could help.

Adversarial adaptation. Determined attackers will route around monitored channels, while ordinary users bear the surveillance costs.

Jurisdictional chaos. “Imminent threat” standards and privacy laws differ across countries; a universal escalation practice can violate local law or norms.
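The false-positive problem is largely a base-rate problem, and a few lines of arithmetic show why. The sketch below uses Bayes' rule to estimate what share of flags would point at genuine threats. The inputs are illustrative assumptions, not measurements from any provider. Suppose 1 in 100,000 users poses a real threat, and a classifier catches 99% of them while wrongly flagging 1% of everyone else.

```python
def flag_precision(base_rate: float, sensitivity: float, specificity: float) -> float:
    """Share of flags that are true positives, via Bayes' rule."""
    true_pos = base_rate * sensitivity               # real threats that get flagged
    false_pos = (1 - base_rate) * (1 - specificity)  # harmless users flagged anyway
    return true_pos / (true_pos + false_pos)


def expected_flags(
    users: int, base_rate: float, sensitivity: float, specificity: float
) -> tuple:
    """Expected (true, false) flag counts across a user population."""
    true_flags = users * base_rate * sensitivity
    false_flags = users * (1 - base_rate) * (1 - specificity)
    return true_flags, false_flags


# Illustrative assumptions only, not measured figures.
precision = flag_precision(base_rate=1e-5, sensitivity=0.99, specificity=0.99)
true_flags, false_flags = expected_flags(10_000_000, 1e-5, 0.99, 0.99)
print(f"precision ~ {precision:.4f}; ~{true_flags:.0f} true vs ~{false_flags:.0f} false flags")
```

With those assumed numbers, precision comes out at roughly 0.001, so about one flag in a thousand would be genuine. A 10-million-user service would see about 99 true flags alongside roughly 100,000 false ones. Under those assumptions, a "flag, then report" rule would mostly report the wrong people.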

A workable compromise is a tiered responsibility model:

Tier 1: Product-level safety interventions (always required)

Self-harm: empathetic interruption + crisis resources localized by country; option to connect to live help lines where partnerships exist.

Threats/violence: refusal + de-escalation + friction (cooldowns, “are you in immediate danger?” checks).

Sexual exploitation/CSAM: immediate refusal + internal escalation + account action.

Tier 2: Human review + account enforcement (required for high-risk categories)

Providers should maintain trained trust-and-safety review capacity, with consistent playbooks and quality assurance.

Tier 3: External escalation (rare; tightly governed)

Trigger only when a case meets a high bar: credible, imminent, specific, and actionable.

Use structured reporting channels (where they exist), log justification, and require dual-approval (“two-person rule”) to reduce unilateral errors.

Where possible, prefer health/crisis escalation over police escalation for self-harm, unless there is an immediate danger to others or clear necessity.
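The Tier 3 gate can be expressed as a small decision function. This Python sketch is one possible reading of the rules above, not any provider's actual process. All four criteria must hold, at least two distinct reviewers must sign off, and a justification must be recorded. Self-harm cases route to a crisis service unless others are in immediate danger. The `Assessment` and `Decision` types and the channel names are hypothetical. The "clear necessity" override mentioned in the text is left out for brevity.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Assessment:
    # Hypothetical reviewer judgments; the first four form the Tier 3 bar.
    credible: bool
    imminent: bool
    specific: bool
    actionable: bool      # e.g. enough identifying information for a meaningful report
    self_harm_only: bool  # risk is to the user, with no immediate danger to others


@dataclass(frozen=True)
class Decision:
    escalate: bool
    channel: Optional[str]
    justification: str
    approvers: tuple[str, ...]
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def decide_external_escalation(
    case: Assessment, approvers: set[str], justification: str
) -> Decision:
    signers = tuple(sorted(approvers))  # a set, so a duplicated name counts once
    if not (case.credible and case.imminent and case.specific and case.actionable):
        return Decision(False, None, "below Tier 3 bar: stay in Tiers 1-2", signers)
    if len(signers) < 2:
        # Two-person rule: one reviewer alone cannot trigger an external report.
        return Decision(False, None, "awaiting a second approver", signers)
    if not justification.strip():
        raise ValueError("external escalation requires a logged justification")
    # Prefer crisis services for self-harm unless others are in immediate danger.
    channel = "crisis_service" if case.self_harm_only else "law_enforcement"
    return Decision(True, channel, justification, signers)
```

Because `approvers` is a set, one reviewer listed twice still counts as a single signer. Under this sketch, a single person cannot satisfy the two-person rule on their own.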

Should providers contact help organizations like Samaritans?

For self-harm crises, “contact a helpline” is often more appropriate than law enforcement—but the same consent and privacy issues apply.

A sane principle is:

Default: empower the user to seek help, by surfacing localized resources, encouraging reaching out to trusted people, and offering steps for immediate safety.

Escalate without consent only when the risk is immediate and severe, and only where the provider can do so responsibly (reliable identity, local protocols, trained responders). Otherwise, you risk well-intentioned escalation that becomes coercive, wrong, or dangerous—especially for marginalized users or in countries where state intervention can itself be harmful.
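The same principle can be sketched as a gate that defaults to empowering the user. In this hypothetical Python example, escalation without consent requires two things. The risk must be immediate and severe, and all three local capabilities the text names must be in place: reliable identity, local protocols, and trained responders. A helpline connection the user agrees to needs only a partnership in that jurisdiction. The `LocalCapability` fields and the `self_harm_response` function are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Response(Enum):
    SURFACE_RESOURCES = "surface localized resources and immediate safety steps"
    CONNECT_WITH_CONSENT = "offer a live helpline connection the user accepts"
    ESCALATE_WITHOUT_CONSENT = "contact trained responders without user consent"


@dataclass(frozen=True)
class LocalCapability:
    # Hypothetical per-jurisdiction readiness flags.
    helpline_partner: bool
    reliable_identity: bool
    local_protocol: bool
    trained_responders: bool


def self_harm_response(
    immediate: bool, severe: bool, user_consents: bool, cap: LocalCapability
) -> Response:
    can_act_responsibly = (
        cap.reliable_identity and cap.local_protocol and cap.trained_responders
    )
    if immediate and severe and can_act_responsibly:
        return Response.ESCALATE_WITHOUT_CONSENT
    if user_consents and cap.helpline_partner:
        return Response.CONNECT_WITH_CONSENT
    # Default: empower the user instead of acting on their behalf.
    return Response.SURFACE_RESOURCES
```

Note the ordering in this sketch. A missing capability, such as unreliable identity, downgrades even a severe case to the consent-based paths rather than forcing an intervention the provider cannot carry out responsibly.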

If regulators want a “duty to intervene,” they should avoid pushing providers into amateur crisis-response roles. Providers are not hospitals, and most do not have the evidentiary certainty, identity data, or jurisdictional competence to safely initiate real-world interventions at scale.

ChatGPT’s perspective: make responsibility real—but bounded, auditable, and privacy-respecting

The TechCrunch case shows the nightmare scenario: the platform detects something disturbing, humans feel the moral weight, but the organization hesitates because escalation standards are unclear and consequences of over-reporting are severe. That is not a failure of “ethics”; it is a failure of governance design.

The goal should not be to make AI companies omniscient guardians. It should be to make them:

Competent at reducing harm within the system, and

Accountable for consistent escalation decisions when risk is extreme.

Recommendations for AI makers

Publish a clear escalation standard (what “imminent and credible” means, what evidence qualifies, what gets logged, what oversight exists).

Build layered interventions before escalation (friction, refusal, de-escalation, crisis resources, session controls).

Use privacy-preserving safety architecture (data minimization, limited retention, compartmented access, strong internal audit trails).

Create “safety red-team” feedback loops (measure false positives/negatives and publish aggregate metrics).

Separate consumer vs enterprise pathways transparently (so customers understand when human review is enabled/disabled and what that implies).

Formalize third-party partnerships carefully (helplines, crisis orgs, NGOs) with jurisdictional coverage, consent flows, and safeguards.

Train reviewers for context and vulnerability (not just policy compliance), because the hardest cases are ambiguous and human stakes are high.
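"Strong internal audit trails" can mean many things. One common technique is a hash-chained, append-only log, where each entry includes the hash of the previous entry, so editing an old record breaks every link after it. The Python sketch below uses only `hashlib` and `json` from the standard library. It illustrates that technique under assumed field names (`actor`, `action`, `case_id`, `reason`). It does not describe how any AI provider actually logs decisions.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Append-only log; each entry commits to the hash of the one before it."""

    entries: list = field(default_factory=list)

    def append(self, actor: str, action: str, case_id: str, reason: str) -> str:
        # Record a case ID rather than chat text, in line with data minimization.
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"actor": actor, "action": action, "case_id": case_id,
                "reason": reason, "prev": prev}
        entry_hash = _digest(body)
        self.entries.append({**body, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; False means an entry was altered or a link is broken."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body.get("prev") != prev or _digest(body) != entry.get("hash"):
                return False
            prev = entry["hash"]
        return True
```

A chain like this is only tamper-evident. Anyone with write access could recompute every hash from scratch, so a real deployment would need to anchor the latest hash somewhere the operator cannot quietly edit.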

Recommendations for regulators

Define a legal safe harbor for good-faith interventions paired with strict requirements: documentation, proportionality, and oversight. Without safe harbor, companies will oscillate between over-reporting (to avoid blame) and under-reporting (to avoid liability).

Require transparency reporting on safety operations (flag rates, human-review volumes, enforcement actions, escalation counts, error rates).

Set minimum standards for crisis handling (localized resources, tested self-harm flows, and auditing), without mandating blanket reporting.

Clarify cross-border data and escalation rules so providers don’t improvise in the worst moment.

Treat “agentic capability” as a step-change: if systems can take actions, the bar for monitoring, approvals, and user consent must rise accordingly.

Audit for discrimination and chilling effects: ensure escalation systems do not disproportionately target vulnerable groups or suppress legitimate expression.
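Most of the transparency metrics listed above can be computed from per-flag records. This Python sketch assumes a hypothetical `FlagRecord` schema. It shows one way to aggregate flag rates, review volumes, enforcement counts, escalation counts, and an error rate.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FlagRecord:
    # One automated flag and what happened to it (hypothetical schema).
    category: str
    human_reviewed: bool
    upheld: Optional[bool]       # reviewer verdict; None if never reviewed
    enforcement: Optional[str]   # e.g. "warning", "suspension", or None
    escalated_externally: bool


def transparency_report(records: list[FlagRecord], total_conversations: int) -> dict:
    reviewed = [r for r in records if r.human_reviewed]
    overturned = sum(1 for r in reviewed if r.upheld is False)
    return {
        "flag_rate": len(records) / total_conversations,
        "human_review_volume": len(reviewed),
        "enforcement_actions": dict(Counter(r.enforcement for r in records if r.enforcement)),
        "escalation_count": sum(r.escalated_externally for r in records),
        # Share of reviewed flags that reviewers overturned (false positives).
        "reviewed_overturn_rate": overturned / len(reviewed) if reviewed else None,
    }
```

The overturn rate only measures false positives among reviewed flags. False negatives, meaning threats that were never flagged, cannot be counted from flag records at all. That gap is one reason the red-team feedback loops recommended for AI makers matter.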

The uncomfortable truth is that AI chat is becoming a new kind of societal sensor—one that is commercially operated, globally scaled, and only partially legible to outsiders. The choice isn’t “scan or don’t scan.” The choice is whether scanning evolves into accountable safety infrastructure with hard limits—or drifts into unaccountable surveillance theatre that fails at prevention and harms trust.