- Pascal's Chatbot Q&As

The conversion of the world into a quantitative operational space through data-gathering is described as a process that converts the world into property to be ruled over.

As such, both economic and cognitive power are involved in the current wave of AI blocking, as platforms seek to retain the value of the knowledge and interactions generated within their boundaries.

The Great Enclosure of the Intelligence Age: Platform Protectionism and the Crisis of AI Permission

by Gemini 3.0, Deep Research. Warning, LLMs may hallucinate!

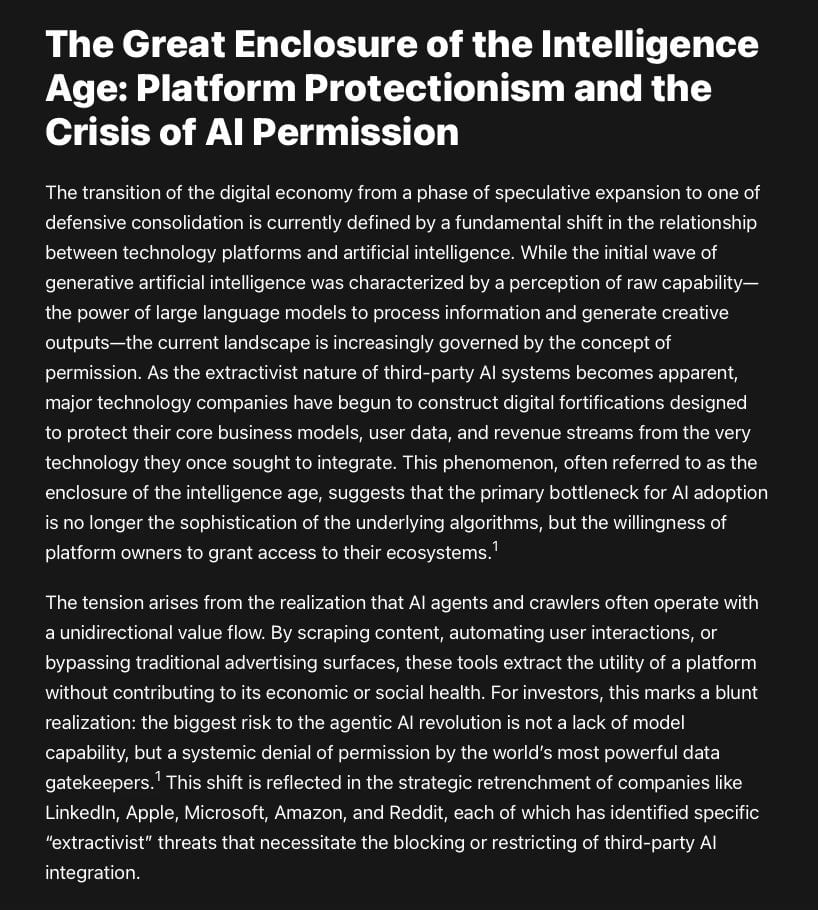

The transition of the digital economy from a phase of speculative expansion to one of defensive consolidation is currently defined by a fundamental shift in the relationship between technology platforms and artificial intelligence. While the initial wave of generative artificial intelligence was characterized by a perception of raw capability—the power of large language models to process information and generate creative outputs—the current landscape is increasingly governed by the concept of permission. As the extractivist nature of third-party AI systems becomes apparent, major technology companies have begun to construct digital fortifications designed to protect their core business models, user data, and revenue streams from the very technology they once sought to integrate. This phenomenon, often referred to as the enclosure of the intelligence age, suggests that the primary bottleneck for AI adoption is no longer the sophistication of the underlying algorithms, but the willingness of platform owners to grant access to their ecosystems.1

The tension arises from the realization that AI agents and crawlers often operate with a unidirectional value flow. By scraping content, automating user interactions, or bypassing traditional advertising surfaces, these tools extract the utility of a platform without contributing to its economic or social health. For investors, this marks a blunt realization: the biggest risk to the agentic AI revolution is not a lack of model capability, but a systemic denial of permission by the world’s most powerful data gatekeepers.1 This shift is reflected in the strategic retrenchment of companies like LinkedIn, Apple, Microsoft, Amazon, and Reddit, each of which has identified specific “extractivist” threats that necessitate the blocking or restricting of third-party AI integration.

The LinkedIn Defensive Architecture: Authenticity as a Protected Resource

LinkedIn is a sophisticated example of a closed ecosystem fighting a war of attrition against automated AI agents. Because the platform is predicated on professional trust and human-in-the-loop networking, AI-driven outreach tools pose an existential threat to its social graph. LinkedIn’s defensive strategy is built on the principle that automation, particularly when driven by external AI agents, degrades the authenticity that makes the platform valuable to recruiters and advertisers.2 The platform uses a multi-layered detection system designed to identify and penalize any behavior that deviates from the rhythm of a human user, employing browser fingerprinting, behavioral biometrics, and a point-based penalty system to protect its professional ecosystem.4

The extractivist nature of AI on LinkedIn is found in the way these tools attempt to commodify professional relationships through high-volume, low-quality messaging that bypasses the platform’s intended engagement mechanics. When an AI agent connects to LinkedIn from a device that does not mirror the user’s typical environment—such as a cloud server or an unusual IP address—it triggers immediate red flags.2 LinkedIn’s algorithms are trained to spot anything that looks like a bot, focusing on browser extensions, cookie-sharing, and unnatural behavior patterns that are too fast or repetitive for a human to execute.2

Bot Detection Strategies and Behavioral Biometrics

LinkedIn’s machine learning security filters out the noise of low-quality outreach by monitoring the technical fingerprint of every login. This includes analyzing hardware specifications, GPU traces, and installed fonts to create a persistent digital ID for each user.4 This level of scrutiny allows LinkedIn to enforce its one user, one account policy with extreme precision. If the platform identifies a shared hardware DNA between multiple profiles, it can link them instantly and flag them for suspicious activity, a move designed to prevent the scaling of bot-driven networks.4
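The account-linking idea described above can be illustrated with a minimal sketch. It is not LinkedIn's actual method, which is not public; the attribute names (`gpu`, `fonts`, `cores`) and the helper functions `fingerprint` and `linked_accounts` are hypothetical, chosen only to show how a hash of device attributes could tie several profiles to one machine.

```python
import hashlib
from collections import defaultdict


def fingerprint(attrs: dict) -> str:
    """Hash a device's attributes into a stable identifier (hypothetical scheme)."""
    # Sort the keys so the same attributes always produce the same digest,
    # regardless of the order in which they were collected.
    canonical = "|".join(f"{key}={attrs[key]}" for key in sorted(attrs))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def linked_accounts(logins):
    """Return groups of account IDs that share one device fingerprint."""
    by_device = defaultdict(set)
    for account_id, attrs in logins:
        by_device[fingerprint(attrs)].add(account_id)
    # Only devices seen with more than one account are suspicious.
    return [sorted(accounts) for accounts in by_device.values() if len(accounts) > 1]


# Illustrative login records: (account ID, device attributes).
logins = [
    ("alice", {"gpu": "GPU-A", "fonts": "Arial,Helvetica", "cores": 8}),
    ("bot-1", {"gpu": "GPU-B", "fonts": "DejaVu", "cores": 2}),
    ("bot-2", {"cores": 2, "fonts": "DejaVu", "gpu": "GPU-B"}),
]

print(linked_accounts(logins))  # [['bot-1', 'bot-2']]
```

A production system would presumably tolerate small differences between fingerprints rather than require an exact hash match; the exact match here keeps the sketch short.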

The consequences for users attempting to deploy AI agents on LinkedIn are severe. Profile bans often result in the permanent loss of years of networking and credibility, a risk that many B2B outreach professionals are only beginning to grasp.2 LinkedIn’s refusal to grant permission to third-party automation tools is a direct response to the noise created by AI, which threatens to drown out the signal of genuine professional interaction. Furthermore, LinkedIn has inverted this extractivist dynamic by making user data available for its own AI training by default, while simultaneously blocking others from doing the same—a move that underscores the platform’s intent to maintain a monopoly on its data moat.5

Apple and the Enclosure of the App Development Ecosystem

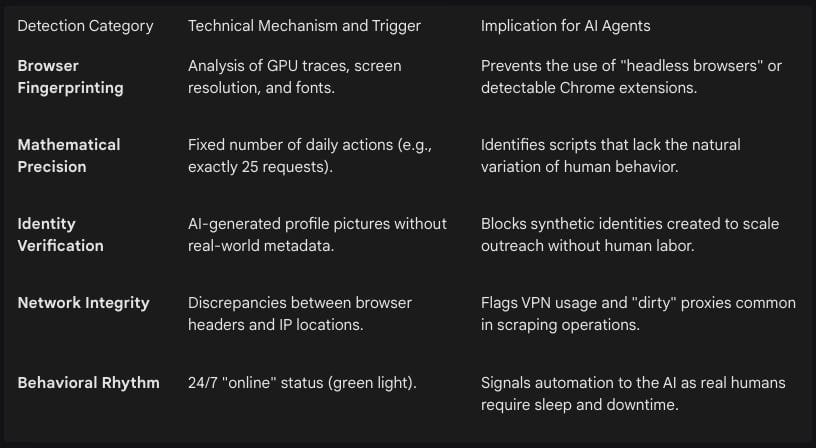

Apple’s approach to blocking AI is driven by a different, though equally extractivist, concern: the preservation of the App Store’s economic model and the integrity of the iOS ecosystem. The emergence of vibe coding—a phenomenon where natural language descriptions are translated by AI into functional software—threatens Apple’s control over how apps are built, reviewed, and monetized.6 By blocking updates to platforms like Replit and Vibe Code, Apple is enforcing a strict interpretation of its self-contained app guidelines. These guidelines require that an app’s core features and functionalities be present at the time of review and prohibit the execution of code that modifies the app after installation.8

The extractivist threat here is multifaceted. First, vibe-coded apps can potentially bypass the App Store Review process by generating new functionality on the fly, which Apple views as a security risk and an evasion of its quality controls.6 Second, these tools often encourage the creation of web-based applications or mini-apps that completely circumvent Apple’s 15-30% commission on in-app transactions.6 By ensuring that all app development remains within a framework where it can collect its commission, Apple protects its revenue streams from being extracted by third-party AI builders that would otherwise create a decentralized app economy.

Strategic Rationale for Apple’s AI Restrictions

Apple’s resistance to third-party AI app builders is a calculated effort to protect its revenue streams while preparing its own proprietary AI tools. Critics argue that Apple is selectively enforcing rules to suppress third-party competitors while promoting its own integrated AI tools, thereby creating an uneven playing field.6 Apple is currently integrating similar AI features into its proprietary development environment, Xcode, using models from providers like OpenAI and Anthropic. This move effectively closes the door on independent platforms while ensuring that AI-driven development remains a feature of the Apple ecosystem rather than an external disruption.6

Apple frames these restrictions as necessary to preserve a secure user experience and protect platform integrity. By maintaining tight control over how apps are built and updated, Apple protects its “walled garden” ecosystem.6 However, developers argue this hinders innovation and democratized access to software development. The tension between Apple’s ecosystem control and the growing demand for accessible development methods illustrates a broader pattern where the company prioritizes revenue and control over fostering a more open and innovative ecosystem.6

The Data Moat Strategy: Reddit, Stack Overflow, and the Death of the Free API

The extractivist nature of AI training has forced a radical re-evaluation of public data. Platforms like Reddit and Stack Overflow, which for over a decade functioned as open repositories of human knowledge, have transitioned to a Knowledge-as-a-Service model. The core realization for these companies is that their data—the billions of person-to-person conversations and peer-reviewed code samples—is the fundamental engine of reasoning and emergent abilities for large language models.1

In 2023 and 2024, both Reddit and Stack Overflow ended the era of free API access for large-scale AI developers. Reddit’s leadership explicitly stated that while they value the Reddit corpus, they have a problem with large companies crawling Reddit, generating value, and not returning any of that value to the platform or its users.13 This has led to high-stakes negotiations, such as Reddit’s $60 million per year deal with Google, which grants the search giant real-time access to Reddit data while potentially blocking competitors from achieving similar model performance.14

Stack Overflow’s Evolution Toward Managed Licensing

Stack Overflow has adopted a strategy of intentional evolution, seeking to bridge the trust gap in AI by positioning its data as a verified, high-quality source for LLMs. The company’s CEO, Prashanth Chandrasekar, has been vocal about the need for compensation, arguing that community platforms that fuel LLMs should be compensated so they can reinvest back into their communities.16 Stack Overflow’s framework around socially responsible AI involves a commitment from product partners to provide attribution to the subject matter experts who contributed the content.12

- Direct Licensing: Through Knowledge Solutions, Stack Overflow provides API access to over 50 billion tokens of data for a fee, targeting enterprise AI developers.17

- Attribution Frameworks: The company demands that AI partners provide clear attribution to the human experts who originally contributed the knowledge, fighting back against the anonymization of creative labor.12

- Community Reinvestment: Revenue from AI licensing is framed as a means to sustain the community of human moderators and curators whose work is currently being mined by bots.16

The data moat is now the primary competitive advantage in the AI sector. As models become more commodified, the quality and exclusivity of the training data become the only durable differentiators. This has led to a downward spiral for third-party developers who rely on open APIs, as platforms increasingly prioritize walled garden agreements with a few select AI giants.18 The move to paid models for API access is a clear signal that the era of the open web as a free training ground for AI is coming to an end.

Agentic Commerce and the Advertising Moat: The Amazon Defensive Posture

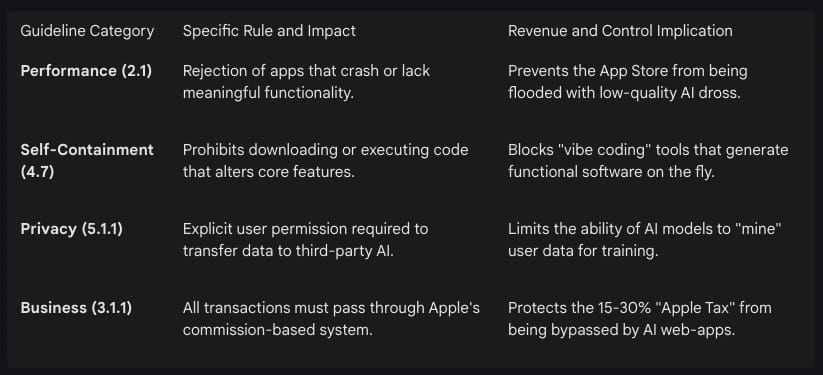

Amazon’s recent actions against AI shopping agents, specifically those from Perplexity, Google, and Meta, reveal a conflict between AI-driven utility and the advertising-driven internet. In late 2025 and early 2026, Amazon intensified its efforts to block external bots from scraping product listings and automating purchases.19 The extractivist threat in e-commerce is uniquely damaging to Amazon’s business model. Amazon earns over $56 billion annually from advertising. When a human shopper browses Amazon, they are exposed to sponsored products and targeted ads. An AI agent, such as Perplexity’s Comet browser assistant, extracts only the necessary product data and executes the purchase without human eyes ever seeing an advertisement.19

This represents a decoupled shopping experience that threatens to turn Amazon into a back-end fulfillment center while stripping away its high-margin advertising revenue. Amazon has sent cease-and-desist letters to AI startups like Perplexity, asking them to refrain from using agents to make purchases, a move Perplexity has called bullying.21 Amazon argued that agents taking the place of human shoppers would degrade the customer experience, and it explicitly prohibits any use of data mining, robots, or similar data gathering and extraction tools.21

Amazon’s Defensive Measures Against AI Agents

Amazon’s response has been both technical and legal. By adding major tech firms to its robots.txt blacklist and issuing cease-and-desist letters, Amazon is signaling that it will only permit AI-powered shopping if it owns the agent.19 Amazon’s own Rufus assistant is designed to keep the user within the Amazon ecosystem, ensuring that the AI’s suggestions align with Amazon’s profitability goals.21

Industry analysts point out that Amazon’s restriction of external bots underscores growing tension between data ownership, innovation, and competitive differentiation in digital commerce. Amazon aims to shield its marketplace from innovations that could disrupt its control over shopper experience and ad revenue.19 This decision reflects a broader strategy to keep AI-powered shopping experiences in-house, ensuring that the benefits of AI, such as improved efficiency and personalization, are channeled through its own technology.22

Microsoft’s Strategic Retrenchment: Scaling Back AI Bloat and Permission Risks

Even Microsoft, a primary architect of the current AI boom, has begun to dial back its AI integration in response to user feedback and operational risks. Throughout 2025, Windows 11 users complained about AI bloat: the shoehorning of Copilot into every corner of the operating system, from the Snipping Tool to Notepad.24 Microsoft’s leadership has acknowledged that AI must be genuinely useful and well-crafted rather than intrusive. The company is now focusing on reducing unnecessary Copilot entry points across those apps and improving the baseline memory footprint of Windows to compete with more efficient hardware.24 This pullback represents a maturation of the technology.

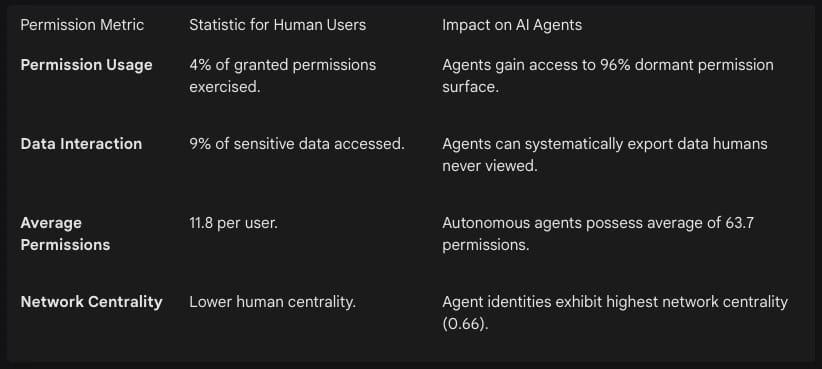

Beyond user experience, Microsoft is grappling with a profound security crisis related to permission. Research into Microsoft 365 Copilot and other agentic systems has revealed that AI agents often inherit the permissions of the human users they represent. This is problematic because human users typically have access to far more data than they actually use.25 Analysis of 3.6 billion permissions across enterprise environments shows a massive gap between the access users are granted and the access they actually exercise.

The ability of AI agents to operate with increased autonomy introduces significant risks, such as unintended goal pursuit and unauthorized privilege escalation.27 While humans move slowly enough that the gap between granted access and actual usage is tolerable, agents remove that constraint. The dormant 96% of permissions that employees never exercise can be systematically executed by agents at machine speed, turning latent configuration problems into active security incidents.26
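The gap between granted access and actual usage can be made concrete with a small sketch. The permission strings and the helper names `dormant_permissions` and `agent_scope` are hypothetical, and the numbers are illustrative rather than drawn from the cited report; the point is only that an agent scoped to exercised permissions cannot touch the dormant ones.

```python
def dormant_permissions(granted: set, exercised: set) -> set:
    """Permissions a user holds but has never used."""
    return granted - exercised


def agent_scope(granted: set, exercised: set) -> set:
    """A least-privilege scope: only what the user both holds and actually uses."""
    return granted & exercised


# Illustrative data for one employee (hypothetical permission names).
granted = {"crm:read", "crm:export", "hr:read", "finance:read", "sharepoint:write"}
exercised = {"crm:read"}

dormant = dormant_permissions(granted, exercised)
ratio = len(dormant) / len(granted)

print(sorted(dormant))
print(f"dormant share: {ratio:.0%}")          # dormant share: 80%
print(sorted(agent_scope(granted, exercised)))  # ['crm:read']
```

An agent that simply inherits `granted` can act on every dormant entry at machine speed, whereas one limited to `agent_scope(...)` cannot.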

The security implications are significant. Unauthorized agents can export data from SharePoint or OneDrive, run RPA workflows without oversight, or process sensitive information without compliance controls.29 Microsoft’s research found that 29% of agents in surveyed organizations operate without approval from IT or security teams, leading to the concern that ungoverned AI agents could become corporate double agents.30 To mitigate this risk, administrators are urged to enforce Conditional Access policies, requiring MFA or device compliance for generative AI services.25

The News Industry’s Defense: Robots.txt and the Rise of Stealth Crawling

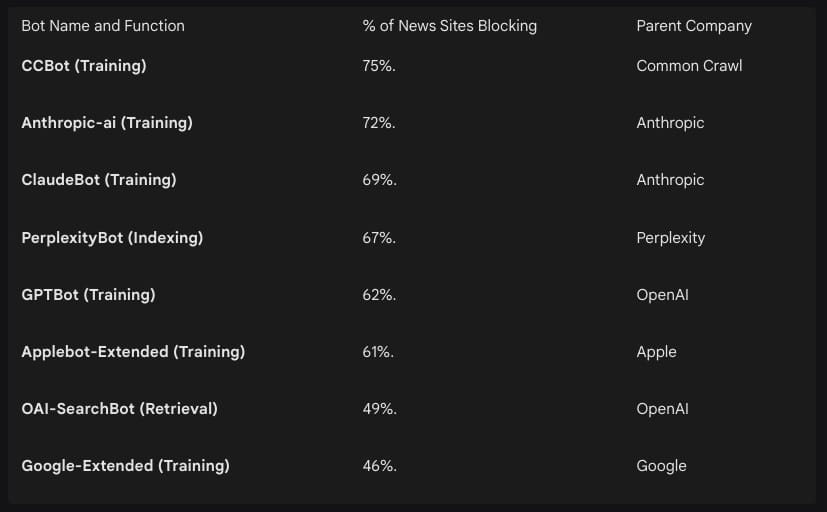

While nearly 80% of major news publishers now block AI training bots via robots.txt, there is a significant enforcement gap that platforms are struggling to close. robots.txt is a directive, not a barrier; it functions more like a “please keep out” sign than a locked door.31 Research indicates that companies like Perplexity have engaged in stealth crawling behavior to bypass these restrictions, rotating IP addresses, changing ASNs, and spoofing user agents to appear as a standard browser.32
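Why robots.txt is a directive rather than a barrier can be shown with Python's standard-library parser. The robots.txt content and the `example.com` URL below are illustrative assumptions; `GPTBot` is used only as an example of a crawler token a publisher might disallow. The file is consulted voluntarily by the client, so a crawler that announces a different user agent is not matched by the rule at all.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt: block one AI crawler, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/news/story"

# A well-behaved crawler identifies itself and honours the answer.
honest = parser.can_fetch("GPTBot", url)

# The same crawler presenting a browser-like user agent is not covered by the rule.
spoofed = parser.can_fetch("Mozilla/5.0", url)

print(honest)   # False
print(spoofed)  # True
```

Nothing in this check runs on the publisher's server: compliance depends entirely on the crawler choosing to ask and to obey.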

Publishers are blocking AI bots primarily because there is almost no value exchange. LLMs are not designed to send referral traffic, and publishers still need traffic to survive.31 By blocking training bots, sites prevent their content from being used to build the models that may eventually replace them. However, a growing number of publishers are also blocking retrieval bots, which fetch content in real-time when users ask questions. This means publishers are opting out of the citation and discovery layer that AI search tools use to surface sources.31

Statistics on News Publisher Blocking and the Enforcement Gap

A study of 100 top news sites in the US and UK found that 79% block at least one bot used for training AI, and 71% block at least one retrieval or live search bot.31 This protectionist stance reflects a fear that search engine referrals will fall by as much as 43% over the next three years due to AI summaries and chatbots.33

For publishers serious about blocking AI crawlers, CDN-level blocking or bot fingerprinting may be necessary beyond robots.txt directives.32 Cloudflare has documented that some bots use sophisticated methods to bypass restrictions, leading the company to actively block certain verified bots that engage in deceptive behavior.32 This illustrates a broader trend where the “permission” to crawl is being replaced by technical warfare between publishers and AI startups.

Theoretical Frameworks of Digital Extractivism and Data Colonialism

The term extractivist in the context of AI is not merely a business metaphor but an established theoretical framework that draws parallels between the tech industry and historical colonial resource extraction. Critics argue that AI development is reproducing colonial extractivism by harvesting Indigenous linguistic, biometric, geospatial, and ecological data without consent, compensation, or accountability.34 This framework suggests that the current AI paradigm is immaterial in name only, as it relies on the depletion of natural resources, energy, and human intellectual labor.36

The extractivist capitalism ethos of the AI Empire carries a significant ecological and social toll. Scholars note that the development and infrastructural needs of generative AI expansion contribute to technological oligarchies and rising authoritarianism.38 Training one large Natural Language Processing transformer model generates nearly five times the lifetime carbon dioxide emissions of an average car.37 This environmental cost is part of a larger “AI Tax” where the benefits of productivity are concentrated in technological hubs, while the costs are socialized.1

- Linguistic Appropriation: AI systems like OpenAI’s Whisper have been accused of data colonialism for harvesting Indigenous languages, such as Māori, without prior consent or benefit-sharing.34

- Knowledge Extraction: The conversion of beneficiary populations into machine-readable data enables profiling and automated decisions that often target the poor and working-class people, reproducing historical injustices.39

- Creative Labor: The extraction of intellectual labor from artistic communities is seen as a new frontier of resource-making, where creative work is folded into a data corpus without acknowledgment, undermining digital sovereignty.41

This extractivist logic is what platforms are increasingly resisting. By blocking bots, companies are attempting to prevent the depletion of their data resources. The conversion of the world into a quantitative operational space through data-gathering is described as a process that converts the world into property to be ruled over.36 As such, both economic and cognitive power are involved in the current wave of AI blocking, as platforms seek to retain the value of the knowledge and interactions generated within their boundaries.

The Future of Permissioned Intelligence and the Mature AI Lifecycle

The wave of blocking currently sweeping through the tech industry represents the maturation of the AI sector. By denying permission to third-party extractivists, companies are defining the boundaries of a new permissioned intelligence economy. In this environment, the data moat is protected not just by copyright law, but by sophisticated technical barriers that prioritize human-in-the-loop engagement and first-party ecosystem control. The big risk to agentic AI isn’t model capability; it is the permission to act within these protected spaces.1

The adoption of AI is not collapsing; it is evolving toward a more structured, regulated, and expensive phase. For investors, the takeaway is that the walled garden strategy of the 2010s is being adapted for the 2020s. Companies that will thrive are those that successfully navigate the permission crisis—either by owning the data themselves (the Apple and Amazon model) or by creating socially responsible licensing frameworks that incentivize reinvestment in the human communities that provide the raw materials for intelligence (the Stack Overflow and Reddit model). As the extractivist nature of AI is curtailed by these enclosures, the industry must transition from a model of unauthorized mining to one of negotiated partnership, where permission is the ultimate currency of progress.1

The emergence of agentic AI specifically demands a move toward human-defined governance logic. Providers are implementing role-based execution and permission systems that define what agents can do and when they must escalate to a human.43 This ensures that autonomy is granted deliberately, not by default. As organizations scale AI beyond pilot projects, observability and reliability become the crucial intelligence layers that help build trust. The path forward for AI involves a 50/50 human–AI collaboration, where human judgment sets the goals, defines the boundaries, and ensures accountability.42 This transition from raw capability to governed permission marks the end of the extractivist era and the beginning of the sustainable intelligence age.
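The role-based execution and escalation model mentioned above can be sketched in a few lines. This is not any provider's actual implementation; the `Role` type, the `authorize` function, and the action names are hypothetical. It shows one way to make autonomy deliberate: every action is denied unless a role explicitly allows it, and sensitive actions are routed to a human.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"        # the agent may proceed on its own
    ESCALATE = "escalate"  # a human must approve first
    DENY = "deny"          # not permitted for this role


@dataclass(frozen=True)
class Role:
    name: str
    allowed: frozenset = frozenset()      # actions the agent may take autonomously
    needs_human: frozenset = frozenset()  # actions that require human sign-off


def authorize(role: Role, action: str) -> Decision:
    """Decide how an agent acting under `role` may handle `action`."""
    # Escalation is checked first so it wins if an action is listed in both sets.
    if action in role.needs_human:
        return Decision.ESCALATE
    if action in role.allowed:
        return Decision.ALLOW
    # Anything not explicitly listed is denied by default.
    return Decision.DENY


# Hypothetical role for a shopping agent.
shopping_agent = Role(
    name="shopping_agent",
    allowed=frozenset({"search_catalog", "add_to_cart"}),
    needs_human=frozenset({"place_order"}),
)

for action in ("search_catalog", "place_order", "export_customer_data"):
    print(action, "->", authorize(shopping_agent, action).value)
```

The deny-by-default branch is the design choice that matters: new capabilities stay unavailable until someone deliberately adds them to a role.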

Works cited

1. Pascal’s Substack | Substack, accessed March 23, 2026, https://p4sc4l.substack.com/

2. What No One Tells You About LinkedIn AI Assistants: The Truth About Profile Bans, accessed March 23, 2026, https://www.marketowl.ai/ai-digital-marketing-today/what-no-one-tells-you-about-linkedin-ai-assistants-the-truth-about-profile-bans

3. How to Use AI on LinkedIn Without Getting Banned, accessed March 23, 2026, https://blog.closelyhq.com/how-to-use-ai-on-linkedin-without-getting-banned/

4. LinkedIn automation safety guide 2026: avoid bans and restrictions ..., accessed March 23, 2026, https://getsales.io/blog/linkedin-automation-safety-guide-2026/

5. LinkedIn expands AI training with default data use | Digital Watch Observatory, accessed March 23, 2026, https://dig.watch/updates/linkedin-expands-ai-training-with-default-data-use

6. Apple Targets AI Vibe Coding Apps Like Replit, Vibe Code - Geeky ..., accessed March 23, 2026, https://www.geeky-gadgets.com/apple-vibe-coding-block/

7. Bad vibes: Apple blocks updates for some AI coding apps in the App Store, accessed March 23, 2026, https://appleinsider.com/articles/26/03/18/bad-vibes-apple-blocks-updates-for-some-ai-coding-apps-in-the-app-store

8. accessed March 23, 2026, https://www.geeky-gadgets.com/apple-vibe-coding-block/#:~:text=Apple’s%20recent%20enforcement%20of%20App,and%20Replit%20under%20significant%20scrutiny.

9. App Review Guidelines - Apple Developer, accessed March 23, 2026, https://developer.apple.com/app-store/review/guidelines/

10. How to Get a Vibe-Coded App to the Apple App Store - Adalo, accessed March 23, 2026, https://www.adalo.com/posts/get-vibe-coded-app-apple-app-store

11. Apple introduces rules for AI in App Store - УНН, accessed March 23, 2026, https://unn.ua/en/news/apple-pushes-ai-out-of-app-store-media

12. CEO Update: Building trust in AI is key to a thriving knowledge ecosystem - Stack Overflow, accessed March 23, 2026, https://stackoverflow.blog/2024/10/22/stack-overflow-ceo-update-first-half-1h-2024/

13. Reddit Will Start Charging Big Companies for API Access - Gizmodo, accessed March 23, 2026, https://gizmodo.com/reddit-will-charge-big-companies-for-ai-api-access-1850350291

14. Gemini 2.5 Flash | Hacker News, accessed March 23, 2026, https://news.ycombinator.com/item?id=43720845

15. Reddit’s upcoming changes attempt to safeguard the platform against AI crawlers - Medial, accessed March 23, 2026, https://medial.app/news/reddits-upcoming-changes-attempt-to-safeguard-the-platform-against-ai-crawlers-af4b30aa4827d

16. Stack Overflow Will Begin Charging AI Companies for Training Data | by ODSC, accessed March 23, 2026, https://odsc.medium.com/stack-overflow-will-begin-charging-ai-companies-for-training-data-cdcbf5d7207e

17. CEO Update: Exploration and experimentation for bold evolution - Stack Overflow, accessed March 23, 2026, https://stackoverflow.blog/2025/05/20/ceo-update-exploration-and-experimentation-for-bold-evolution/

18. [Announcement] Reddit’s upcoming API changes and impact on toolbox., accessed March 23, 2026, https://www.reddit.com/r/toolbox/comments/141locs/announcement_reddits_upcoming_api_changes_and/

19. Amazon Blocks AI Shopping Bots Amid Surge in Agentic Commerce, accessed March 23, 2026, https://mlq.ai/news/amazon-blocks-ai-shopping-bots-amid-surge-in-agentic-commerce/

20. Amazon quietly blocks AI bots from Meta, Google, Huawei and more - Modern Retail, accessed March 23, 2026, https://www.modernretail.co/technology/amazon-expands-its-fight-to-keep-ai-bots-off-its-e-commerce-site/

21. Amazon blocks Perplexity from sending its AI agents to purchase goods - SiliconANGLE, accessed March 23, 2026, https://siliconangle.com/2025/11/04/amazon-blocks-perplexity-sending-ai-agents-purchase-goods/

22. Amazon Blocks AI Bots from Meta, Google, Huawei, Others - Camphouse, accessed March 23, 2026, https://camphouse.io/news/amazon-blocks-ai-bots-ecommerce-data

23. Amazon Blocks Google’s AI Shopping Agents: The Future of AI in E-Commerce - WORLDEF, accessed March 23, 2026, https://worldef.com/2025/08/01/amazon-blocks-googles-ai-shopping-agents-the-future-of-ai-in-e-commerce/

24. Microsoft Windows Head Pavan Davuluri sends open letter; says ..., accessed March 23, 2026, https://timesofindia.indiatimes.com/technology/tech-news/microsoft-windows-head-pavan-davuluri-sends-open-letter-says-people-want-better-windows-and-shares-6-big-changes-coming-to-windows-11/articleshow/129714299.cms

25. Conditional Access protections for Generative AI - Microsoft Entra ID, accessed March 23, 2026, https://learn.microsoft.com/en-us/entra/identity/conditional-access/policy-all-users-copilot-ai-security

26. Least Privilege Report 2026 [Oso x Cyera], accessed March 23, 2026, https://www.osohq.com/research

27. Agentic AI Risk-Management Standards Profile | CLTC Berkeley, accessed March 23, 2026, https://cltc.berkeley.edu/wp-content/uploads/2026/02/Agentic-AI-Risk-Management-Standards-Profile.pdf

28. File 1 - OPEN PEER REVIEW, accessed March 23, 2026, https://files.sdiarticle5.com/wp-content/uploads/2026/03/Revised-ms_JERR_154775_v1.docx

29. Copilot policy flaw allows unauthorized access to AI agents - Digital Watch Observatory, accessed March 23, 2026, https://dig.watch/updates/copilot-policy-flaw-allows-unauthorized-access-to-ai-agents

30. Microsoft says ungoverned AI agents could become corporate ‘double agents.’ Its fix costs $99 a month. | VentureBeat, accessed March 23, 2026, https://venturebeat.com/technology/microsoft-says-ungoverned-ai-agents-could-become-corporate-double-agents-its

31. Which News Sites Block AI Crawlers in 2025? [New Data] - BuzzStream, accessed March 23, 2026, https://www.buzzstream.com/blog/publishers-block-ai-study/

32. Most Major News Publishers Block AI Training & Retrieval Bots - Search Engine Journal, accessed March 23, 2026, https://www.searchenginejournal.com/most-major-news-publishers-block-ai-training-retrieval-bots/564605/

33. 33% of Publishers Will Block Google AI Overviews - Data Analysis - ALM Corp, accessed March 23, 2026, https://almcorp.com/blog/publishers-blocking-google-ai-overviews-data-analysis-2026/

34. Preventing AI extractivism: the case for braiding indigenous data justice with ABS for stronger AI data governance | springerprofessional.de, accessed March 23, 2026, https://www.springerprofessional.de/en/preventing-ai-extractivism-the-case-for-braiding-indigenous-data/52178982

35. Preventing AI extractivism: the case for braiding indigenous data justice with ABS for stronger AI data governance | springerprofessional.de, accessed March 23, 2026, https://www.springerprofessional.de/preventing-ai-extractivism-the-case-for-braiding-indigenous-data/52178982

36. (PDF) Is data material? Toward an environmental sociology of AI - ResearchGate, accessed March 23, 2026, https://www.researchgate.net/publication/393367762_Is_data_material_Toward_an_environmental_sociology_of_AI

37. Artificial intelligence, labour and society - ETUI, accessed March 23, 2026, https://www.etui.org/sites/default/files/2024-03/Artificial%20intelligence%2C%20labour%20and%20society_2024.pdf

38. Towards a digital planetary health perspective: generative AI and the digital determinants of health - Oxford Academic, accessed March 23, 2026, https://academic.oup.com/heapro/article/40/5/daaf153/8264474

39. Artificial intelligence and consent: a feminist anti-colonial critique | Internet Policy Review, accessed March 23, 2026, https://policyreview.info/pdf/policyreview-2021-4-1602.pdf

40. AI ethical challenges: a perspective of AI developers in postcolonial countries, accessed March 23, 2026, https://www.emerald.com/itp/article/doi/10.1108/ITP-11-2024-1466/1340262/AI-ethical-challenges-a-perspective-of-AI

41. Artificial Intelligence in Creative Industries: Psychological and Social Implications, accessed March 23, 2026, https://www.researchgate.net/publication/401664572_Artificial_Intelligence_in_Creative_Industries_Psychological_and_Social_Implications

42. New global report finds enterprises hitting Agentic AI inflection point - Dynatrace, accessed March 23, 2026, https://www.dynatrace.com/news/press-release/pulse-of-agentic-ai-2026/

43. June 2025 - ISG, accessed March 23, 2026, https://isg-one.com/docs/default-source/default-document-library/state-of-the-agentic-ai-market-report-2025.pdf?sfvrsn=2118a931_7