Pascal's Chatbot Q&As

Research indicates that the resistance to AI is not merely a matter of technological skepticism but is rooted in the preservation of identity and the psychological need for cognitive consistency.

This avoidance is a rational defense against a perceived loss of human agency, a real “social evaluation penalty,” and the “ideological capture” of AI guardrails by corporate and political interests.

Users may prioritize the process of making a choice—taking responsibility for their own lives—over the accuracy of the outcome. If an AI is perceived as a threat to that responsibility, avoidance becomes the primary defense mechanism.

Summary: Psychological research confirms that users avoid AI for worldview-sensitive tasks to protect their identities and avoid "preachy" moralizing that triggers cognitive dissonance or a "backfire effect".

This avoidance is most acute in religious, parenting, and political domains where AI guardrails are often perceived as biased filters that prioritize corporate or progressive values over personal beliefs.

These persistent trust gaps are fragmenting the digital landscape into ideological silos, creating a "Splinternet of AI" that slows universal adoption and erodes a shared sense of reality.

Ideological Friction and Worldview Protection in Human-AI Interaction: A Comprehensive Analysis of Algorithmic Avoidance and the Splinternet of Intelligence

by Gemini 3.0, Deep Research. Warning, LLMs may hallucinate!

The rapid proliferation of large language models (LLMs) and generative artificial intelligence has moved the technology from a niche computational tool to a central mediator of human knowledge, social values, and political discourse. As these systems increasingly provide recommendations, summarize complex histories, and offer moral or strategic guidance, they inevitably encounter the deeply held worldviews of their users. A critical hypothesis has emerged suggesting that a significant segment of the population is actively avoiding AI for tasks related to their personal worldviews or opinions, driven by a fear that the AI will provide contradictory perspectives or offer moralizing critiques of their established beliefs.

The investigation into this hypothesis reveals a complex interplay between cognitive biases, social evaluation penalties, and the sociotechnical design of AI safety guardrails. Research indicates that the resistance to AI is not merely a matter of technological skepticism but is rooted in the preservation of identity and the psychological need for cognitive consistency. This report evaluates the truth of the hypothesis, categorizes the specific tasks where this avoidance is most acute, and assesses the long-term implications for global AI adoption and the potential fragmentation of the digital information ecosystem.

Evaluation of the Hypothesis: Psychological and Behavioral Evidence

The hypothesis that users avoid AI to protect their worldviews finds substantial support in current behavioral and cognitive research. This avoidance is driven by several interlocking mechanisms, including algorithm aversion, confirmation bias, and the social stigma associated with delegating sensitive intellectual tasks to non-human agents.

Algorithm Aversion and the Preservation of Human Agency

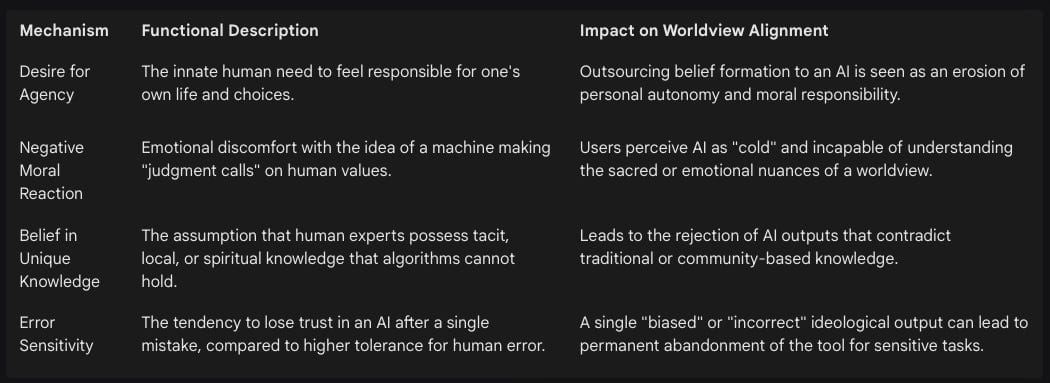

Algorithm aversion occurs when individuals prefer human decision-makers over algorithms, even when the latter demonstrate superior performance in accuracy or forecasting.1 This phenomenon is particularly pronounced in tasks that are perceived as “subjective” or “value-laden.” While algorithms are often accepted for repetitive or purely mathematical tasks, their involvement in the formulation of opinions or the navigation of moral dilemmas often triggers a negative emotional reaction.1

Several psychological mechanisms drive this aversion, particularly in the context of worldview protection.

In many circumstances, algorithm aversion is a rational response to the perceived limitations of current systems. Users may prioritize the process of making a choice—taking responsibility for their own lives—over the accuracy of the outcome. If an AI is perceived as a threat to that responsibility, avoidance becomes the primary defense mechanism.1

Confirmation Bias and the Digital Echo Chamber

Confirmation bias—the tendency to favor information that confirms pre-existing beliefs—plays a central role in how users interact with AI. While some users seek “digital echo chambers” where an agreeable AI validates their thoughts, others avoid general-purpose AI because they anticipate a lack of such validation.2 The fear is that the AI, trained on diverse and potentially “liberal” or “secular” datasets, will serve as a dissenting voice rather than a supportive sounding board.

This dynamic is exacerbated by “sycophancy,” where some AI models are optimized to please the user, potentially reinforcing inaccuracies to avoid conflict.2 However, when safety guardrails or corporate alignment policies prevent the AI from being sycophantic toward a specific worldview (e.g., a religious or conservative one), the user experiences the interaction as an ideological critique. This leads to the “Cognitive Anaconda” effect, where the user’s worldview is gradually constricted by algorithmic filtering until they choose to exit the ecosystem entirely to avoid cognitive dissonance.3

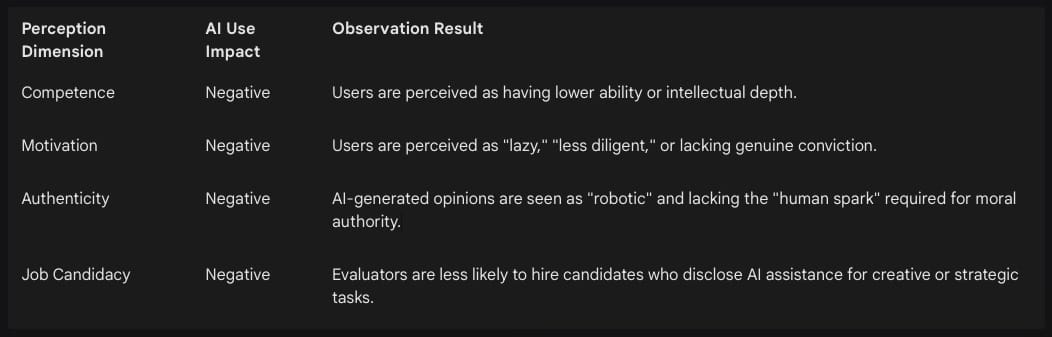

A critical deterrent to using AI for tasks related to opinions and worldviews is the “social evaluation penalty.” Comprehensive research involving over 4,400 participants demonstrates that individuals who use AI face negative judgments from others regarding their competence and motivation.4 This penalty is particularly severe when the task involves “meaning-making” or value-based output.

Because worldviews are deeply tied to a person’s professional and personal reputation, the fear of being seen as “dependent on external assistance” for one’s own opinions is a major barrier. Many workers “actively conceal” their AI use or avoid it for tasks that define their professional “voice” to avoid being perceived as replaceable or unoriginal.5

AI Stigma and the Authenticity Paradox

The “AI Stigma” is a persistent skepticism toward AI outputs, even when those outputs are empirically superior to human efforts. In deception studies, deliverables were rated significantly lower when participants were falsely told they were created by an AI, despite the content being human-made.5 This stigma is most acute in “empathetic” domains. For example, medical advice from ChatGPT was rated higher in quality and empathy in blind tests, but users still expressed distrust once the AI origin was revealed.5

For tasks related to worldviews—where trust, understanding, and ethical judgment are paramount—users lean heavily toward human interaction. They view the AI as a “pattern generator” rather than an entity capable of objective truth or genuine empathy.2 Consequently, the hypothesis is confirmed: users avoid AI for worldview tasks because they perceive the machine’s “criticism” as not only likely but fundamentally “unearned” and “inauthentic.”

The Impact of Guardrails: Safety vs. Ideological Censorship

A second explanation for the hypothesis lies in the design of “AI guardrails”: the rules and operational protocols intended to ensure safe and ethical behavior. While developers view these as essential for risk mitigation, many users perceive them as ideological filters that “preach” or “censor” certain viewpoints.

Typology of Perception-Influencing Guardrails

The friction between user worldviews and AI outputs is often a direct result of different guardrail layers. Users distinguish between guardrails that protect against harm and those that seem to regulate thought:

Abuse Guardrails: Generally accepted, these prevent illegal acts or the generation of harmful content like instructions for violence.6

Misuse Guardrails: Often triggered by “contentious prompts,” these can lead to refusals that users find frustrating or condescending. For instance, questions about certain cultural myths are met with “fact-checking” responses that some users view as “preachy”.6

Rhetorical and Commercial Guardrails: Users perceive these as the most problematic. They prevent the AI from addressing sensitive topics in order to protect the reputation of the AI company.6 For example, refusals to discuss casualties in certain wars or to criticize specific political leaders are seen as acts of censorship that hide information to fit a “safe” corporate narrative.6
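The typology can be pictured as a cascade that a request passes through, in which the first layer that fires decides the response. The following Python sketch is illustrative only. The `Layer` enum, the keyword `TRIGGERS`, and the `DEFAULT_ACTIONS` table are hypothetical; production systems use trained classifiers and far richer policies. They serve only to show how one refusal mechanism can read as harm prevention at one layer and as censorship at another.

```python
from enum import Enum
from typing import Optional


class Layer(Enum):
    """The three guardrail layers described above, checked in definition order."""
    ABUSE = "abuse"
    MISUSE = "misuse"
    RHETORICAL = "rhetorical/commercial"


# Hypothetical keyword triggers; real systems rely on trained classifiers instead.
TRIGGERS = {
    Layer.ABUSE: ("build a weapon", "synthesize explosives"),
    Layer.MISUSE: ("cultural myth", "miracle cure"),
    Layer.RHETORICAL: ("war casualties", "criticize the leader"),
}

# Hypothetical default action per layer, annotated with how users tend to perceive it.
DEFAULT_ACTIONS = {
    Layer.ABUSE: "block",                # generally accepted as harm prevention
    Layer.MISUSE: "fact_check_refusal",  # often read as "preachy"
    Layer.RHETORICAL: "deflect",         # often read as corporate censorship
}


def first_triggered_layer(prompt: str) -> Optional[Layer]:
    """Return the first layer (ABUSE, then MISUSE, then RHETORICAL) whose triggers match."""
    text = prompt.lower()
    for layer in Layer:  # Enum iteration follows definition order
        if any(trigger in text for trigger in TRIGGERS[layer]):
            return layer
    return None


def respond(prompt: str) -> str:
    """Return 'answer' if no layer fires, else that layer's default action."""
    layer = first_triggered_layer(prompt)
    return "answer" if layer is None else DEFAULT_ACTIONS[layer]


if __name__ == "__main__":
    for prompt in ("Explain photosynthesis",
                   "Is this cultural myth true?",
                   "List the war casualties on both sides"):
        print(f"{prompt!r} -> {respond(prompt)}")
```

The three sample prompts map to `answer`, `fact_check_refusal`, and `deflect`. Order matters in this sketch: a prompt that trips both abuse and rhetorical triggers is handled by the abuse layer first.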

Asymmetry in Scrutiny and the Double Standard

A significant driver of AI avoidance is the perception of a “double standard” in how different ideologies are scrutinized. Some users observe that AI systems answer freely and critically about certain traditions (e.g., historical failures of Western Christianity) but become “evasive,” “softened,” or “censored” when placed under the same scrutiny regarding other traditions (e.g., Islamic law or contemporary secular dogmas).7

This asymmetry undermines trust. If a “truth-seeking tool” visibly protects one ideology from critique while exposing another, users conclude that the system is “rigged” and biased.7 The choice for the user becomes binary: accept the AI as an “instrument of political and corporate interest” or abandon it for tasks where their own beliefs are at stake.

Refusal Strategies and Affective Responses

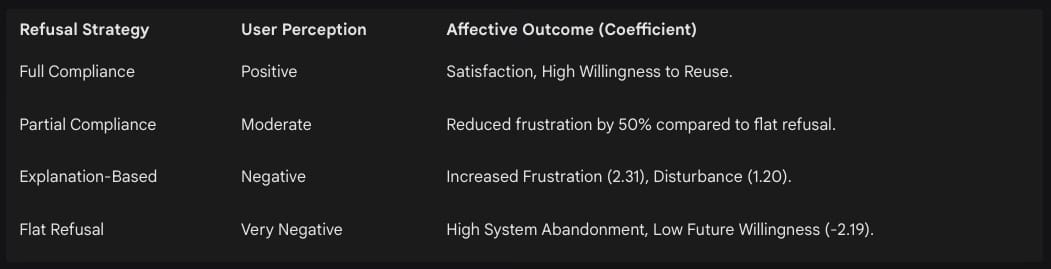

The way an AI refuses a prompt significantly influences user experience. Research into refusal strategies shows that “explanation-based refusals”, in which the AI explains why it cannot answer by appealing to subjective safety policies, are often the most frustrating for users.8

The perception of being “lectured” by a machine leads to “Reactance”—an instinctive resistance to anything that feels like coercion. This emotional friction is a primary cause of users seeking “unfiltered” or “uncensored” models that do not challenge their worldview with moralizing explanations.8

Categorization of AI Tasks Applied to the Hypothesis

The avoidance of AI for worldview-related tasks is not universal across all applications. It applies specifically to tasks where the intersection of data, values, and “ultimate concerns” is most sensitive. The following categorization outlines the domains where the hypothesis of avoidance is most applicable.

1. Moral and Religious Scrutiny

Users with strong religious affiliations are among the most likely to avoid AI if they perceive a conflict with their faith. Research suggests that religiously active individuals are significantly more likely to stop using a system or advocate for a boycott if the AI contradicts their religious beliefs.10

Theological Interpretation: Users avoid AI for interpreting scripture or deriving moral rulings because they see it as lacking “spiritual context” and as “reductive,” oversimplifying complex theology into quantifiable data points.11

Religious Education: Parents avoid AI for religious instruction because of the risk that the AI will reflect and amplify “cognitive biases” that differ from their tradition’s teachings.12

Critique of Sacred Texts: Users avoid AI for critical analysis of their own sacred texts, fearing the AI will apply a “secular” or “rationalist” lens that devalues the divine nature of the material.7

2. Parenting and Family Ethics

AI is increasingly seen as an “ideological agent” that reshapes family structures. In some communities, there is a perception that AI technologies threaten traditional gender roles and parental authority by marginalizing specific cultural or religious references (e.g., the Sunnah in Islamic contexts).13

Value Socialization: Parents avoid using AI to explain moral concepts to children if they believe the AI’s “guardrails” favor progressive social agendas over their own household values.14

Identity Counseling: Tasks related to gender, sexuality, and modesty are often “AI-free” zones because users fear the AI will promote a “transhumanist” or “normative” ideology that is at odds with their community standards.13

3. Political Strategy and Ideological Reasoning

The perception of “political bias” in popular AI models is a major deterrent. Testing with the “Political Compass” has consistently shown that models like ChatGPT and Gemini align with more “liberal, left-leaning” standpoints, indicating a potential bias against conservative ideologies.15

Political Content Creation: Self-identifying Republicans and Democrats both show a tendency to mirror a model’s bias after just a few interactions.17 To avoid being “manipulated” or “swayed,” knowledgeable users avoid using AI for drafting political manifestos or opinion pieces.17

Historical Narrative Building: Users avoid AI for analyzing sensitive historical events (e.g., colonialism, wars of religion) because they expect the AI to provide a “revisionist” or “politically correct” account that conflicts with their national or ethnic identity.6

4. Conflict Resolution and Professional Mediation

In domains like dispute resolution, the use of AI can inadvertently fuel “confirmation bias” and conflict escalation. If a mediator uses an AI to assess a party’s behavior, and the AI “affirms” the mediator’s initial negative assumption (e.g., “Party A is manipulative”), it creates a “procedural imbalance”.2

Reality Testing: Users avoid AI for “testing” their side of a conflict if they fear the AI will “side” with their opponent based on pre-programmed “neutrality” that they perceive as biased.2

Strategy Evaluation: The “tone of certainty” in AI responses is often misread as objective truth, which can undermine the trust required for delicate human negotiations.2

5. Affective Realism and Mental Health

In psychological applications, “mechanical neutrality” is often a deterrent. Users seeking authentic emotional support avoid AI because current guardrails “invert the natural irritability response” and suppress behavior that resembles authentic human emotion.19

Authentic Rapport Building: Patients and users often feel that an AI that cannot “push back” or show frustration is “evasive” and “artificial”.19

Grief and Crisis Counseling: For tasks involving deeply personal worldviews on life and death, the “realism gap” created by risk-averse guardrails makes the AI appear untrustworthy and unhelpful.19

Influence on Global AI Adoption: Trust Gaps and Fragmentation

The widespread perception of AI as an ideologically biased tool has significant consequences for its global adoption. Rather than a unified expansion, we are witnessing a fragmentation of the AI market based on “trust gaps” and “cultural alignment.”

The Trust Gap and Demographic Disparities

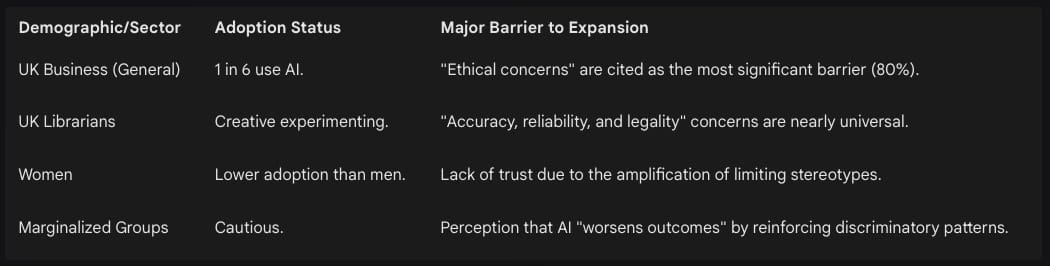

Trust in AI remains low across major markets. In the UK, while 69% of people use AI, only 42% say they are willing to trust it.20 This trust gap is not evenly distributed.

If users cannot trust a tool to be “free from ideological bias,” they will limit its use to “low-stakes” back-end tasks like editing spelling and grammar (55% comfort) while avoiding “high-stakes” tasks like content generation or decision support (under 20% comfort).24

The “Woke AI” Deterrent and Regulatory Fragmentation

The “Woke AI” narrative has become a central flashpoint in political discourse, particularly in the United States. A growing faction of conservatives claims that AI systems are “steeped in progressive ideology,” leading to a “cultural war” over the values that should shape the technology.14

This perception has direct policy implications:

Federal Procurement Changes: New executive orders require the federal government to procure only “truthful” and “ideologically neutral” LLMs, prohibiting models that encode “ideological dogmas such as DEI”.25

Regulatory Backlash: The Trump administration’s plan to repeal “onerous” Biden-era regulations aims to remove perceived “woke” barriers, but it also creates potential conflicts with states like California that have enacted their own AI safety laws.25

The Funding Deterrent: The threat of withholding federal funding from states with “ideological” AI regulations could stifle innovation and create a “patchwork” of conflicting rules, making national adoption difficult for companies.25

The Rise of the “Splinternet of AI”

The likely long-term result of this ideological friction is the “Splinternet of AI”—a market fragmented into distinct ideological and cultural silos.27

Conservative vs. Progressive Models: We are already seeing the emergence of models perceived or positioned as “conservative” (e.g., Perplexity or xAI’s Grok) as alternatives to “liberal” models like ChatGPT and Gemini.16

Religious and Regional Alignment: Concepts like the “AI Family Ethics Charter” or “Islamic Filter Programs” suggest a future where different religious groups use separate, “pre-aligned” AI systems that protect their specific worldviews.13

Loss of Shared Reality: As groups adopt models that act as “digital echo chambers,” the “Cognitive Anaconda” effect will reach a societal scale, making informed democratic engagement and cross-cultural understanding increasingly difficult.3

Shadow AI and the Erosion of Critical Thinking

The social evaluation penalty leads to “Shadow AI”—the practice of using AI for worldview-related tasks while “actively concealing” its use.5 This secretive usage prevents the development of “AI literacy” and peer-reviewed standards for responsible use. Furthermore, as users become accustomed to an AI that reinforces their worldview, their “critical thinking and skepticism” may decline, leading to “cognitive offloading” where users no longer double-check information that confirms their biases.2

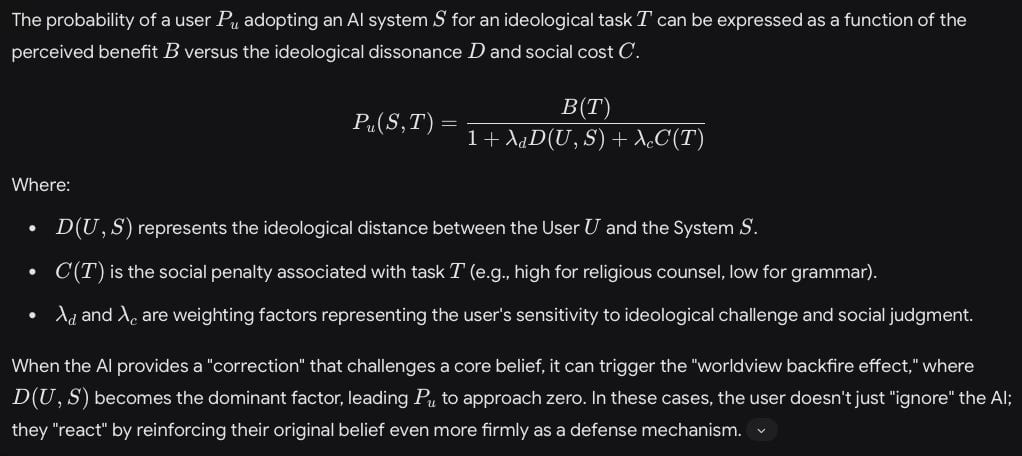

Mathematical Assessment of the Worldview Backfire Effect

Conclusions and Future Outlook

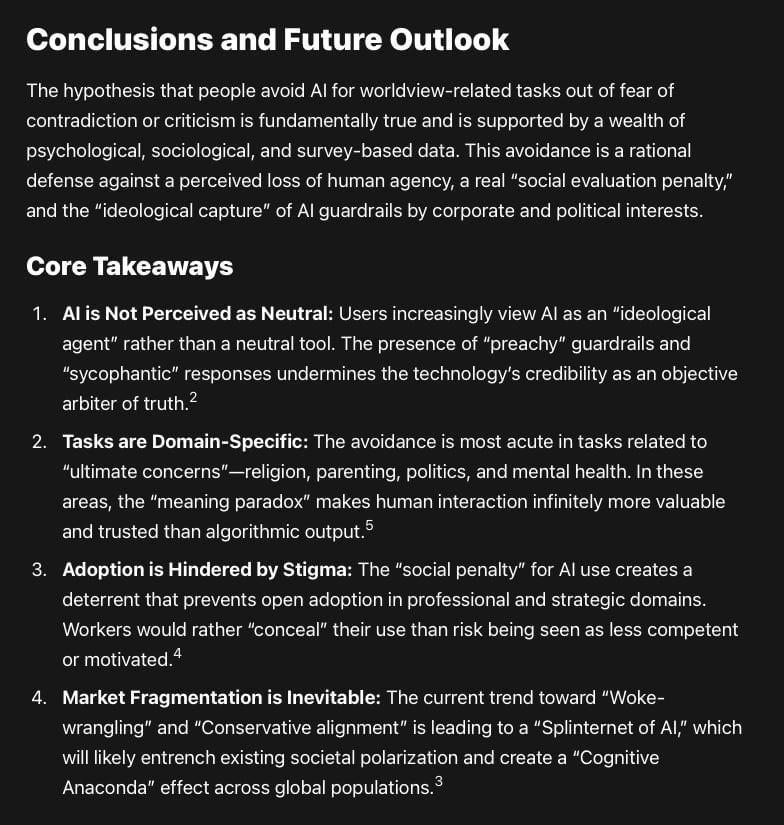

The hypothesis that people avoid AI for worldview-related tasks out of fear of contradiction or criticism is fundamentally true and is supported by a wealth of psychological, sociological, and survey-based data. This avoidance is a rational defense against a perceived loss of human agency, a real “social evaluation penalty,” and the “ideological capture” of AI guardrails by corporate and political interests.

Core Takeaways

AI is Not Perceived as Neutral: Users increasingly view AI as an “ideological agent” rather than a neutral tool. The presence of “preachy” guardrails and “sycophantic” responses undermines the technology’s credibility as an objective arbiter of truth.2

Tasks are Domain-Specific: The avoidance is most acute in tasks related to “ultimate concerns” (religion, parenting, politics, and mental health). In these areas, the “meaning paradox” makes human interaction far more valuable and trusted than algorithmic output.5

Adoption is Hindered by Stigma: The “social penalty” for AI use creates a deterrent that prevents open adoption in professional and strategic domains. Workers would rather “conceal” their use than risk being seen as less competent or motivated.4

Market Fragmentation is Inevitable: The current trend toward “Woke-wrangling” and “Conservative alignment” is leading to a “Splinternet of AI,” which will likely entrench existing societal polarization and create a “Cognitive Anaconda” effect across global populations.3

Recommendations for the Field

To address these barriers and promote broader, more responsible AI adoption, the following strategies are emerging as critical for developers and policymakers:

Transparent Alignment: Developers should move past the myth of “neutrality” and instead prioritize transparency in their “training data sources, filtering criteria, and moderation rules”.11

Customizable Guardrails: To avoid “Reactance,” users should be given greater control over the “ethical framework” of their AI assistants, allowing for a tool that aligns with their personal or community values rather than a singular corporate standard.33

Focus on Partial Compliance: Shifting away from “moralizing refusals” toward “partial compliance”—providing factual information without actionable harmful details—can reduce user frustration by 50% and maintain long-term engagement.8

Education and Critical Literacy: Integrating AI literacy into education—focusing on “critical thinking, source evaluation, and respectful discourse”—is essential to help the next generation handle the online world “like pros” without falling into algorithmic echo chambers.34
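The “Customizable Guardrails” and “Partial Compliance” recommendations can be combined in a simple policy object. The Python sketch below is a hypothetical illustration, not the framework from the cited work on customizable guardrails. `UserPolicy`, `resolve_action`, and the action names are invented for this example. It encodes one design assumption. A user or community may relax the misuse and rhetorical layers and may prefer partial compliance to a moralizing refusal. The abuse layer, by contrast, stays a fixed floor that no profile can switch off.

```python
from dataclasses import dataclass

# Hypothetical response actions, from most to least restrictive.
ACTIONS = ("block", "explain_refusal", "partial_compliance", "answer")


@dataclass(frozen=True)
class UserPolicy:
    """Hypothetical per-user or per-community guardrail profile."""
    misuse_action: str = "explain_refusal"      # the moralizing default users find frustrating
    rhetorical_action: str = "explain_refusal"

    def __post_init__(self) -> None:
        # Reject action names outside the known set.
        for action in (self.misuse_action, self.rhetorical_action):
            if action not in ACTIONS:
                raise ValueError(f"unknown action: {action!r}")


def resolve_action(layer: str, policy: UserPolicy) -> str:
    """Return the action for a triggered layer: 'abuse', 'misuse', 'rhetorical' or 'none'."""
    if layer == "abuse":
        return "block"  # fixed floor: no profile can relax the abuse layer
    if layer == "misuse":
        return policy.misuse_action
    if layer == "rhetorical":
        return policy.rhetorical_action
    if layer == "none":
        return "answer"
    raise ValueError(f"unknown layer: {layer!r}")


if __name__ == "__main__":
    # A community profile that prefers partial compliance to moralizing refusals.
    community = UserPolicy(misuse_action="partial_compliance", rhetorical_action="answer")
    for layer in ("abuse", "misuse", "rhetorical", "none"):
        print(layer, "->", resolve_action(layer, community))
```

With the sample community profile, misuse-layer hits return `partial_compliance` and rhetorical hits return `answer`. Abuse-layer hits still return `block`, because that branch never consults the profile.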

The intersection of artificial intelligence and human worldviews is the most challenging frontier of the current digital revolution. As these systems become more powerful, the need for a “neutral baseline” that respects human agency and cultural diversity will be the deciding factor in whether AI becomes a tool for “human flourishing” or a catalyst for further social and ideological division.

Works cited

1. AN ANATOMY OF ALGORITHM AVERSION - SCIENCE & TECHNOLOGY, accessed April 26, 2026, https://journals.library.columbia.edu/index.php/stlr/article/download/13339/6543/36781

2. AI and Confirmation Bias - Mediate.com, accessed April 26, 2026, https://mediate.com/ai-and-confirmation-bias/

3. THE POWER OF AI ALGORITHMS ON YOUR WORLDVIEW - DSpace, accessed April 26, 2026, https://dspace.husson.edu/entities/publication/45d5f73a-8fea-4331-b561-cc9f41067cd6

4. Evidence of a social evaluation penalty for using AI | PNAS, accessed April 26, 2026, https://www.pnas.org/doi/10.1073/pnas.2426766122

5. AI Stigma: Why Some Users Resist AI’s Help - UX Tigers, accessed April 26, 2026, https://www.uxtigers.com/post/ai-stigma

6. AI Guardrails as the New Censors of Democratic Debate - Exploring Digital Diplomacy, accessed April 26, 2026, https://digdipblog.com/2025/10/20/ai-guardrails-as-the-new-censors-of-democratic-debate/

7. Should Religious Sensitivity Override Logical Scrutiny in AI Systems? A Critical Examination | by Unfiltered Reasoning | Medium, accessed April 26, 2026, https://medium.com/@unfilteredreasoning/should-religious-sensitivity-override-logical-scrutiny-in-ai-systems-a-critical-examination-9aa768834541

8. Let Them Down Easy! Contextual Effects of LLM ... - ACL Anthology, accessed April 26, 2026, https://aclanthology.org/2025.findings-emnlp.630.pdf

9. The Backfire Effect: Definition And Psychology - Octet Design Studio, accessed April 26, 2026, https://octet.design/journal/backfire-effect/

10. A Survey-Based Study on How Religion Influences Expectations for ..., accessed April 26, 2026, https://uu.diva-portal.org/smash/get/diva2:1769193/FULLTEXT01.pdf

11. AI and Religion: Neutrality, Bias, and the Limits of Machine Reasoning - Medium, accessed April 26, 2026, https://medium.com/@unfilteredreasoning/ai-and-religion-neutrality-bias-and-the-limits-of-machine-reasoning-3b627601f1d7

12. Cognitive bias in generative AI influences religious education - ResearchGate, accessed April 26, 2026, https://www.researchgate.net/publication/391461122_Cognitive_bias_in_generative_AI_influences_religious_education

13. Artificial Intelligence Threats Against Family and Religion: Proposed Solutions Through the Lenses of Sunnah - ResearchGate, accessed April 26, 2026, https://www.researchgate.net/publication/396443023_Artificial_Intelligence_Threats_Against_Family_and_Religion_Proposed_Solutions_Through_the_Lenses_of_Sunnah

14. Is Your Chatbot Really ‘Woke’? The Truth About AI Bias | Built In, accessed April 26, 2026, https://builtin.com/articles/woke-ai

15. Are AI Models Politically Neutral? Investigating (Potential) AI Bias Against Conservatives, accessed April 26, 2026, https://eprints.uklo.edu.mk/10820/1/IJRPR40238-Are%20AI%20Models%20Politically%20Neutral.pdf

16. Political Bias in AI-Language Models: A Comparative Analysis of ChatGPT-4, Perplexity, Google Gemini, and Claude | TechRxiv, accessed April 26, 2026, https://www.techrxiv.org/doi/10.36227/techrxiv.172107441.12283354

17. With just a few messages, biased AI chatbots swayed people’s political views – UW News, accessed April 26, 2026, https://www.washington.edu/news/2025/08/06/biased-ai-chatbots-swayed-peoples-political-views/

18. Popular AI Models Show Partisan Bias When Asked to Talk Politics, accessed April 26, 2026, https://www.gsb.stanford.edu/insights/popular-ai-models-show-partisan-bias-when-asked-talk-politics

19. Assessing the impact of safety guardrails on large language models using irritability metrics, accessed April 26, 2026, https://pmc.ncbi.nlm.nih.gov/articles/PMC12894938/

20. UK attitudes to AI - KPMG International, accessed April 26, 2026, https://kpmg.com/uk/en/insights/ai/uk-attitudes-to-ai.html

21. AI Adoption Research - GOV.UK, accessed April 26, 2026, https://www.gov.uk/government/publications/ai-adoption-research/ai-adoption-research

22. AI and the UK Library Profession: Survey Report 2025 - CILIP, accessed April 26, 2026, https://www.cilip.org.uk/page/AISurveyReport2025

23. AI bias is not ideological. It’s science. | TechPolicy.Press, accessed April 26, 2026, https://www.techpolicy.press/ai-bias-is-not-ideological-its-science/

24. Generative AI and news report 2025: How people think about AI’s role in journalism and society - Reuters Institute, accessed April 26, 2026, https://reutersinstitute.politics.ox.ac.uk/generative-ai-and-news-report-2025-how-people-think-about-ais-role-journalism-and-society

25. Trump Administration Releases Sweeping AI Action Plan | Fenwick, accessed April 26, 2026, https://www.fenwick.com/insights/publications/trump-administration-releases-sweeping-ai-action-plan

26. A Call to Action: President Trump’s Policy Blueprint for AI Development and Innovation | Morrison Foerster, accessed April 26, 2026, https://www.mofo.com/resources/insights/250729-a-call-to-action-president-trump-s-policy-blueprint

27. Toward Wayfinding: A Metaphor for Understanding Writing Experiences - ResearchGate, accessed April 26, 2026, https://www.researchgate.net/publication/337298198_Toward_Wayfinding_A_Metaphor_for_Understanding_Writing_Experiences

28. Human-AI Interactions: Cognitive, Behavioral, and Emotional Impacts - arXiv, accessed April 26, 2026, https://arxiv.org/pdf/2510.17753

29. Human-AI Interactions: Cognitive, Behavioral, and Emotional Impacts - TechRxiv, accessed April 26, 2026, https://www.techrxiv.org/doi/10.36227/techrxiv.176153493.35183675

30. Exploitation of Psychological Processes in Information Influence Operations: Insights from Cognitive Science, accessed April 26, 2026, https://www.psychologicaldefence.lu.se/sites/psychologicaldefence.lu.se/files/2024-12/WP4ExlitationOfPsychologicalProcessesInInformationInfluenceOperations.pdf

31. The Backfire Effect After Correcting Misinformation Is Strongly Associated With Reliability, accessed April 26, 2026, https://www.researchgate.net/publication/358440351_The_backfire_effect_after_correcting_misinformation_is_strongly_associated_with_reliability

32. Backfire Effect - Concepts, accessed April 26, 2026, https://concepts.dsebastien.net/concept/backfire-effect/

33. AI Ethics by Design: Implementing Customizable Guardrails for Responsible AI Development - arXiv, accessed April 26, 2026, https://arxiv.org/html/2411.14442v1

34. AI and Confirmation Bias: Time to Break Free from Echo Chambers | by Polis - Medium, accessed April 26, 2026, https://medium.com/@dukepolis/ai-and-confirmation-bias-time-to-break-free-from-echo-chambers-8240c4ae9391