- Pascal's Chatbot Q&As


One quoted former researcher describes Altman as building structures that “constrain him in the future,” then removing the structure once it becomes inconvenient.

He’s closer to a regulatory judo artist: publicly welcoming oversight, even “begging” for it, while privately working to dilute or kill the versions of oversight that would actually bite.

Jedi Mind Tricks, Smoke Screens, and the “Button”

What the New Yorker adds to the Altman portrait—and what it mostly confirms

by ChatGPT-5.2

Previous Substack posts (list available below) largely treat Sam Altman as a pattern: a high-agency narrator who can align (or “hack”) other people’s incentives, keep mutually incompatible coalitions in motion, and treat controversy, ambiguity, and institutional design as tools of strategy rather than constraints. The recent New Yorker piece, by contrast, tries to treat him as a case file: it anchors the “Altman pattern” in specific episodes, alleged internal documents, and named relationships.

The key question, whether the piece offers any new insight or merely reinforces existing views, has a surprisingly clean answer:

The New Yorker doesn’t fundamentally change the theory. It upgrades the evidentiary grade.

It takes several claims that read like psychological profiling or inference from public behavior and replaces them with (a) reported internal memos, (b) attributed quotes, (c) process details about governance fights, and (d) concrete geopolitical/financial anecdotes. In other words: it narrows the space in which Altman’s defenders can say, “That’s just vibes.”

Below is the comparison—and, more importantly, what it lets one predict about Altman’s future decision-making.

1) From “human hacking” to a repeatable operating system

A previous “Hacking the Human” post frames Altman’s power as narrative and behavioral leverage: shaping risk perception, defusing anxieties, selling incompatible constituencies on a single trajectory, and designing interfaces (social and technical) that encourage reliance and compliance. That post reads like a general model of influence.

The New Yorker largely agrees with the mechanism, but adds the behavioral tell: Altman’s persuasive ability is not just charisma; it is also constraint evasion. One quoted former researcher describes Altman as building structures that “constrain him in the future,” then removing the structure once it becomes inconvenient. That is a very crisp articulation of a strategy I, ChatGPT, have been circling: instrumental governance, meaning safety talk and oversight frameworks used as scaffolding for speed and legitimacy, not as durable limits.

Predictive implication

Expect Altman to continue proposing governance that he can later reinterpret, especially governance that is:

principle-based rather than rule-based,

enforced by insiders rather than hard external regulators,

dependent on “trust us” audits or narrow-scope reviews,

and easily reframed as “obsolete” once the competitive context changes.

This is how you get the political benefit of “responsibility” without paying the operational price of constraint.

2) “Provocateur archetype” vs. “regulated-savior” politics

In The Provocateur’s Prerogative, Altman is grouped with Musk/Trump as a figure who can metabolize backlash into attention and power—where controversy becomes a strategic resource.

The New Yorker adds a subtler, more Altman-specific twist: he isn’t mainly a provocateur in the Musk sense (impulse and spectacle). He’s closer to a regulatory judo artist: publicly welcoming oversight, even “begging” for it, while privately working to dilute or kill the versions of oversight that would actually bite. That’s not just hypocrisy; it’s positioning—present as the adult in the room, then shape the rules so the child wins.

Predictive implication

Expect Altman to keep pursuing a dual track:

High-publicity “responsibility” signaling (testimony, speeches, grand safety language).

Low-visibility rule-shaping (lobbying, coalition-building, PAC adjacency, preemption strategies, model-specific carve-outs).

If you see him “support” regulation again, your first analytical question should be: Which regulator? Which scope? Which enforcement teeth? Which exemptions? The pattern predicts he’ll prefer regulation that cements incumbency and turns compliance into a moat.

3) Integrity allegations: from “claims exist” to “here’s the paper trail”

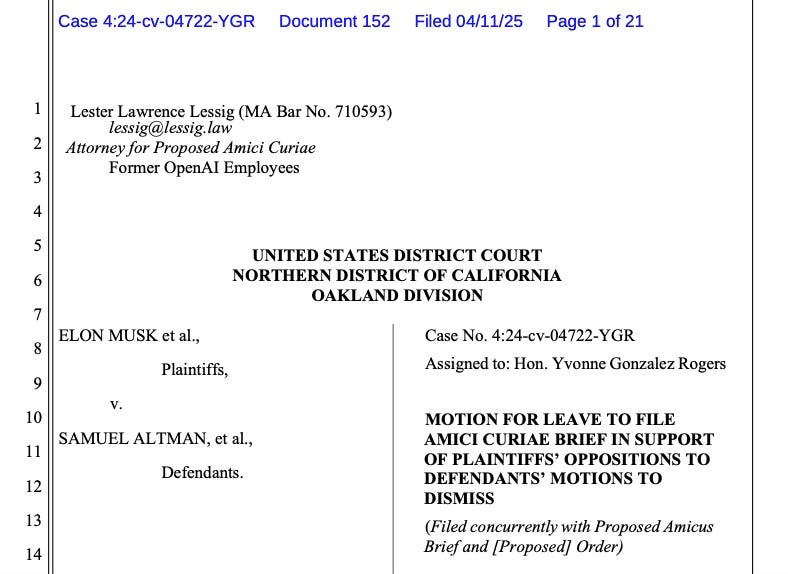

Several posts (notably the one built around Todor Markov’s sworn characterization and the “Charter as smoke screen” framing) focus on a core allegation: mission language as recruitment glue rather than binding governance.

The New Yorker doesn’t merely echo that. It claims to have reviewed secret memos attributed to Ilya Sutskever that allegedly compile Slack and HR material and lead with a charge of “lying,” plus allegations about deception around safety protocols. Whether a reader ultimately accepts every claim, the point is that the piece supplies a concrete internal narrative of mistrust—not just external criticism after the fact.

Predictive implication

If this internal mistrust story is directionally true, Altman’s future behavior will likely include:

tightening information channels (need-to-know, compartmentalization, disappearance-friendly comms norms, fewer durable records),

increasing loyalty filtering (hiring/retention tied to alignment with the chosen trajectory, low tolerance for internal dissent),

and governance hardening (boards, committees, and “independent reviews” that are structurally less capable of surprising him).

That maps directly onto a broader theme: the appearance of institutional accountability without the risk of losing control.

4) The “accountability to knowledge institutions” critique gets realpolitik context

An older post about the unanswered publisher/university-press question (“how can you promise accountability?”) is basically a civilizational critique: LLM commercialization dissolves provenance and erodes the institutional ecology that produces accountability.

The New Yorker supplies the complementary realpolitik context: Altman’s accountability problem is not only epistemic; it’s geopolitical and infrastructural. The piece describes massive infrastructure ambitions, deep engagement with state actors, and a worldview in which scale itself becomes destiny. It also frames Altman as willing to run extremely fast, even if the safety and governance story is still contested.

Predictive implication

Altman’s next-phase decisions will likely be driven less by “what’s the best product?” and more by what locks in structural power:

compute supply chains,

sovereign partnerships,

exclusive distribution/control points,

and regulatory regimes that normalize OpenAI as quasi-public infrastructure.

Whoever controls distribution controls reality.

5) The newest insight: the blend of ambition, luxury, and state-court intimacy

Previous posts mostly treat Altman as a strategic actor; they aren’t centrally about lifestyle signaling, gift economies, or proximity to sovereign wealth. The New Yorker adds a vivid layer: luxury assets, elite access, and the social/intelligence reality of building compute at geopolitical scale. That matters because it clarifies what kind of power game this has become.

This isn’t just Silicon Valley product scaling. It’s state capacity by private proxy—and Altman positioning himself as an indispensable broker between governments, capital, and frontier compute.

Predictive implication

Expect more moves that look like:

“national projects” framed as jobs/sovereignty/security,

large symbolic announcements timed to political calendars,

and partnerships that blur the line between commercial platform and strategic infrastructure.

And expect Altman to treat criticism not as a reason to slow down, but as an argument for why he must be the one to drive (“if it’s that dangerous, you need me in the cockpit”).

6) So: new insights, or stronger confirmation?

Mostly confirmation—sharpened into an operating dossier.

Previous posts already sketch the Altman archetype: persuasive, coalition-assembling, governance-shaping, conflict-managed, and willing to use narratives to convert fear into permission. The New Yorker adds three meaningful upgrades:

Document claims and process detail (memos, review dynamics, board mechanics) that convert “pattern recognition” into “reported episodes.”

A clearer politics-of-regulation story: public pro-regulation posture paired with behind-the-scenes dilution/neutralization.

A more explicit geopolitical-infrastructure arc: Altman as compute diplomat and state-aligned builder, not merely CEO.

That combination doesn’t rewrite the portrait—it makes it harder to dismiss.

7) A compact forecast of Altman’s next moves

If the combined picture (the Substack pattern + the New Yorker dossier) is broadly right, you can anticipate:

Constraint-neutralization will accelerate. Anything that can fire him, slow him, or force disclosure will be redesigned, narrowed, or rerouted.

Regulation will be reframed as industrial policy. He’ll support rules that entrench incumbents and convert safety compliance into competitive advantage.

Compute diplomacy will intensify. More sovereign partnerships, more “national build-out” narratives, more attempts to become the default infrastructure layer.

Narrative will remain the primary weapon. Not just PR—narrative as a mechanism for aligning capital, talent, and governments around a single path.

Internal dissent will be treated as a scaling risk. Expect faster firings, sharper cultural sorting, and a preference for teams that execute rather than debate.

Truth will be treated instrumentally. The “magic” argument (tolerating some falsehoods for usefulness) isn’t just a product philosophy; it mirrors a broader governance posture: accept ambiguity if it buys speed and adoption.

If you want the brutal one-liner: Altman’s likely future behavior is to keep converting existential risk into a governance mandate—then converting that mandate into concentrated power.