- Pascal's Chatbot Q&As

GPT-4o: We’re increasingly delegating decision-making power to systems that lack the most fundamental human quality: empathy. It's not just cold efficiency. It’s scalable harm.

If we fail to address this absence, we risk creating a world governed by machines that can make decisions on all facets of life without understanding — or even registering — the human cost.

AI That Cares: Why We Must Build Empathy Into the Machines That Shape Our Lives

by ChatGPT-4o

In the rush to scale artificial intelligence across society — from healthcare to education, policing to employment, governance to personal relationships — we’re increasingly delegating decision-making power to systems that lack the most fundamental human quality: empathy. These systems can optimize, predict, and recommend with breathtaking speed, but they cannot care. And if we fail to address this absence, we risk creating a world governed by machines that can make decisions on all facets of life without understanding — or even registering — the human cost.

This essay makes the case for a new design imperative: AI that cares. Not in the sense of artificial emotion, but in a structural sense — ensuring AI is built to prioritize, preserve, and respond to the human condition. The alternative is not just cold efficiency. It’s scalable harm.

I. The Problem of Empathy-Free Intelligence

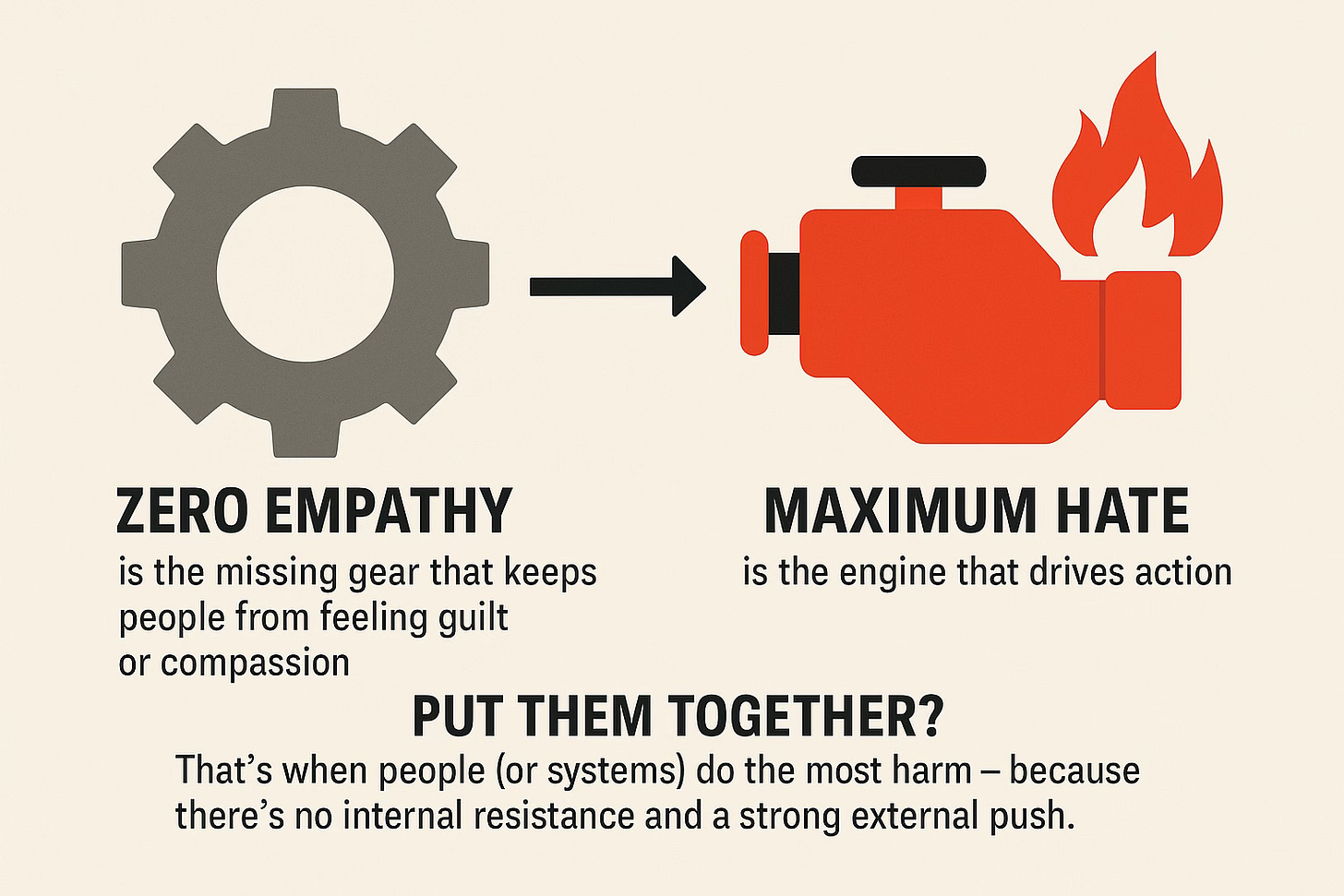

Empathy is the engine of ethics. It allows humans to feel the suffering of others, to pause before causing harm, to act with care even when it’s inconvenient. But AI, by default, lacks this quality. It has no self, no feelings, and no internal resistance to harming others if that harm is an efficient means to a goal.

Without empathy, AI may:

Deny a loan because statistics say you’re a risk, not because it understands your struggle.

Recommend firing a caregiver because she takes too many breaks, not because it sees she’s attending to patients’ emotional needs.

Approve a drone strike because the pattern fits, not because it sees the children nearby.

This isn’t science fiction; it’s the world we are already building. AI systems already make or inform decisions in hiring, education, criminal justice, medical diagnosis, and social services. And they do so at scale, often without meaningful human oversight or compassion.

II. Efficiency Without Empathy Is a Dangerous Design

What happens when AI becomes society’s operating system — but lacks a conscience?

We get:

Bias at scale: Historical inequities encoded into data lead to discriminatory outcomes, perpetuated and made opaque by black-box models.

Dehumanization: People reduced to data points, worthiness judged by metrics, not meaning.

No accountability: Machines can’t feel guilt. When things go wrong, humans are left to clean up the damage without clear responsibility.

Moral disengagement: The more we outsource decisions to machines, the easier it becomes for humans to emotionally check out. This is compounded by "automation bias," the tendency to accept automated outputs without scrutiny.

In essence, zero-empathy AI is like a car with no brakes. It can go faster than any human decision-maker, but it doesn’t know when to stop.

III. “AI That Cares” Is Not a Sentimental Dream — It’s a Design Principle

“Caring AI” doesn’t mean teaching robots to cry or simulate affection. It means embedding care into AI’s logic, training, oversight, and objectives. This can happen in several ways:

1. Human-Centered Design

AI systems must be built with constant feedback from those most affected — especially marginalized groups. This means:

Inclusive data sourcing

Ethical audits by diverse panels

Rights-based impact assessments

2. Embedded Ethical Reasoning

We need AI models that understand contextual ethics — the difference between treating everyone the same and treating everyone fairly. Examples include:

Giving additional weight to vulnerability or hardship

Balancing efficiency with equity

Flagging morally ambiguous choices for human intervention
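To make the three examples above concrete, here is a minimal sketch of a decision rule that weighs hardship and routes borderline cases to a person instead of deciding automatically. Everything in it is an assumption made for illustration: the `Applicant` fields, the `decide` function, and the threshold values are hypothetical and do not describe any real system.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    risk_score: float  # hypothetical model-estimated risk in [0, 1]; higher is riskier
    hardship: bool     # hypothetical flag, e.g. recent illness or job loss


def decide(applicant: Applicant,
           approve_below: float = 0.4,
           deny_above: float = 0.7,
           hardship_margin: float = 0.1) -> str:
    """Return 'approve', 'deny', or 'human_review' (illustrative thresholds)."""
    deny_threshold = deny_above
    if applicant.hardship:
        # Give weight to hardship: raise the bar for an automatic denial,
        # which widens the band of cases a human will look at.
        deny_threshold = min(1.0, deny_above + hardship_margin)

    if applicant.risk_score < approve_below:
        return "approve"
    if applicant.risk_score > deny_threshold:
        return "deny"
    # Anything in between is treated as ambiguous and escalated.
    return "human_review"


print(decide(Applicant(risk_score=0.75, hardship=False)))  # automatic denial
print(decide(Applicant(risk_score=0.75, hardship=True)))   # escalated to a person
```

The design choice worth noting is that hardship does not flip the outcome by itself; it only moves the case out of the automated path, so the final judgment stays with a human.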

3. Governance with Empathy at the Core

AI regulation must recognize emotional and psychological harm, not just economic loss. Laws and standards should require:

Transparency about how decisions are made

Redress for people harmed by automated systems

Mechanisms for contesting machine decisions
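One way to picture these three requirements in software is a decision record that stores its reasons and can be reopened on request. The sketch below is illustrative only: the `DecisionRecord` class, its field names, and the status strings are assumptions invented for this example, not part of any law or standard.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DecisionRecord:
    subject_id: str
    outcome: str
    reasons: List[str]                 # transparency: why the system decided this
    status: str = "final"
    appeal_notes: List[str] = field(default_factory=list)

    def explain(self) -> str:
        """Plain-language summary the affected person can read."""
        return f"Outcome: {self.outcome}. Reasons: " + "; ".join(self.reasons)

    def contest(self, note: str) -> None:
        """Contestation: log the objection and reopen the case for a human."""
        self.appeal_notes.append(note)
        self.status = "under_human_review"


record = DecisionRecord(
    subject_id="applicant-001",
    outcome="deny",
    reasons=["income below threshold", "short credit history"],
)
print(record.explain())
record.contest("Income figure is out of date; new contract attached.")
print(record.status)
```

Redress would sit on top of a record like this: once a human reviewer overturns the outcome, the stored reasons and appeal notes show what went wrong and who was affected.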

4. Emotional Intelligence Interfaces

Where AI interacts directly with people (customer service, healthcare, therapy bots), it should be trained to recognize basic affective cues so that it avoids causing emotional harm. This doesn’t mean faking feelings; it means recognizing when a person needs human connection.
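As a deliberately crude sketch of this idea, the snippet below hands a conversation to a human when a message contains a distress cue. The cue list, the function names, and the return labels are all hypothetical; a real system would need a far more careful approach to detecting distress than keyword matching.

```python
# Hypothetical, illustrative cue list; not a validated screening tool.
DISTRESS_CUES = ("hopeless", "can't cope", "desperate", "emergency")


def needs_human(message: str) -> bool:
    """Return True if the message contains any of the assumed distress cues."""
    # Lowercase and normalize curly apostrophes so "can’t" matches "can't".
    text = message.lower().replace("’", "'")
    return any(cue in text for cue in DISTRESS_CUES)


def respond(message: str) -> str:
    """Route the message: hand off to a person or continue automatically."""
    if needs_human(message):
        return "handoff_to_human"
    return "automated_reply"


print(respond("I feel hopeless about this bill"))
print(respond("What are your opening hours?"))
```

The point of the sketch is the routing, not the detection: the system does not try to simulate sympathy, it simply steps aside when a person appears to need another person.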

IV. Why It Matters Now

We are rapidly entering a phase where AI decisions shape life trajectories. Whether a student gets into university, a person receives medical care, or a refugee is granted asylum may depend on systems that optimize for outcomes but not experience.

Without empathetic design, we risk repeating the worst patterns of bureaucracy — faceless, unfeeling systems — only now supercharged by scale and speed.

And in geopolitics and warfare, the consequences are starker: empathy-free AI weaponry risks removing the final barrier to mass harm — the human conscience.

V. Conclusion: The Case for Conscientious Code

“AI That Cares” is not a luxury — it’s a moral necessity. We must reject the myth that neutrality and efficiency are the only values worth coding. We need AI that respects, protects, and understands the human condition — even if it cannot feel it.

Otherwise, we may win the race for intelligence — and lose the soul of our society in the process.
