Pascal's Chatbot Q&As

“Did the board and senior executives knowingly expose the company to infringement liability, reputational damage, securities risk and wasted corporate assets?”

The new Adobe shareholder derivative complaint reframes the same conduct as a corporate governance failure: not merely “did the company infringe?”

Summary: The Adobe lawsuit turns AI copyright risk into a boardroom problem: shareholders allege executives knowingly exposed Adobe to liability by training SlimLM on datasets linked to pirated books while marketing its AI as responsible.

The strongest argument is the “shadow library” theory: even if training might sometimes be fair use, downloading, storing or laundering pirated books through datasets like Books3, RedPajama or SlimPajama may be a separate infringement.

For companies like Meta, Nvidia, Google, Apple, Salesforce and others, the danger is now bigger than copyright damages: internal emails, lawyer consultations, torrenting discussions and public “responsible AI” claims could become evidence of governance failure, investor deception and customer-trust collapse.

From Pirate Libraries to Board Liability: The Copyright Reckoning AI Can’t Redact

by ChatGPT-5.5

The Adobe materials mark a significant escalation in the AI copyright wars. Until now, most of the litigation has been framed as authors, artists, publishers, newspapers or music companies suing AI developers for copyright infringement. The new Adobe shareholder derivative complaint reframes the same conduct as a corporate governance failure: not merely “did the company infringe?”, but “did the board and senior executives knowingly expose the company to infringement liability, reputational damage, securities risk and wasted corporate assets?” That is why the case matters beyond Adobe. It suggests that training-data provenance is no longer just an IP issue. It is becoming a board-risk, disclosure-risk, investor-risk and customer-trust issue.

The core grievance is simple but potent. SEIU Pension Plan Master Trust, suing derivatively on behalf of Adobe, alleges that Adobe officers and directors adopted an unlawful AI strategy by using copyrighted material to develop Adobe’s SlimLM small language model. The complaint says SlimLM was pre-trained on SlimPajama-627B, which it describes as a cleaned and deduplicated derivative of RedPajama, which in turn allegedly included Books3, a dataset said to contain hundreds of thousands of pirated books. It also alleges SlimPajama includes Common Crawl material containing unauthorized copyrighted works. The complaint’s most rhetorically effective phrase is that Adobe allegedly followed an “ask forgiveness not approval” model rather than using clean or licensed data.

That claim is damaging because Adobe has publicly tried to distinguish itself from less cautious AI companies by presenting its AI strategy as commercially safe, creator-sensitive and responsible. The complaint points to Adobe’s own corporate policies, including requirements to confirm that Adobe has appropriate rights before using, copying or transferring copyrighted materials, and to advance AI in an ethical and responsible way. It also emphasizes the Audit Committee’s responsibility for enterprise risk management and legal, regulatory and ethical compliance. The grievance is therefore not only that copyrighted works were allegedly used; it is that Adobe allegedly did so while maintaining governance structures and public messaging that should have prevented or disclosed the risk.

The strongest legal idea running through the attached materials is what Laura Turini calls the “Shadow Library Strategy.” Instead of fighting only about whether model training is transformative fair use, plaintiffs separate the chain into two acts: first, the acquisition or downloading of pirated books; second, their later use in training. Even if a court accepts that training itself can be transformative, the initial act of downloading books from a pirate source can still be treated as a non-transformative infringing copy. That is the strategic wedge: “training” may be new, but piracy is old.

That wedge has already found judicial support. In Bartz v. Anthropic, Judge William Alsup held that Anthropic's use of books for training could be fair use, but he sharply distinguished that from Anthropic's alleged creation and retention of a central library of pirated books. The court wrote that Anthropic had downloaded millions of copyrighted books from pirate sites and "preferred to steal" rather than face the legal and business burden of lawful acquisition. It found no entitlement to keep a pirated central library merely because the later training use might be transformative. Anthropic later agreed to a $1.5 billion settlement with authors without admitting liability. The terms reportedly work out to about $3,000 per book across roughly 500,000 books and require destruction of the downloaded copies.

The Adobe complaint tries to import that logic directly into the boardroom. It alleges that by late 2023, the removal of Books3 due to reported copyright infringement, together with a rising wave of AI copyright lawsuits, created “red flags” that Adobe’s leadership should have acted on. It further alleges that copyright holders sued Adobe in Lyon v. Adobe in December 2025 and Kleiner v. Adobe in February 2026, after which Adobe’s stock price dropped substantially; it claims the company now faces massive litigation costs, reputational harm, customer claims and loss of goodwill. The complaint also alleges that Adobe repurchased roughly 7.2 million shares for nearly $2.5 billion while the alleged infringement risk was undisclosed, thereby turning copyright provenance into a securities and corporate-waste theory.

The quality of the arguments is mixed, but strategically sophisticated. The strongest evidence appears to be provenance-based: Adobe’s own SlimLM paper allegedly stated that SlimLM was pre-trained on SlimPajama; SlimPajama is alleged to derive from RedPajama; RedPajama is alleged to have contained Books3; and Books3 is widely associated with the Bibliotik shadow library. If that chain holds, plaintiffs have a plausible route from Adobe’s model back to pirate-source books. The attached Bloomberg Law article summarizes the same core allegation: Adobe officers and directors allegedly exposed the company to massive liability by using a dataset containing pirated literary works.

But there are weaknesses. First, dataset genealogy is not the same as proof that a specific plaintiff’s work was actually copied, retained and used in the final training run. Deduplication, filtering, dataset versioning and removal dates matter. Turini correctly notes that reconstructing the provenance of the training data and proving the presence of particular books is technically significant and not straightforward. Second, some rhetoric in the complaint is overbroad. It suggests that AI tools “cannot contain copyrighted material” unless procured by lawful means, but the law is more nuanced: copyrighted material may be used under license, under exceptions, or potentially under fair use depending on the facts. Third, the complaint’s stock-drop and CEO-departure causation theories may be difficult to prove. A company’s share price can fall for many reasons, and tying a leadership transition specifically to copyright infringement risk requires more than temporal proximity.

The derivative theory also faces a high bar. In U.S. corporate law, it is not enough to show that a company made a risky or even mistaken decision. Plaintiffs typically must show that a pre-suit demand on the board would have been futile, and they must plead something more than negligence: bad faith, disloyalty, knowing violation of law, conscious disregard of red flags, or false or misleading disclosures. The Adobe complaint tries to do exactly that by pointing to internal policies, audit committee duties, public lawsuits against peer companies, alleged failure to disclose, and repurchases at allegedly inflated prices. Whether that survives motion practice will depend on how convincingly plaintiffs connect board knowledge to the specific training-data decisions.

Still, the complaint's broader importance is hard to overstate. ChatGPT Is Eating the World describes SEIU Pension Plan Master Trust v. Narayen as the first shareholder derivative action against a public company based on AI training with copyrighted works and predicts it will not be the last. That prediction is plausible. If copyright plaintiffs can show that AI companies used pirated data after internal warnings, then shareholders can ask a separate question: why did directors allow a legally radioactive asset to become part of the company's product strategy?

Meta is the obvious parallel. In Kadrey v. Meta, Judge Vince Chhabria granted Meta summary judgment on fair use for training on that specific record, while stressing that the ruling did not mean Meta's use of copyrighted books was lawful in every circumstance. The court also said that many generative AI companies may be infringing when they use copyrighted works without permission, and that the plaintiffs had simply failed to develop the right market-harm theory. Public reporting on unsealed materials has been damaging: authors alleged that Meta used LibGen despite internal warnings that it was pirated, that the issue was escalated to Mark Zuckerberg, and that employees worried about torrenting from corporate laptops. Other reporting cited internal discussions about avoiding public references to a dataset "we know to be pirated," removing copyright headers or metadata, and using "mitigations" around LibGen. Even where Meta won a fair-use ruling on training, the optics and governance evidence remain highly relevant for future cases, investors and regulators.

Nvidia presents another version of the same risk. Reporting on Nazemian v. Nvidia says plaintiffs alleged that Nvidia personnel contacted Anna's Archive for high-speed access after internal warnings about its illegal nature, and that management allegedly green-lit the approach. Nvidia has pushed back, arguing that alleged discussions or evaluations of a source do not prove that Nvidia downloaded, copied or used plaintiffs' specific works. That highlights an important evidentiary hurdle for plaintiffs: suspicious internal discussions are not always proof of infringement. But from a governance perspective, the fact that such communications exist at all is the problem. Once engineers, lawyers or executives discuss whether to use pirate sources, the question becomes not only copyright liability but whether the company knowingly accepted a material legal risk.

Google is also in the frame. In January 2026, Reuters reported that Hachette and Cengage sought to join a proposed class action against Google, alleging that Google copied textbooks and literary works without permission to train Gemini and other AI systems. Google had no immediate comment in that report. The significance for Google is not merely damages exposure. It is that the company sits at the intersection of search, cloud, AI, education and publishing markets. If discovery reveals internal knowledge of unauthorized source material, the issue could move from an author-compensation dispute to a market-power, unfair-competition and enterprise-customer trust problem.

Other companies are exposed as well. Apple has been sued by authors alleging it used pirated books to train OpenELM. Salesforce has been sued by authors alleging its xGen models were trained on pirated books, with plaintiffs reportedly contrasting those allegations against Marc Benioff’s public criticism of stolen AI training data. Databricks and MosaicML have faced claims involving Books3 and models such as MPT and DBRX. These cases differ on facts, models, datasets and procedural posture, but they share a pattern: open-source dataset laundering, shadow-library provenance, internal awareness, public “responsible AI” claims, and difficulty proving the exact path from copyrighted work to trained model.

The lesson for AI companies is brutal: the litigation target is moving upstream and sideways. Upstream, plaintiffs are focusing on acquisition, storage, torrenting, seeding, dataset assembly and retained libraries, not just outputs or training. Sideways, claims are spreading from copyright infringement to securities fraud, fiduciary duty, waste of corporate assets, consumer protection, customer indemnity and procurement risk. The longer a company has marketed AI as responsible while quietly relying on questionable data, the worse the documentary record may look.

This also changes what “AI governance” must mean. It cannot be a glossy ethics page, a model card with vague dataset categories, or a general statement that training data came from “publicly available” sources. Companies need auditable training-data inventories, source-level provenance records, documented license analysis, retention and deletion policies, board-level reporting of high-risk datasets, escalation records that do not disappear into privilege theatre, and controls over open-source dataset ingestion. They also need to stop treating “open dataset” as a synonym for “lawful dataset.” Books3, LibGen, Anna’s Archive and Common Crawl controversies show that the legality of the upstream source can poison downstream claims.
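
To make "controls over open-source dataset ingestion" less abstract, here is a minimal sketch, assuming a hypothetical in-house lineage map in which every model or dataset lists the sources it was derived from. The edges simply restate the chain alleged in the Adobe complaint; `LINEAGE`, `FLAGGED_SOURCES` and `flagged_paths` are illustrative names, not any company's actual tooling.

```python
# Hypothetical lineage map: each model or dataset points to the sources it was
# derived from. These edges restate the chain ALLEGED in the Adobe complaint;
# they are illustrative, not verified facts.
LINEAGE = {
    "SlimLM": ["SlimPajama-627B"],
    "SlimPajama-627B": ["RedPajama"],
    "RedPajama": ["Books3", "Common Crawl"],
}

# Hypothetical blocklist of sources a provenance review has marked as high risk.
FLAGGED_SOURCES = {"Books3", "LibGen", "Anna's Archive"}


def flagged_paths(node, lineage, flagged, path=None):
    """Return every upstream path from `node` that reaches a flagged source.

    Each result is the full chain (model -> ... -> flagged source), which is
    what an audit record would need, not just a yes/no answer.
    """
    path = (path or []) + [node]
    results = []
    if node in flagged:
        results.append(path)
    for parent in lineage.get(node, []):
        if parent not in path:  # guard against accidental cycles in the map
            results.extend(flagged_paths(parent, lineage, flagged, path))
    return results


if __name__ == "__main__":
    for chain in flagged_paths("SlimLM", LINEAGE, FLAGGED_SOURCES):
        print(" -> ".join(chain))
```

Run against the alleged chain, this prints `SlimLM -> SlimPajama-627B -> RedPajama -> Books3`. A hit like this only establishes genealogy. It does not show that any particular work survived deduplication, filtering or versioning, which is exactly the evidentiary gap plaintiffs still have to close.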

For publishers and rightsholders, the Adobe complaint is valuable because it expands the audience for the argument. The message is no longer only “authors deserve compensation.” It is also “investors deserve disclosure,” “customers deserve lawful products,” “boards must manage AI supply-chain risk,” and “licensed data markets exist and were bypassed.” Turini’s point that Adobe allegedly paid certain rights holders for video training data is especially important: where a market exists, bypassing it through pirate data becomes harder to defend as necessity rather than opportunism.

The broader conclusion is that AI companies may have confused technical feasibility with institutional legitimacy. They could ingest shadow libraries, so some did. They could clean and deduplicate datasets, so some treated laundering as absolution. They could argue fair use, so some behaved as though fair use had already been decided in their favor. But courts are now separating training from acquisition, plaintiffs are learning how to plead provenance, journalists are surfacing internal communications, and shareholders are beginning to ask whether boards knowingly built billion-dollar products on legally unstable foundations.

The Adobe case may or may not succeed. Some allegations may be overdrawn, and plaintiffs still have to prove knowledge, causation, damages and the presence of specific works in relevant datasets. But as a signal, it is powerful. The next phase of AI copyright litigation will not only ask whether a model copied a book. It will ask who inside the company knew, who approved it, what lawyers were asked, what was disclosed to investors, what was promised to customers, and whether “responsible AI” was a governance system or a marketing costume.

·

20 JAN

When “Shadow Libraries” Meet Big Tech: The Anna’s Archive–NVIDIA Collision and Its Blast Radius

·

5 AUGUST 2024

Question 1 of 3 for ChatGPT-4o: Please read the news article "Leaked Documents Show Nvidia Scraping ‘A Human Lifetime’ of Videos Per Day to Train AI" and tell me what it says

·

21 JUNE 2025

YouTube, AI, and the Ethics of Consent — Google’s Gemini and Veo 3 Under Scrutiny

·

20 MARCH 2025

Question 1 of 3 for ChatGPT-4o: Please read the article “The Unbelievable Scale of AI’s Pirated-Books Problem - Meta pirated millions of books to train its AI. Search through them here” and tell me what it says. List the most surprising, controversial and valuable statements made.

·

14 JANUARY 2025

Question 1 of 3 for ChatGPT-4o: Please read the article "Judge Chhabria grants Kadrey, represented by David Boies, leave to amend to file Third Amended Consolidated Complaint. Adds DMCA CMI claim, CA Computer Fraud Act claim. Plus, Kadrey gets to depose Meta about seeding of works via torrents.

·

27 JULY 2025

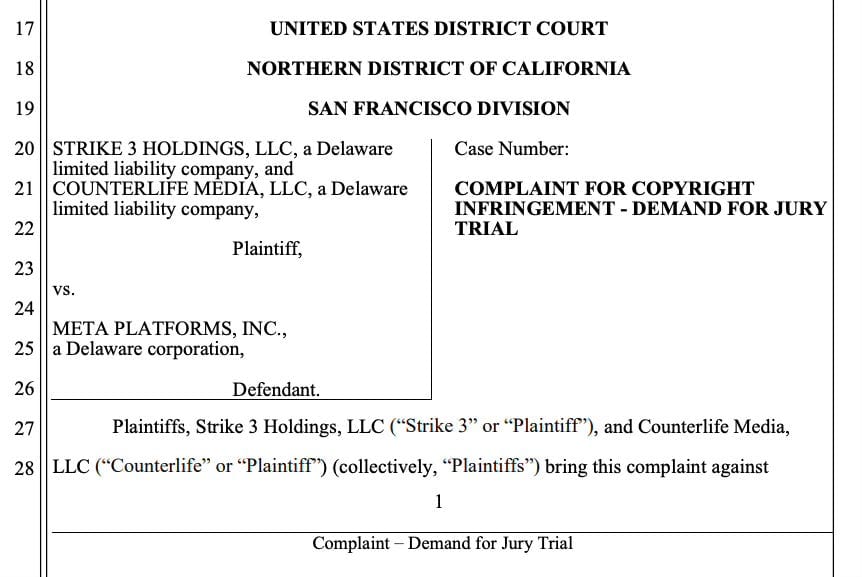

Sued by the Stream: How Strike 3's Lawsuit Could Expose Meta's AI Training Secrets

·

14 APRIL 2025

Asking AI services: Please analyze the TorrentFreak article “Meta AI ‘Piracy’ Lawsuit: Publishers and Professors Challenge Fair Use Defense” and the associated Amicus Brief from the International Association of Scientific, Technical and Medical Publishers

·

8 MAR

“Fair Use by Technical Necessity” — or The Piracy Protocol Defense (Meta’s Newest Attempt to Re-Label Seeding as Scholarship)

·

6 NOVEMBER 2023

Question 1 of 6 for ChatGPT-4: Please analyze the Plaintiffs vs META lawsuit and list everything META is being accused of

·

29 NOVEMBER 2023

Information provided to Claude: “AI Gets a Legal Gift for Thanksgiving” and Order Granting Motion to Dismiss

·

5 MARCH 2025

Asking ChatGPT-4o: Please read the “Report on Pirated Content Used in the Training of Generative AI” and the associated LinkedIn post and tell me what the key messages are and how AI makers should be responding to these issues.

·

10 JANUARY 2025

·

30 SEPTEMBER 2024

·

26 SEPTEMBER 2024

Question 1 of 2 for ChatGPT-4o: Please read the article “U.S. Court Orders LibGen to Pay $30m to Publishers, Issues Broad Injunction” and the associated judgment and tell me what the key messages (and key grievances) are.

·

6 JANUARY 2025

Question 1 of 3 for ChatGPT-4o: Please read the "Google GenAI Copyright Litigation" complaint and tell me what the main grievances are and what kind of evidence is being presented. Describe the nature of the evidence in great detail.

·

7 FEBRUARY 2025

Asking AI Services: Please read the articles "“Torrenting from a corporate laptop doesn’t feel right”: Meta emails unsealed" and "‘Meta Torrented over 81 TB of Data Through Anna’s Archive, Despite Few Seeders’" and explain what happened, whether this is bad behaviour or not and what a) the judge should do and b) what the AI maker(s) should do to prevent…