Pascal's Chatbot Q&As

By framing the unauthorized ingestion of vocal data not as a copyright violation of a “work,” but as a biometric violation of a “person,” these plaintiffs are attempting to bypass the subjective “transformative use” tests of copyright law in favor of the strict liability standards found in biometric privacy statutes.

The Biometric Frontier: Leveraging Privacy and Personality Rights to Navigate the Generative AI Litigation Landscape

by Gemini 3.0, Deep Research. Warning, LLMs may hallucinate!

The emergence of generative artificial intelligence (GenAI) has precipitated a crisis in traditional intellectual property frameworks, forcing a confrontation between the rapid technological advancement of machine learning and the foundational rights of human creators. For decades, the legal battleground for media ingestion and reproduction was defined by the Copyright Act of 1976 and the subsequent Digital Millennium Copyright Act (DMCA). However, as AI developers increasingly rely on the “Fair Use” doctrine as a categorical defense for the unauthorized scraping of artistic works, creators have begun to pivot toward a more potent and personal legal instrument: biometric privacy. This shift is most visible in recent litigation involving independent musicians and singer-songwriters who are challenging the operational foundations of AI music platforms like Suno, Udio, and Google’s Lyria.1 By framing the unauthorized ingestion of vocal data not as a copyright violation of a “work,” but as a biometric violation of a “person,” these plaintiffs are attempting to bypass the subjective “transformative use” tests of copyright law in favor of the strict liability standards found in biometric privacy statutes.1

The Evolution of the Musician’s Claim: From Copyright to Biometry

The current wave of litigation is led by figures such as Chicago-based songwriter David Woulard, who has initiated proposed class actions against major AI developers including Suno Inc. and Uncharted Labs Inc. (Udio).1 Unlike previous suits that focused on the reproduction of musical compositions or sound recordings, these claims allege the illicit collection and exploitation of “voiceprints”.1 This distinction is critical; while copyright protects the creative expression fixed in a medium, biometric law protects the physiological identifiers that are unique to the individual’s physical existence. The argument posits that a musician’s recorded voice contains “distinctive vocal tags” that function as protectable biometric identifiers under laws like the Illinois Biometric Information Privacy Act (BIPA).1

This strategy is informed by the massive settlements previously extracted from technology giants under BIPA, such as Facebook’s $650 million settlement for facial recognition practices and TikTok’s $92 million settlement for similar privacy violations.1 For musicians, the appeal of BIPA lies in its unique provisions: it grants a private right of action, allowing individuals to sue directly without state intervention, and it does not recognize “fair use” as a defense.1 In the eyes of the law, the violation is the unconsented act of collection, regardless of whether the resulting AI output is transformative or commercially competitive.1

The Technical Threshold: Audio Recording vs. Voiceprint Analysis

A central hurdle in these cases is the legal and technical distinction between a general audio recording and a “voiceprint.” AI developers often argue that their models ingest data in a way that creates a “composite” of many voices, which they claim does not constitute an identifiable biometric marker.1 However, the plaintiffs contend that the software performs “acoustic analysis” on recordings—measuring pitch patterns, vocal timbre, formant frequencies, and spectral envelope characteristics—to create a mathematical profile of a specific human’s vocal anatomy.2
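A deliberately simplified Python sketch can make the idea of a “mathematical profile” concrete. It does not describe how any defendant's software actually works. It synthesizes toy signals instead of real recordings. It estimates pitch by autocorrelation and uses the spectral centroid as a crude stand-in for timbre and spectral envelope. It then compares the resulting two-number profiles. All function names and parameters are hypothetical. Production speaker-recognition systems rely on far richer features and learned embeddings.

```python
import math

SAMPLE_RATE = 8_000  # samples per second; an assumption for these toy signals


def synth_voice(f0, harmonic_amps, seconds=0.05):
    """Toy 'voice': harmonics of f0 with the given amplitudes (not real audio)."""
    n = round(SAMPLE_RATE * seconds)
    return [
        sum(a * math.sin(2 * math.pi * f0 * (h + 1) * t / SAMPLE_RATE)
            for h, a in enumerate(harmonic_amps))
        for t in range(n)
    ]


def estimate_f0(signal, fmin=80.0, fmax=400.0):
    """Pitch: the lag (within a plausible vocal range) with maximal autocorrelation."""
    lags = range(int(SAMPLE_RATE / fmax), int(SAMPLE_RATE / fmin) + 1)

    def autocorr(lag):
        return sum(signal[i] * signal[i + lag] for i in range(len(signal) - lag))

    return SAMPLE_RATE / max(lags, key=autocorr)


def spectral_centroid(signal):
    """Magnitude-weighted mean frequency of a naive DFT: a crude timbre proxy."""
    n = len(signal)
    weighted, total = 0.0, 0.0
    for k in range(1, n // 2):  # skip DC and Nyquist bins
        re = sum(x * math.cos(2 * math.pi * k * t / n) for t, x in enumerate(signal))
        im = sum(x * math.sin(2 * math.pi * k * t / n) for t, x in enumerate(signal))
        mag = math.hypot(re, im)
        weighted += (k * SAMPLE_RATE / n) * mag
        total += mag
    return weighted / total


def voice_profile(signal):
    """A two-number 'profile'; real systems use far richer features."""
    return (estimate_f0(signal), spectral_centroid(signal))


def profile_distance(p, q):
    """Mean relative difference between profiles (0.0 means identical)."""
    return sum(abs(a - b) / max(abs(a), abs(b)) for a, b in zip(p, q)) / len(p)


if __name__ == "__main__":
    singer_a_take1 = synth_voice(200, [1.0, 0.5, 0.25])
    singer_a_take2 = synth_voice(200, [2.0, 1.0, 0.5])  # same voice, sung louder
    singer_b = synth_voice(160, [0.3, 1.0, 0.8])
    a1, a2, b = map(voice_profile, (singer_a_take1, singer_a_take2, singer_b))
    print(a1, b)
    print(profile_distance(a1, a2))  # ~0: loudness does not change the profile
    print(profile_distance(a1, b))   # clearly larger: a different "voice"
```

The sketch shows that the profile is unaffected by loudness. Two takes by the same synthetic singer map to the same numbers, while a different singer produces a different profile. That stability is the property that lets a voiceprint function as an identifier.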

A related technique, speaker diarization, uses such features to segment a recording by speaker (“who spoke when”). This allows software to distinguish between different voices and to build individual profiles based on unique vocal qualities.8 When this technology is used to train generative models that can then “clone” or “reproduce” a specific artist’s vocal style, the harm shifts from artistic displacement to an invasion of the “private domain”.2 The legal strength of this argument is reinforced by the fact that a voiceprint, unlike a password or a Social Security number, cannot be reset. Once an individual’s vocal anatomy is encoded into a neural network’s parameters, they have permanently lost control over their biological instrument.2

The Biometric Privacy Argument: Assessing Legal Strength and Risks

The strength of the biometric privacy argument rests on its ability to anchor liability at the moment of data ingestion, or the “input stage,” rather than the output stage where generative AI produces a final product.2 This avoids the “doctrinal void” often found in Right of Publicity cases, where a plaintiff must prove that an AI output “sounds like them” to establish harm.2 Under a biometric framework, the extraction of data is itself the trespass.2

Jurisdictional Hurdles and the Illinois Advantage

Currently, the most fertile ground for these claims is Illinois. In Cothron v. White Castle System, Inc. (2023), the Illinois Supreme Court held that a BIPA violation occurs “each and every time” biometric data is unlawfully collected or transmitted, not only the first time.10 This “per-scan” theory of recovery significantly elevates the financial risk for AI companies. Each violation carries liquidated damages of $1,000 if negligent or $5,000 if intentional or reckless (or actual damages, if greater).8

However, AI developers are expected to mount robust defenses based on the definition of “biometric information.” They may argue that vocal data used for training is too abstract to verify a specific individual’s identity, thereby falling outside the scope of BIPA.1 Furthermore, plaintiffs must demonstrate a clear connection to Illinois to pursue these claims, which creates a jurisdictional barrier for international creators or those residing in states with weaker protections.1 While Texas offers comparable protections under its Capture or Use of Biometric Identifier Act (CUBI), it lacks a private right of action, requiring the state’s attorney general to initiate enforcement.2 Washington also possesses a biometric statute, but it specifically excludes “data generated from audio recordings” from its definition of biometric identifiers, leaving musicians there without a clear remedy.2

The Seventh Circuit and the Damages Landscape

The valuation of these biometric claims is currently in flux due to ongoing litigation in the Seventh Circuit Court of Appeals. In 2024, the Illinois legislature amended BIPA to provide that a private entity collecting the same biometric data from the same person using the same method commits only a single violation, entitling that person to at most one recovery.10 The Seventh Circuit is currently deliberating whether this amendment applies retroactively to the hundreds of cases that were pending when it took effect.10 If retroactivity is denied, AI companies could face “eye-popping” liability figures reaching into the billions. Under that outcome, every interaction between an artist’s recording and the training algorithm could be counted as a separate violation.9
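Simple arithmetic shows what is at stake in the retroactivity question. The Python sketch below computes headline statutory exposure under both readings. The class size (10,000 artists) and the number of collections per artist (50) are invented for illustration. The sketch assumes every collection uses the same method. It also ignores actual damages, attorneys' fees, and the discretion courts retain over the size of awards, so the results are illustrations rather than predictions.

```python
# Liquidated damages per violation under BIPA (740 ILCS 14/20), as described above.
NEGLIGENT_PER_VIOLATION = 1_000
RECKLESS_PER_VIOLATION = 5_000


def bipa_exposure(class_members: int, collections_per_member: int,
                  per_violation: int, per_scan: bool) -> int:
    """Headline statutory exposure, before any judicial discretion.

    per_scan=True  -> per-scan accrual (Cothron, 2023): every collection is a violation.
    per_scan=False -> 2024 amendment: repeated collection of the same identifier
                      from the same person by the same method is one violation.
    """
    violations_per_member = collections_per_member if per_scan else 1
    return class_members * violations_per_member * per_violation


if __name__ == "__main__":
    # Hypothetical class: 10,000 Illinois artists, each recording ingested 50 times.
    members, collections = 10_000, 50
    for label, rate in (("negligent", NEGLIGENT_PER_VIOLATION),
                        ("reckless", RECKLESS_PER_VIOLATION)):
        per_scan = bipa_exposure(members, collections, rate, per_scan=True)
        single = bipa_exposure(members, collections, rate, per_scan=False)
        print(f"{label}: per-scan ${per_scan:,} vs single-recovery ${single:,}")
```

With these assumed inputs, the negligent tier yields $500,000,000 under per-scan accrual versus $10,000,000 under single recovery. The reckless tier yields $2,500,000,000 versus $50,000,000. The fiftyfold gap is exactly the number of assumed collections per artist.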

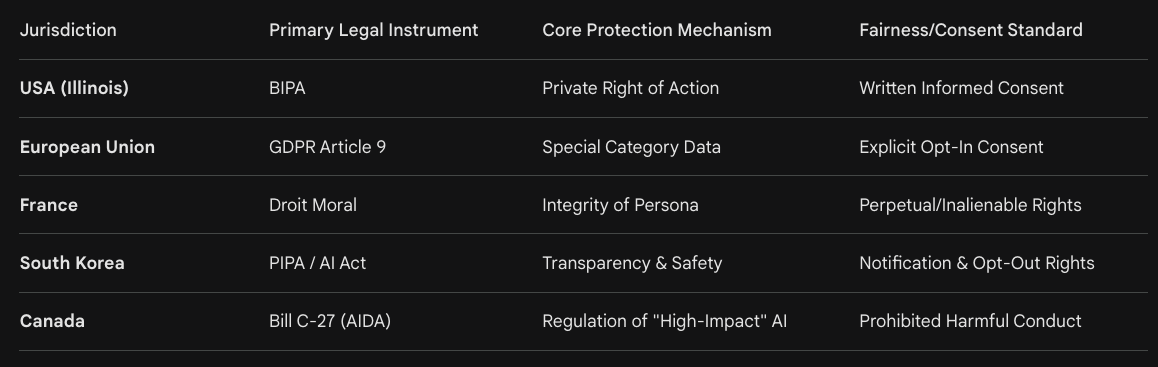

International Legal Instruments: A Global Perspective for Creators

While the United States remains a focal point for AI litigation, creators in other jurisdictions can leverage robust data protection and personality rights frameworks to achieve similar goals of bypassing the Fair Use debate.

The European Union and the GDPR’s Biometric Shield

In the European Union, the General Data Protection Regulation (GDPR) treats biometric data as a “special category” of information under Article 9.11 Voice data used for the purpose of uniquely identifying a natural person falls squarely within this protection.11

Explicit Consent Requirement: Unlike ordinary personal data, which can often be processed on a “legitimate interest” basis, Article 9 biometric data may be processed only if one of the exceptions in Article 9(2) applies, most commonly the data subject’s “explicit consent”.12 In practice, this means that AI companies scraping European datasets would generally need to obtain an unambiguous opt-in from every individual whose voiceprint is extracted.12

The Right to Erasure: Under the “Right to be Forgotten,” European creators can demand the deletion of their speech data if consent is withdrawn.13 For AI developers, this creates a technical crisis: if a model is trained on a dataset that includes unconsented voiceprints, the creator could theoretically force the developer to “unlearn” or retrain the entire model to remove their biological influence.2

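Purpose Limitation: GDPR requires that data collected for one specified purpose (e.g., customer service training) not be further processed in a way that is incompatible with that purpose (e.g., training a commercial generative model). Doing so requires a fresh legal basis, which for biometric data will usually mean new consent.12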
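The three obligations above interact. Consent is tied to a specific purpose, and withdrawing it has consequences for models that have already been trained. The Python sketch below models that interaction under heavy simplifying assumptions. It treats explicit consent as the only lawful basis, and it uses hypothetical subject IDs, purpose labels, and model names. It reports which models were trained on a withdrawn subject’s data but leaves open whether “unlearning” or retraining is legally required. It illustrates the logic only and is not a compliance tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field

GENERATIVE_TRAINING = "train_generative_model"  # hypothetical purpose label


@dataclass
class ConsentLedger:
    """Toy record of explicit, purpose-specific consent for voice data.

    Hypothetical model only: it ignores the other Article 9(2) exceptions,
    proof-of-consent requirements, and everything else a real review would check.
    """

    # (subject_id, purpose) pairs with explicit consent currently in force
    consents: set[tuple[str, str]] = field(default_factory=set)
    # subject_id -> models whose training data included that subject's voice data
    trained_on: dict[str, set[str]] = field(default_factory=dict)

    def grant(self, subject: str, purpose: str) -> None:
        self.consents.add((subject, purpose))

    def may_process(self, subject: str, purpose: str) -> bool:
        # Purpose limitation: consent for one purpose does not carry over to another.
        return (subject, purpose) in self.consents

    def record_training(self, subject: str, model: str) -> None:
        # Refuse to log training that lacks consent for that specific purpose.
        if not self.may_process(subject, GENERATIVE_TRAINING):
            raise PermissionError(f"no explicit consent from {subject} for {GENERATIVE_TRAINING}")
        self.trained_on.setdefault(subject, set()).add(model)

    def withdraw_and_erase(self, subject: str) -> set[str]:
        """Withdraw every consent for subject; return models that may need retraining."""
        self.consents = {(s, p) for (s, p) in self.consents if s != subject}
        return self.trained_on.pop(subject, set())


if __name__ == "__main__":
    ledger = ConsentLedger()
    ledger.grant("singer-42", "customer_service_training")
    print(ledger.may_process("singer-42", GENERATIVE_TRAINING))  # False
    ledger.grant("singer-42", GENERATIVE_TRAINING)
    ledger.record_training("singer-42", "voice-model-v1")
    print(ledger.withdraw_and_erase("singer-42"))  # {'voice-model-v1'}
```

Consent for customer-service training does not authorize generative training. Withdrawal then surfaces the model built on the singer’s data, which is the point at which the “unlearning” problem described above begins.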

Moral Rights and the French “Droit Moral”

European creators, particularly in France and Germany, benefit from the tradition of “Moral Rights” (Droit Moral), which protect the non-pecuniary interests of an author and are often treated as an extension of their personality.14

Right of Integrity: This allows a creator to object to any “distortion, mutilation, or modification” of their work that would harm their honor or reputation.15 AI manipulation of an artist’s voice to perform “new” or “offensive” content could be challenged as a violation of this integrity.15

Perpetual and Inalienable: In France, moral rights are perpetual and can be defended by an artist’s heirs even after the work enters the public domain.15 This creates a permanent legal basis to block AI “resurrections” of deceased artists that would otherwise be permissible under the more limited US copyright term.15

The Asia-Pacific Landscape: South Korea’s PIPA and the AI Act

South Korea has emerged as a proactive regulator of AI. Its National Assembly passed the AI Framework Act in December 2024, and the law took effect in January 2026.17 Even before that Act, the Korean Personal Information Protection Act (PIPA) posed significant hurdles for AI training.

Transparency Mandate: Under the AI Framework Act, AI operators must notify users in advance when a product or service uses “high-impact” or generative AI, and must clearly label deepfake content.17

Right to Refuse Automated Decisions: PIPA grants data subjects the right to request an explanation of automated decisions and, in some instances, the right to refuse them entirely.17

Extraterritoriality: Like the GDPR, Korea’s PIPA applies to overseas business operators if their activities impact the Korean market or its users.17

Canada’s Bill C-27 and the AIDA Framework

Canada attempted to modernize its privacy regime through Bill C-27, which would have introduced the Artificial Intelligence and Data Act (AIDA) and the Consumer Privacy Protection Act (CPPA).19 The bill died on the Order Paper when Parliament was prorogued in January 2025, so the measures below describe what it proposed rather than law in force.

High-Impact Systems: AIDA would have specifically targeted “high-impact” AI systems, including those used for the “biometric identification of natural persons”.20

Algorithmic Harm Prohibition: The Act would have prohibited using illegally obtained personal information to develop AI systems and provided for penalties where an AI system’s use causes serious harm.19

Independent Tribunal: Bill C-27 would have created a new Personal Information and Data Protection Tribunal to hear appeals and issue penalties, aligning Canada with global enforcement standards.19

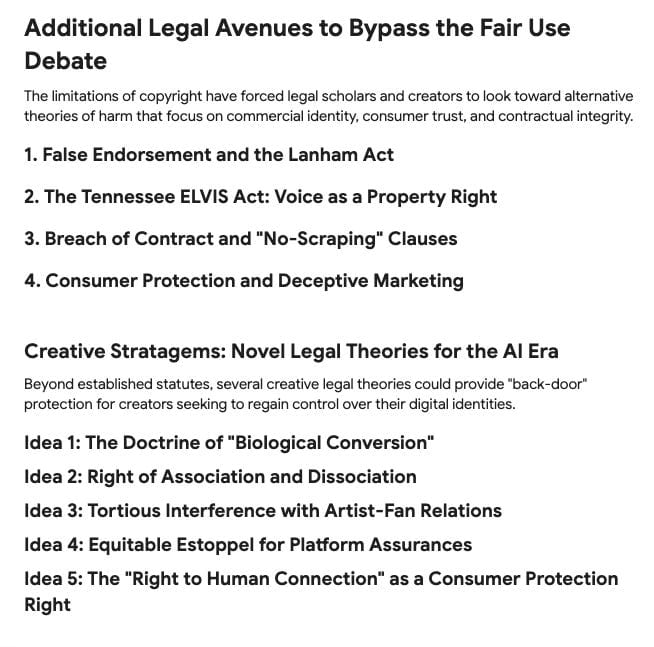

Additional Legal Avenues to Bypass the Fair Use Debate

The limitations of copyright have forced legal scholars and creators to look toward alternative theories of harm that focus on commercial identity, consumer trust, and contractual integrity.

1. False Endorsement and the Lanham Act

The Lanham Act, or the federal Trademark Act, offers a powerful tool against AI voice cloning through the doctrine of “false endorsement” under Section 43(a).23

Consumer Confusion: Unlike copyright, which asks if the work was “copied,” a Lanham Act claim asks if the use of an AI-generated voice “falsely suggests sponsorship or approval” by the original artist.23

Distinctive Persona: In Midler v. Ford Motor Co., the Ninth Circuit held that deliberately imitating a widely known singer’s distinctive voice to sell a product is actionable under California law. In Waits v. Frito-Lay, Inc., the same court upheld both that claim and a Lanham Act false endorsement claim based on a vocal imitation.23 These claims turn on identity and implied endorsement rather than copying, so they do not depend on copyright’s transformative-use analysis.

Sensory Trademarks: High-profile figures like Matthew McConaughey have moved to register iconic catchphrases and vocal styles as trademarks, providing a specific deterrent against unauthorized digital exploitation.24

2. The Tennessee ELVIS Act: Voice as a Property Right

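Tennessee’s Ensuring Likeness Voice and Image Security (ELVIS) Act, effective July 1, 2024, added “voice” to the property right in name, photograph, and likeness that Tennessee already recognized. It is widely described as the first US state law written specifically to address AI voice cloning.26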

Primary Purpose Liability: The Act creates civil liability for those who make available technology whose “primary purpose or function” is the production of an individual’s likeness or voice without authorization.27 This shifts the focus from the user of the AI to the developer of the software.

Extraterritorial Reach: The ELVIS Act protects anyone—living or dead—regardless of where the violation occurs, provided there is a connection to the state’s artistic heritage.27

Class A Misdemeanor: Unlike typical IP disputes, the ELVIS Act allows for criminal enforcement against offenders, significantly raising the stakes for AI startups.27

3. Breach of Contract and “No-Scraping” Clauses

For digital platforms hosting creator content, the most immediate shield is often the Terms of Service (ToS). Contract law and copyright law operate on different thresholds; a contract case hinges on whether the defendant “agreed” to the terms, not whether the use is transformative.30

Contractual Rights: By including explicit “No Scraping” or “No-AI-Training” clauses, publishers create contractual obligations they can enforce directly against parties that accept the terms and then violate them.30

Technical Controls: Ignoring the Robots Exclusion Protocol (robots.txt) or circumventing other technical measures to scrape data can be used as evidence of a breach of contract. It may also support a claim under the Computer Fraud and Abuse Act (CFAA) in the US, although US courts have read the CFAA narrowly where the scraped data is publicly accessible.30
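A crawler that honors the Robots Exclusion Protocol checks robots.txt before fetching a page, and Python’s standard-library `urllib.robotparser` performs that check. The sketch below parses a hypothetical robots.txt and reports whether a given crawler may fetch a URL. The site, the paths, and the `ExampleAIBot` and `SearchBot` user agents are invented for illustration. Note that robots.txt is advisory. The check shows what a compliant crawler would have been told, which is the kind of record a publisher might point to. It is not a technical barrier in itself.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a music-hosting site (illustrative only).
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def may_fetch(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse in memory, no network access
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    track = "https://music.example/tracks/123"
    print(may_fetch(ROBOTS_TXT, "ExampleAIBot", track))  # False: this bot is barred everywhere
    print(may_fetch(ROBOTS_TXT, "SearchBot", track))     # True: only /private/ is off-limits
    print(may_fetch(ROBOTS_TXT, "SearchBot", "https://music.example/private/stems"))  # False
```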

4. Consumer Protection and Deceptive Marketing

Creators can also find recourse through the Federal Trade Commission (FTC) and state consumer protection laws.

Deceptive Practices: The FTC has warned that using synthetic voices or deepfakes to mislead consumers about an influencer’s endorsement falls under deceptive marketing.32

Transparency and Disclosure: Laws like the one in Utah require businesses to provide clear disclosures when they use generative AI in consumer-facing roles.33 Failure to disclose that a “voice” is AI-generated can be a hook for class action litigation based on consumer fraud rather than IP theft.

Creative Stratagems: Novel Legal Theories for the AI Era

Beyond established statutes, several creative legal theories could provide “back-door” protection for creators seeking to regain control over their digital identities.

Idea 1: The Doctrine of “Biological Conversion”

In traditional tort law, “conversion” is an intentional exercise of dominion or control over another’s personal property. Creators could argue that their vocal anatomy and unique physiological markers are their biological property. By extracting this data to create a “digital twin,” an AI company is arguably “converting” the biological essence of the artist for its own profit. This theory treats the “voiceprint” as a physical asset rather than intellectual property, potentially bypassing the federal preemption of the Copyright Act.

Idea 2: Right of Association and Dissociation

By analogy to the freedom of association protected by the First Amendment (which restrains government rather than private companies) and to various common law privacy rights, individuals could claim a right to control who they are associated with. An AI model that mimics a singer’s voice can force an “unwanted association” between the artist and content they find morally or politically objectionable. Unlike defamation, which requires proving a false statement of fact, the “Right of Dissociation” would focus on the dignitary harm caused by the unauthorized algorithmic linking of a person’s biological identity to third-party generated content.

Idea 3: Tortious Interference with Artist-Fan Relations

AI companies often argue that their models create “new” music. However, if an AI music generator uses an artist’s voice to produce “sound-alikes” that compete directly with the artist’s new releases, the artist could sue for “tortious interference with prospective economic advantage.” The argument would be that the AI developer is intentionally interfering with the artist’s ability to monetize their unique vocal identity by flooding the market with low-cost, unauthorized simulations that satiate the audience’s demand for that artist’s specific sound.

Idea 4: Equitable Estoppel for Platform Assurances

Many creators upload content to platforms that initially promise “safe harbor” or a “community-first” approach. If a platform later pivots to selling its users’ data to AI training firms without explicit notification, creators could argue “equitable estoppel.” They could claim they only provided their data (the recordings) based on a reasonable reliance that it would be used for “listening” and “distribution,” not for the “extraction of biological identifiers.”

Idea 5: The “Right to Human Connection” as a Consumer Protection Right

A novel consumer-side argument could be that the unauthorized use of human biometrics to “pass off” AI content as human-made violates the consumer’s “Right to Human Connection.” This would frame the harm not just to the creator, but to the public, who are being deceived by a “biological masquerade.” By requiring AI companies to prove the “humanity” of their outputs or pay a “humanity tax” to the original creators whose data enabled the simulation, this framework would re-center the value of the original human performance.

Conclusion: Toward a Biometric-Centric Identity Protection Framework

The shift from copyright-based litigation to biometric and personality-based claims reflects a fundamental change in how the law views the “creator.” As generative AI continues to decouple artistic expression from human labor, the only remaining “scarcity” is the individual personhood of the artist. The Illinois BIPA suits and the Tennessee ELVIS Act represent the first stages of a global movement to define “informational self-determination” as a fundamental right that survives public distribution.2

For musicians and other creators, the strategy of bypassing the Fair Use debate through biometric privacy is not just a legal tactic; it is a defensive maneuver to prevent the permanent “vocal anatomy extraction” and commodification of their human existence.2 While jurisdictional coherence remains a challenge, the combined weight of the GDPR, the ELVIS Act, and the Lanham Act suggests that AI companies can no longer rely on a “transformative use” defense to justify the unauthorized ingestion of the human spirit. The future of creative law will likely be found in this intersection of privacy, property, and personhood, where the goal is not just to protect the work, but to protect the person who made it.

Works cited

Musicians Test AI Litigation Waters With Biometric Privacy Claim, accessed March 24, 2026, https://news.bgov.com/ip-law/musicians-test-ai-litigation-waters-with-biometric-privacy-claim

THIS PROGRAM IS INTENDED FOR INFORMATIONAL PURPOSES ..., accessed March 24, 2026, https://naras.a.bigcontent.io/v1/static/Derek_Song_ELI_2026_Runner%20Up_Essay

PRESS RELEASE: Independent Musicians Sue Google Over Creation and Distribution of AI Music, accessed March 24, 2026, https://www.loevy.com/independent-musicians-sue-google-over-ai-music/

BIPA AI Class Action | Loevy + Loevy, accessed March 24, 2026, https://www.loevy.com/class-actions/artificial-intelligence/bipa-ai-class-action/

ACLU v. Clearview AI | American Civil Liberties Union, accessed March 24, 2026, https://www.aclu.org/cases/aclu-v-clearview-ai

Facial Emotion Detection for Smart Human-Computer Interaction - Scribd, accessed March 24, 2026, https://www.scribd.com/document/985898677/Facial-Emotion-Detection-for-Smart-Human-Computer-Interaction

Microsoft Teams class action claims company illegally collects voice data, accessed March 24, 2026, https://topclassactions.com/lawsuit-settlements/lawsuit-news/microsoft-teams-class-action-claims-company-illegally-collects-voice-data/

AI Transcription Tools Give Rise to BIPA Claims - Lewis Rice, accessed March 24, 2026, https://www.lewisrice.com/publications/ai-transcription-tools-give-rise-to-bipa-claims

Microsoft Teams Lawsuit: BIPA Class Action Targets AI Voice Data - UC Today, accessed March 24, 2026, https://www.uctoday.com/unified-communications/microsoft-teams-lawsuit-bipa-voice-data/

AI Note-Takers, Biometric Privacy, and the Battle Over BIPA ..., accessed March 24, 2026, https://www.sgrlaw.com/client-alerts/ai-note-takers-biometric-privacy-and-the-battle-over-bipa-damages-what-businesses-need-to-know-now/

Guidelines 02/2021 on virtual voice assistants Version 2.0 - European Data Protection Board, accessed March 24, 2026, https://www.edpb.europa.eu/system/files/2021-07/edpb_guidelines_202102_on_vva_v2.0_adopted_en.pdf

Your essential 2026 guide to voice ai compliance in today’s digital landscape, accessed March 24, 2026, https://www.speechmatics.com/company/articles-and-news/your-essential-guide-to-voice-ai-compliance-in-todays-digital-landscape

How Does GDPR Compliance Apply to Speech Datasets? - Waywithwords.net, accessed March 24, 2026, https://waywithwords.net/resource/how-does-gdpr-apply-to-speech-datasets/

Defects in the Moral Rights Regimes of the Countries of the Middle East in - Brill, accessed March 24, 2026, https://brill.com/view/journals/alq/39/1-2/article-p45_2.xml

Preservation vs. Fabrication: An Ethical Framework of Consent, Transparency, and Integrity for Posthumous AI Art - OpenReview, accessed March 24, 2026, https://openreview.net/pdf?id=UEHmz3m7zl

Moral Rights in Copyrights: The Theoretical Establishment - ResearchGate, accessed March 24, 2026, https://www.researchgate.net/publication/399587594_Moral_Rights_in_Copyrights_The_Theoretical_Establishment

Data Protection & Privacy 2026 - South Korea - Global Practice Guides, accessed March 24, 2026, https://practiceguides.chambers.com/practice-guides/data-protection-privacy-2026/south-korea/trends-and-developments

An Overview of Korea’s AI Framework: Main Features and Challenges in - Brill, accessed March 24, 2026, https://brill.com/view/journals/kjic/13/2/article-p232_4.xml

Bill C-27 (Historical) - OpenParliament.ca, accessed March 24, 2026, https://openparliament.ca/bills/44-1/C-27/?singlepage=1

The Artificial Intelligence and Data Act (AIDA) – Companion document, accessed March 24, 2026, https://ised-isde.canada.ca/site/innovation-better-canada/en/artificial-intelligence-and-data-act-aida-companion-document

Guide to artificial intelligence regulation in Canada | Insights - Torys LLP, accessed March 24, 2026, https://www.torys.com/our-latest-thinking/publications/2023/04/guide-to-artificial-intelligence-regulation-in-canada

AI Oversight, Accountability and Protecting Human Rights: Comments on Canada’s Proposed Artificial Intelligence and Data Act - Rogers Cybersecure Catalyst, accessed March 24, 2026, https://cybersecurecatalyst.ca/wp-content/uploads/2023/05/AIDACommentary.pdf

In the Courts: Can Distinctiveness of Musical Indentity be Protected under U.S. Law? - WIPO, accessed March 24, 2026, https://www.wipo.int/en/web/wipo-magazine/articles/in-the-courts-can-distinctiveness-of-musical-indentity-be-protected-under-us-law-35817

Matthew McConaughey Registers Sensory Trademark to Address AI Deepfakes - Offit Kurman, accessed March 24, 2026, https://www.offitkurman.com/offit-kurman-blogs/matthew-mcconaughey-sensory-trademark-ai-deepfakes

Matthew McConaughey: Protecting His Voice and Likeness, accessed March 24, 2026, https://darkhorse.law/matthew-mcconaugheys-trademark-strategy-protecting-ones-name-voice-likeness/

Elvis Act vs Indian Laws-Securing Personality Rights in AI world - Umbrella Legal, accessed March 24, 2026, https://umbrella-legal.com/legal-updates/f/elvis-act-vs-indian-laws-securing-personality-rights-in-ai-world

Suspicious Minds: Protecting Tennessee Artists from Generative AI | JD Supra, accessed March 24, 2026, https://www.jdsupra.com/legalnews/suspicious-minds-protecting-tennessee-1545258/

Tennessee’s Elvis Act: Protecting the voice of an Artist | IPRMENTLAW, accessed March 24, 2026, https://iprmentlaw.com/2024/04/14/tennessees-elvis-act-protecting-the-voice-of-an-artist/

ELVIS is Alive as Tennessee is First to Implement Rights of Publicity Protections Against AI Clones, Deepfakes, and Impersonations | ArentFox Schiff, accessed March 24, 2026, https://www.afslaw.com/perspectives/alerts/elvis-alive-tennessee-first-implement-rights-publicity-protections-against-ai

Publishers Prohibit Automated Scraping: Impacts on AI Training and Content Discovery, accessed March 24, 2026, https://windowsforum.com/threads/publishers-prohibit-automated-scraping-impacts-on-ai-training-and-content-discovery.403514/

Terms And Conditions - No Worry Websites & Ai Automations, accessed March 24, 2026, https://noworrywebsites.co.uk/terms-and-conditions/

AI Twins and Avatars: Legal Risks for Companies Using Synthetic Voice and Likeness Technology, accessed March 24, 2026, https://www.traverselegal.com/blog/ai-avatar-legal-risks/

AI Laws in the US (2025): Federal & State Regulations Explained - Leanware, accessed March 24, 2026, https://www.leanware.co/insights/ai-laws-in-the-us-overview