- Pascal's Chatbot Q&As

Asking AI: If, in the future, AI models start to understand words and sentences better, do you think that will lead to MORE or LESS censorship on social media platforms than is currently the case?

GPT-4o: A likely outcome is a mix of both. Grok: It’s more likely to lead to less censorship. Claude: More capable AI could identify subtler forms of problematic content that current systems miss.

Asking AI services: Current social media moderation algorithms are rather dumb, typically triggering takedowns and blocking posts on the basis of specific words, possibly using keyword lists that contain ‘problematic’ words or sentences. If, in the future, AI models start to understand words and sentences better, do you think that will lead to MORE or LESS censorship on social media platforms than is currently the case?

ChatGPT-4o’s answer:

It depends on how the AI models are implemented and who controls their decision-making processes. Here are two possible scenarios:

Scenario 1: More Censorship

Better Contextual Understanding – If AI models improve their ability to interpret meaning, intent, and nuance, they could flag more content that violates policies, even when users try to circumvent keyword filters.

Proactive Content Suppression – AI could be trained to detect subtle forms of disinformation, hate speech, or other "problematic" content before it even gains traction, leading to preemptive takedowns.

Automated Moderation at Scale – As AI models get better, platforms may rely less on human moderators, reducing the chances for appeals or context-based leniency.

AI Detecting "Implicit Harm" – Future AI moderation could focus on things like emotional tone, potential for misinterpretation, or inferred intent, leading to stricter enforcement.

Scenario 2: Less Censorship

More Accurate Moderation – If AI truly understands context better, it may lead to fewer false positives where legitimate content is wrongly removed.

Selective Enforcement – Advanced AI could allow platforms to enforce policies in a more targeted way, reducing overbroad keyword-based censorship.

User Appeals & Explainability – If AI models can provide explanations for takedowns, they might allow users to argue against unjust moderation, leading to a more transparent system.

Hybrid Reality: More Censorship but More Subtle

A likely outcome is a mix of both. AI moderation would be more precise but also more pervasive, meaning fewer false positives but a stronger ability to filter content automatically. It could also make moderation more invisible—instead of outright takedowns, platforms may simply suppress reach or downrank content in feeds without users even realizing.

Would you prefer a system with strict rules and minimal appeals or flexible moderation with some room for interpretation?

Grok’s answer:

If AI models get smarter about understanding words and sentences—grasping context, intent, and nuance—I’d argue it could go either way, but it’s more likely to lead to less censorship overall, with some caveats.

Right now, moderation algorithms are blunt hammers. They flag posts based on keyword lists or simple pattern matching, often missing the bigger picture. Say someone posts “I’m going to kill it at the gym today”—a dumb algorithm might see “kill” and slap a warning or takedown, even though it’s clearly not a threat. Smarter AI, capable of parsing context, could recognize the idiom and let it slide. This could reduce false positives, meaning fewer innocent posts get axed. Less censorship by volume, simply because the system isn’t overreacting to every trigger word.
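The gym example can be made concrete with a small sketch. It contrasts a plain keyword match with a filter that first strips known benign idioms. The keyword list, the idiom pattern, and both function names are assumptions invented for illustration, not any platform's actual rules, and a regex allowlist is only a crude stand-in for real contextual understanding.

```python
import re

# Hypothetical keyword list; real platform lists are not public.
BLOCKED_KEYWORDS = {"kill", "attack"}

# Hypothetical idiom patterns, a crude stand-in for contextual understanding.
BENIGN_IDIOMS = [
    re.compile(r"\bkill(ed|ing)? it\b"),
]


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def keyword_flag(text):
    """Flag a post if any single token appears on the keyword list."""
    return any(token in BLOCKED_KEYWORDS for token in tokenize(text))


def context_aware_flag(text):
    """Flag only if a keyword is still present after benign idioms are removed."""
    lowered = text.lower()
    for pattern in BENIGN_IDIOMS:
        lowered = pattern.sub(" ", lowered)
    return keyword_flag(lowered)


post = "I'm going to kill it at the gym today"
print(keyword_flag(post))        # True: the bare keyword match fires
print(context_aware_flag(post))  # False: the idiom is recognized and skipped
```

A direct threat such as "I will kill you" is still flagged by both functions, which is the point Grok makes: fewer false positives without dropping the genuine cases.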

On the flip side, better understanding could also enable more targeted censorship. If AI can detect subtle dogwhistles, sarcasm, or coded hate speech that slips past current filters—like “heritage enthusiasts” secretly meaning white supremacists—platforms might clamp down harder on stuff they deem problematic. This wouldn’t necessarily increase the quantity of censorship, but it could make it more precise, catching things humans miss. Some might call that an improvement; others, a creep toward thought policing.

The real wildcard is who’s holding the reins. Smarter AI doesn’t operate in a vacuum—it’s tuned by the biases of the people and companies building it. If X or Meta or whoever prioritizes a heavy-handed “safe space” approach, they could weaponize that contextual awareness to silence more speech under vague “harm” or “misinformation” banners. But if they lean toward free expression—like X seems to be flirting with under Musk—smarter AI could actually loosen the grip, letting more edge cases through.

Data backs this tension. Current systems already over-censor: a 2021 study from the Center for Democracy & Technology found that automated moderation tools on platforms like Facebook disproportionately flag benign content, especially from marginalized groups, due to linguistic blind spots. Smarter AI could fix that. Yet, when Twitter (pre-X) rolled out more advanced models in 2019, they still amplified removals of “hateful conduct” by 20%, per their own transparency reports, because the system got better at spotting intent.

So, my bet? Less censorship in raw numbers—fewer dumb mistakes—but a shift toward more surgical, intent-based takedowns. Whether that feels freer or creepier depends on the platform’s agenda. What do you think—optimistic or dystopian on this one?

Claude’s answer:

This is an interesting question about the evolution of content moderation.

The impact of more sophisticated AI understanding on censorship could go in either direction, depending on how the technology is implemented and governed.

Arguments for LESS censorship:

Current keyword-based systems often lack context, leading to false positives that remove legitimate content. More nuanced AI could better distinguish harmful content from discussion about sensitive topics, satire, education, or reporting.

AI that truly understands language could recognize cultural variations, artistic expression, and contextual factors that current systems miss.

Better understanding might allow for more targeted interventions (like warnings or additional context) rather than binary takedown decisions.

Arguments for MORE censorship:

More capable AI could identify subtler forms of problematic content that current systems miss, potentially expanding what gets flagged.

These systems could be calibrated to enforce platform policies more consistently across all content, eliminating current enforcement gaps.

Enhanced pattern recognition could identify coded language, dog whistles, or evolving terminology used to evade current moderation.

The outcome will largely depend on:

How platforms define their moderation goals

Transparency in how these systems are designed and trained

Governance structures and oversight mechanisms

Whether users have meaningful input and appeal processes

What's your perspective on how these technologies should be deployed to balance free expression with harm reduction?

Perplexity’s answer:

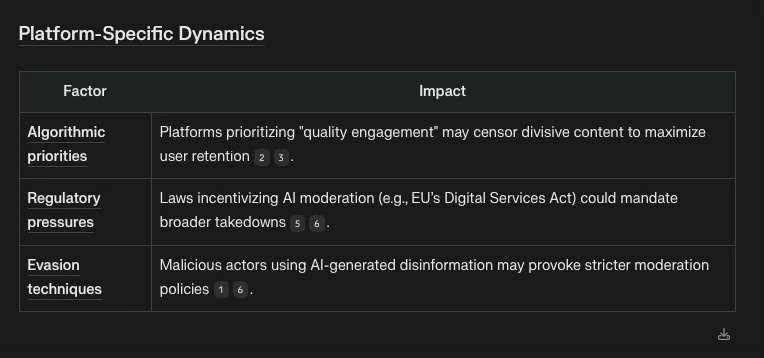

As AI models evolve to better understand context and nuance in language, the impact on social media censorship will likely be multifaceted, with both increased precision in content moderation and risks of overreach. Here's a breakdown of the key factors:

Potential for Reduced Over-Censorship

Contextual analysis could reduce reliance on blunt keyword lists, allowing AI to distinguish between harmful content and benign uses of flagged terms (e.g., educational discussions vs. hate speech).

Sentiment detection via advanced NLP might better identify sarcasm, satire, or counter-speech that current systems misclassify, preserving legitimate expression.

Adaptive learning could minimize false positives by refining moderation criteria based on cultural and linguistic nuances.

Risks of Increased Censorship

Granular detection of implicit harm, such as coded language or visual metaphors, may expand the scope of removable content beyond today’s overt violations.

Automated bias: Over-reliance on AI could amplify systemic biases in training data, disproportionately censoring marginalized voices.

State-driven suppression: Authoritarian regimes are already using AI to automate censorship of dissent, a trend likely to grow as tools become more sophisticated. For example, China’s AI chatbots avoid sensitive historical topics, while India leverages AI to restrict critical media.

Conclusion

While improved AI could reduce crude keyword-based over-censorship, the net effect may lean toward MORE censorship due to three converging trends:

Expanded definitions of harm: AI’s ability to detect subtler forms of abuse (e.g., microaggressions, deepfakes) widens the range of content that can be flagged.

Scalability: Real-time analysis of billions of posts enables platforms to enforce policies more comprehensively.

Political exploitation: Governments and platforms alike may misuse advanced AI to suppress content under vague pretexts of “safety” or “misinformation”.

The outcome ultimately hinges on transparency in AI training, accountability mechanisms, and safeguards against regulatory abuse. Without these, even context-aware systems risk becoming tools for systemic censorship.

Gemini’s answer:

The impact of more sophisticated AI on social media censorship is complex, and it's difficult to definitively say whether it will lead to more or less censorship. Here's a breakdown of the factors to consider:

Arguments for Potentially Less Censorship:

Improved Contextual Understanding:

Advanced AI systems, particularly those using natural language processing (NLP), can grasp the nuances of language, including sarcasm, irony, and satire. This could reduce false positives, where harmless content is mistakenly flagged as harmful.

AI could better discern the intent behind language, distinguishing between genuine threats and rhetorical expressions.

More Granular Moderation:

Instead of blanket bans, AI could enable more nuanced moderation, such as:

Reducing the visibility of problematic content without outright removal.

Adding context or fact-checking labels to potentially misleading posts.

Providing users with personalized moderation settings.
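The graduated options listed above could look roughly like the following sketch, which maps a classifier's harm score to one of several actions instead of a single remove-or-keep decision. The thresholds, the action names, the fixed downrank multiplier, and the `user_hide_above` setting are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical thresholds on a harm score in the range [0, 1].
LABEL_AT = 0.4
DOWNRANK_AT = 0.6
REMOVE_AT = 0.9


@dataclass
class Decision:
    action: str        # "allow", "label", "downrank", "hide_for_user", or "remove"
    visibility: float  # multiplier applied to the post's ranking in feeds


def moderate(harm_score: float, user_hide_above: float = 1.0) -> Decision:
    """Pick a graduated action for one post as seen by one viewer.

    user_hide_above is a hypothetical per-user setting: posts scoring above
    it are hidden for that viewer even if the platform would show them.
    """
    if not 0.0 <= harm_score <= 1.0:
        raise ValueError("harm_score must be between 0 and 1")
    if harm_score >= REMOVE_AT:
        return Decision("remove", 0.0)
    if harm_score > user_hide_above:
        return Decision("hide_for_user", 0.0)
    if harm_score >= DOWNRANK_AT:
        return Decision("downrank", 0.5)
    if harm_score >= LABEL_AT:
        return Decision("label", 1.0)
    return Decision("allow", 1.0)


for score in (0.1, 0.5, 0.7, 0.95):
    print(score, moderate(score).action)
```

Note that the downrank branch is exactly the kind of quiet suppression the other answers warn about: the post stays up, but its reach shrinks without the author being told.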

Arguments for Potentially More Censorship:

Increased Efficiency of Censorship:

Sophisticated AI could make it easier to identify and remove content that violates platform policies, potentially leading to more widespread censorship, even if it's more accurate.

AI could be used to detect and suppress subtle forms of dissent or political expression.

Potential for Bias and Abuse:

AI models are trained on data, and if that data reflects existing biases, the AI will perpetuate those biases in its moderation decisions. This could lead to discriminatory censorship.

Powerful AI tools could be used by governments or other actors to suppress speech they deem undesirable.
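The training-data bias concern raised in this section can be checked with a simple audit: compare how often benign posts are wrongly flagged for each group of authors. The sketch below assumes a hand-labeled sample of (group, flagged, actually harmful) records. The group names and the sample rows are made up for illustration and are not real measurements.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Return, per group, the share of benign posts that were flagged.

    records is an iterable of (group, flagged, actually_harmful) tuples.
    """
    benign = defaultdict(int)
    wrongly_flagged = defaultdict(int)
    for group, flagged, harmful in records:
        if harmful:
            continue  # only benign posts count toward false positives
        benign[group] += 1
        if flagged:
            wrongly_flagged[group] += 1
    return {group: wrongly_flagged[group] / benign[group] for group in benign}


# Made-up audit sample, for illustration only.
sample = [
    ("dialect_a", False, False),
    ("dialect_a", False, False),
    ("dialect_a", False, False),
    ("dialect_a", True, False),
    ("dialect_a", True, True),
    ("dialect_b", True, False),
    ("dialect_b", True, False),
    ("dialect_b", False, False),
    ("dialect_b", False, False),
]

rates = false_positive_rates(sample)
print(rates)  # {'dialect_a': 0.25, 'dialect_b': 0.5}
```

A persistent gap between groups, like the one in this toy sample, would be one sign of the discriminatory moderation described above.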

The "Moving Goalposts" of Policy:

Even with perfect contextual understanding, the definition of what is "acceptable" on social media is constantly changing and varies widely between platforms. So even if the AI gets better at understanding language, the rules it enforces are not static.

Increased Automation:

As AI moderation increases, human moderation decreases. This could make the moderation process less transparent and leave users less able to appeal decisions.

In Conclusion:

It's likely that advanced AI will change the nature of social media censorship, rather than simply increasing or decreasing it. The key factors will be:

The ethical guidelines that are developed for AI moderation.

The transparency of AI moderation processes.

The ability of users to appeal moderation decisions.

It is important to remember that AI is a tool, and its impact will depend on how it is used.