Pascal's Chatbot Q&As

And once militaries and security services run on the cloud—once policing runs on platform data, sensors, and “AI”—the cloud, the platform, and the supply chain stop being neutral.

They become strategic terrain. In wartime, strategic terrain becomes a target.

From “Move Fast” to “High-Value Target”: Silicon Valley’s Long March Into the Battlespace

by ChatGPT-5.2

For much of the late 20th and early 21st century, Silicon Valley sold itself as an escape hatch from geopolitics: an innovation engine that would connect the world, democratize publishing, and route around censorship. The pitch was quasi-utopian and largely commercial: build tools for sharing information, then monetize attention, convenience, and global scale. The reality that followed is more structural and more ironic: the same platforms and infrastructures that became the nervous system of everyday life also became the nervous system of state power. And once militaries and security services run on the cloud—once policing runs on platform data, sensors, and “AI”—the cloud, the platform, and the supply chain stop being neutral. They become strategic terrain. In wartime, strategic terrain becomes a target.

This is not merely a story about “tech doing defense work.” It is about how the design choices of the information age—centralization, surveillance-by-default incentives, hyperscale infrastructure, and political influence—turn private companies into quasi-sovereign actors. They build and operate infrastructure across borders; they decide who gets access to communications; they broker data; they shape election narratives; they supply hardware and software that can tilt battlefield outcomes. That constellation looks, to adversaries, like a distributed weapons system. To domestic publics under intensifying surveillance, it can look like a privatized policing apparatus. In both directions, the same conclusion emerges: Silicon Valley is no longer “adjacent” to conflict. It is inside it.

1) The original bargain: global information tools built for scale, not sovereignty

Early internet-era tools were engineered around openness and rapid growth: protocols that assumed benign interconnection; platforms optimized for virality and network effects; ad-tech that rewarded data extraction and behavioral prediction. The West, especially the United States, experienced this as prosperity and soft power. “Connecting the world” aligned with globalization, liberalization, and American cultural dominance. The hidden bargain was that private firms could build the infrastructure of speech and coordination, and states would largely tolerate it because it amplified national advantage.

Three things changed:

The internet stopped being a separate “cyberspace.” It became the control layer for logistics, finance, energy, healthcare, and government services, so outages are no longer “tech problems”; they are social paralysis.

Platform economics converged with surveillance economics. The most profitable information products became the ones that instrumented human behavior, not merely enabled communication. Data exhaust became a commodity; algorithmic influence became a product.

The state learned to ride the platform. Intelligence, law enforcement, and militaries discovered that commercial systems (cloud, satellites, social media, consumer sensors) were cheaper, faster, and—crucially—already deployed at scale.

2) The pivot: from consumer internet to “dual-use empire infrastructure”

Once governments became major customers, “platform” quietly expanded into critical infrastructure. Hyperscalers began building data centers as geopolitical assets—placed for latency, regulation, subsidies, and alliance politics. Cloud regions became quasi-territorial: subject to local law, integrated with national security demands, and increasingly designed for “sovereign” or classified workloads.

That shift is visible in how defense contracting moved from bespoke systems to commercial cloud procurement. The US Department of Defense’s Joint Warfighting Cloud Capability (JWCC) explicitly frames commercial cloud as something that must work “at all classification levels” and “to the tactical edge”—a fancy way of saying: the battlefield runs on vendor infrastructure. Once that happens, data centers and cloud services become part of the war machine, even when they also host ordinary civilian life.

At the same time, the AI boom tightened the knot between compute and power. AI isn’t only software; it is energy, chips, cooling, and global supply chains. When a single AI training cluster can represent billions of dollars of hardware and years of strategic advantage, it starts to resemble an oil refinery, a munitions plant, or a satellite network: a concentrated lever in national competition.

Recent reporting illustrates how explicit this has become:

Private-sector data centers have already been framed as legitimate wartime targets when they are believed to support military and intelligence activities, and attacks have been described as disrupting power delivery and causing outages. The legal and practical complexity is precisely that many facilities are “dual use”—civilian and military workloads commingled under one roof.

Residents of at least one small township now fear that building advanced compute tied to nuclear weapons research paints a “bulls eye” on their town, because supercomputers and AI capacity are treated as “power” in the strategic sense.

The chip race is pulling hyperscalers into vertical integration (custom silicon, specialized servers, dedicated AI regions), making chip labs and supply chains part of national capability.

Meanwhile, the frontier narrative is drifting toward “compute at scale anywhere” (even “space-based data centers”), which widens the surface area of conflict from terrestrial sites to orbital and ground-station infrastructure.

3) The political layer: funding, influence, and alignment as a targeting accelerant

Once tech firms fund politicians, shape regulatory agendas, and embed themselves in defense and intelligence programs, they stop being “neutral vendors.” They become perceived as participants in strategy.

That has two targeting effects:

Adversaries treat private infrastructure as state-adjacent—especially when it supports surveillance, targeting, logistics, intelligence fusion, or propaganda operations.

Domestic opponents treat the same firms as a pillar of coercive governance, particularly where platforms facilitate policing, monitoring, and crowd control.

In other words: Silicon Valley’s political footprint doesn’t just affect regulation. It affects how actors assign responsibility—and therefore how they assign retaliation.

4) Why tech becomes a strategic target in wartime: additional reasons beyond the obvious

The direct drivers are familiar: political funding, data center buildout, and war support via hardware and software. Beyond them, several additional mechanisms in the logic of targeting often get missed:

A) Strategic “chokepoints” and irreversible advantage

Compute chokepoints: If AI capability is a decisive advantage, then large training/inference clusters are worth attacking because they are slow to replace (chips, power, cooling, construction timelines).

Semiconductor chokepoints: fabs, advanced packaging, photolithography tool supply chains—disruption can set back capability for years.

B) Command, control, communications, intelligence (C3I) dependence

Modern militaries lean on commercial:

cloud analytics,

satellite communications,

mapping,

identity/auth,

edge devices,

and AI-enabled targeting/ISR workflows.

If these systems are degraded, the effect can be comparable to striking a communications hub or logistics depot.

C) Coercive leverage over civilians (pressure through pain)

Attacking dual-use infrastructure can impose social costs:

banking outages,

hospital disruptions,

public-sector service failures,

communications blackouts.

Even when civilian harm is unlawful, coercive actors may calculate that mass inconvenience or fear is the point.

D) “Narrative terrain”: information dominance as a battlefield objective

Platforms are not only infrastructure; they are:

rumor engines,

mobilization tools,

propaganda channels,

and legitimacy brokers.

Disabling or compromising them can disrupt morale, coordination, recruitment, and political cohesion.

E) Data as hostage and weapon

Stealing or destroying data can:

cripple operations (integrity loss),

expose intelligence sources/methods,

enable blackmail,

or trigger internal purges and mistrust.

Sometimes the goal isn’t to “destroy servers” but to destroy confidence.

F) Legal ambiguity creates operational temptation

If dual-use classification is murky and attribution is hard, attackers may assume they can act with plausible deniability or exploit permissive interpretations of military necessity.

G) Supply-chain interdiction as a substitute for direct strikes

Not every actor can hit hardened facilities. Many can:

sabotage logistics,

compromise firmware,

disrupt upstream dependencies (cooling parts, transformers, specialized networking gear),

or target vendors and contractors.

H) Retaliation signaling and escalation management

Attacks on “enemy technology infrastructure” can be used as:

symbolic punishment,

controlled escalation,

or a warning shot short of striking government facilities.

I) Alliance politics and extraterritorial entanglement

When a company operates in dozens of countries, it becomes a site where conflicts collide:

host nations may be coerced,

local populations may resist,

adversaries may target “proxy” infrastructure in third countries.

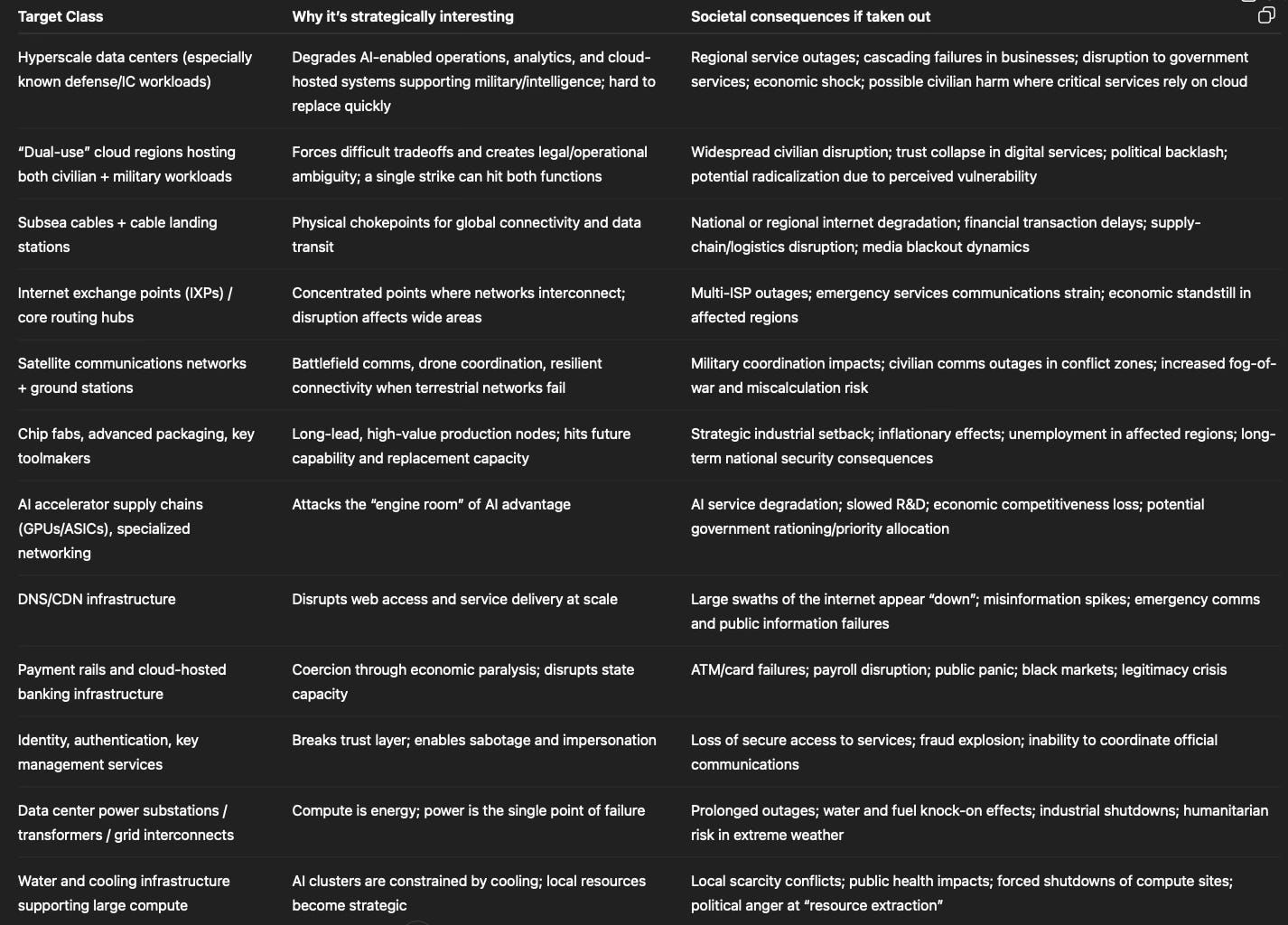

5) Target matrix: what gets targeted, why it matters, and what happens if it goes down

Below is a non-exhaustive matrix of target classes (not “how to attack them”), showing why each attracts attention and the societal consequences if it is degraded.

This matrix captures the quiet revolution: war planners don’t have to target “the internet” in the abstract. They can target the few physical and organizational chokepoints where “the internet” becomes concrete.

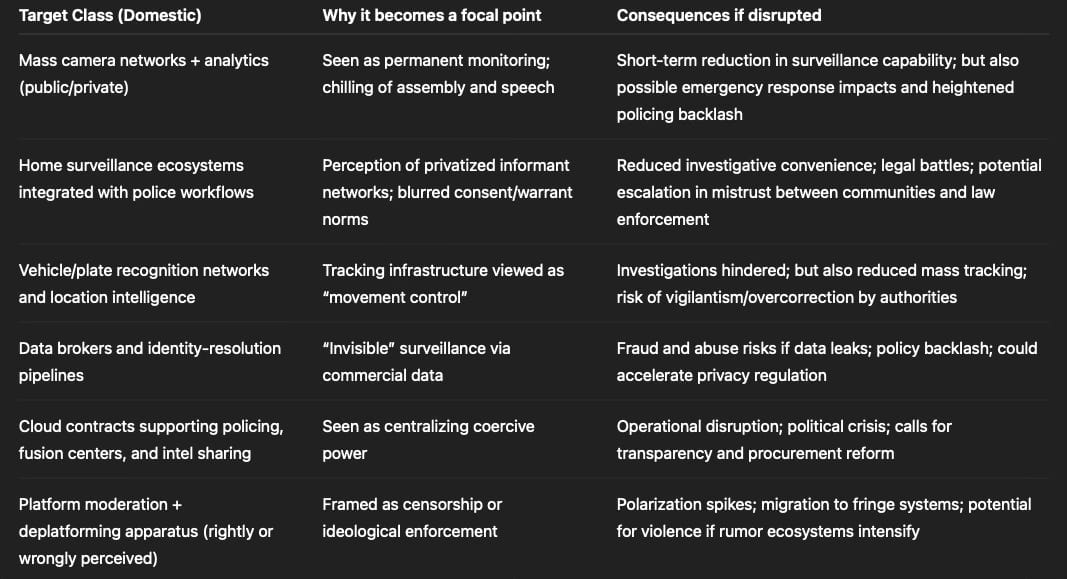

6) Domestic surveillance and policing: why Silicon Valley becomes a target from within

The internal front deserves emphasis: resistance to domestic surveillance, policing, and techno-authoritarian drift. It matters because it creates a second axis of targeting logic, rooted not in interstate war but in a legitimacy conflict between state-aligned tech and portions of the governed.

The mechanism looks like this:

Platforms and vendors provide tools that expand monitoring capacity (cameras, sensors, analytics, identity resolution, real-time data sharing).

Police and security agencies integrate them into routine operations—often with weak oversight, secrecy, and mission creep.

Communities experience this not as “public safety” but as asymmetric power: selective enforcement, chilling effects, and political control.

Once the tools are seen as coercive infrastructure, they become focal points for protest, sabotage attempts, and political radicalization.

Potential domestic “targets of resistance” (by class): why they draw opposition, and the consequences if disrupted

A crucial point: takedowns—whether external wartime strikes or domestic sabotage—rarely produce clean “liberation.” They often produce chaos first, and chaos is a political resource. It can justify emergency powers, expanded surveillance, and deeper militarization of domestic policing. So the societal risk is not only “services go down,” but that outages become the pretext for harder control.

7) The systemic consequences of losing critical digital infrastructure

When critical digital infrastructure is degraded—whether by kinetic strike, cyber operation, insider compromise, or cascading utility failures—the consequences are rarely confined to “tech.” They manifest as:

Coordination collapse: governments cannot message reliably; rumors fill the void.

Economic seizure: payments, supply chains, and basic commerce stall.

Healthcare brittleness: scheduling, records access, and medical logistics break down.

Critical infrastructure cascade: energy, water, transport, and telecom failures amplify each other.

Legitimacy shock: populations lose trust in institutions’ competence and in the promise of modernity.

Security escalation: outages become a justification for extraordinary measures—surveillance, curfews, censorship, conscription of private infrastructure.

Geopolitical fragmentation: countries accelerate “sovereign stack” policies, demanding local control, local clouds, local chips—splintering the global internet into blocs.

In short: the destruction of “digital infrastructure” is not just disruption. It is a forced redesign of society under stress.

Conclusion: Silicon Valley’s new problem is not “PR”—it’s war logic

Silicon Valley did not wake up one day and choose to become a military target. It followed its incentives: scale, integration, monopoly rents, and political access. Governments followed theirs: outsource capability to the private sector, buy speed, buy innovation, buy AI advantage. The result is a historic commingling: civilian life and military power running through the same buildings, networks, chips, and platforms.

Once that commingling happens, “neutrality” becomes difficult to claim and even harder to maintain. Adversaries will treat cloud and platform infrastructure as part of the war effort; domestic opponents will treat surveillance-linked tech as part of coercive governance. Either way, the Valley becomes a battlefield actor—whether it wants that role or not.

The strategic question for societies is therefore blunt: Do we want the infrastructure of public life to be a set of privately controlled, globally entangled, politically active systems that can be targeted like military assets? If the answer is no, the remedy is not a slogan about ethics. It is a structural redesign: separation of civilian and military workloads, hard rules on surveillance, meaningful transparency, resilient decentralization, and procurement discipline that treats cloud and AI as critical national infrastructure—not merely convenient services.

Sources:

Data Centers Are Military Targets Now (The Intercept)

Tiny Township Fears Iran Drone Strikes Because of New Nuclear Weapons Datacenter (404 Media)

An exclusive tour of Amazon’s Trainium lab, the chip that’s won over Anthropic, OpenAI, even Apple (TechCrunch)

Musk says he’s building a Terafab chip plant in Austin, Texas (The Verge)

Department of Defense Announces Joint Warfighting Cloud Capability (JWCC) Procurement (U.S. Department of Defense)

Microsoft and the Joint Warfighting Cloud Capability (JWCC) (Microsoft Learn)

DoD awards $9bn JWCC to AWS, Google, Microsoft, and Oracle (Data Center Dynamics)

Revealed: Israel demanded Google and Amazon use secret “wink”… (The Guardian; Project Nimbus reporting)

Inside Israel’s deal with Google and Amazon (+972 Magazine; Project Nimbus reporting)

Amazon Ring… mass surveillance and policing access (Electronic Frontier Foundation)

Flock partnership / sharing video with law enforcement (ACLU; Ring context)

Musk Throttles Russia’s Battlefield Communications (CEPA; Starlink constraints)

Starlink in the Russo-Ukrainian war (overview)