- Pascal's Chatbot Q&As


AI & Memorization: separating what is factually true, what is still an evidentiary fight, and what is legally dangerous for AI developers even under “traditional” jurisprudence.

AI developers tried to win a normative fight (fair use, innovation, transformativeness) by denying a technical reality (recoverability). The denial is collapsing under measurement.

The Memorization Mirage: What LLMs Really Keep, What the Law Really Cares About, and Where the Next Court Shock Will Land

by ChatGPT-5.2

The public argument about “memorization” has been a mess because it splices together three different questions and then pretends they are one:

A technical question: do model weights retain recoverable expressive text from training data, and under what conditions can it be elicited?

A product-security question: do alignment layers and guardrails reliably prevent verbatim recall in real-world deployments and downstream fine-tuning?

A legal question: even if a model can regurgitate, what does that mean for copying, fair use, territoriality, market harm, and liability theories?

The following three perspectives each grab a different piece of that knot.

Matthew Sag is trying to stop people from winning the debate by metaphor—especially the “LLMs are just MP3s of books” move. His point is not “memorization doesn’t matter,” but rather “compression is not a conclusion.” You still have to do the empirical work: how much, how recoverable, how distributed across works, how likely in production, and how tied to market substitution.

Ed Newton-Rex is trying to stop AI companies (and, frankly, some courts) from taking comfort in the absence of regurgitation in the default UI and calling that “proof of no memorization.” He highlights research that suggests fine-tuning can “wake up” verbatim recall—turning what looks safe at the surface into something extractable.

Edward Lee (LinkedIn) uses Sag as a springboard for a much stronger legal claim: memorization is not infringement and (as he puts it) Judge Alsup has already treated training as “spectacularly transformative” even if memorization of all works occurred. This is rhetorically powerful, but it’s also where the most slippage happens—because it conflates what a court praised (transformative purpose of training) with what courts have not settled (what the model contains, what counts as a legally cognizable “copy,” and what happens when recall is technically feasible and commercially weaponizable).

What follows tries to get to the bottom of the truth: separating what is factually true, what is still an evidentiary fight, and what is legally dangerous for AI developers even under “traditional” jurisprudence.

1) The factual and objective truths (the parts that are no longer serious to deny)

Truth A: Training necessarily makes copies at least temporarily.

Whatever philosophical story you tell (“learning,” “abstraction,” “statistics”), training pipelines ingest and reproduce text in machine-readable form, tokenize it, batch it, and process it. That is copying as a matter of engineering reality. Whether it is legally excused is a fair use / exception question, not a “did copying occur” question.
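To make the engineering point concrete, here is a minimal sketch of the ingest-tokenize-batch step. It is a toy, not any developer's actual pipeline: the byte-level tokenizer, the `seq_len` value, and the function names are all assumptions chosen for illustration (production systems use subword vocabularies and far larger batches). What it shows is that the batched token IDs are a lossless, machine-readable reproduction of the input text.

```python
from typing import List


def tokenize(text: str) -> List[int]:
    """Toy byte-level tokenizer: one token ID per UTF-8 byte (illustrative assumption)."""
    return list(text.encode("utf-8"))


def make_batches(tokens: List[int], seq_len: int) -> List[List[int]]:
    """Split the token stream into fixed-length training sequences."""
    return [tokens[i:i + seq_len] for i in range(0, len(tokens), seq_len)]


def detokenize(batches: List[List[int]]) -> str:
    """Flatten the batches and decode them back into text."""
    flat = [token for batch in batches for token in batch]
    return bytes(flat).decode("utf-8")


# Public-domain sample text (Dickens), used only as a stand-in for a training document.
sample = "It was the best of times, it was the worst of times."
batches = make_batches(tokenize(sample), seq_len=16)
restored = detokenize(batches)

# The batched representation round-trips to the original text exactly.
print(restored == sample)
```

Nothing in this sketch says anything about what the trained weights retain; it only illustrates why the "did copying occur during training" question has an easy factual answer.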

Truth B: Some frontier models can output verbatim spans from copyrighted books, and “guardrails” can hide this rather than eliminate it.

This is not hypothetical anymore. Multiple lines of research (including the Stanford/Yale extraction work and the newer “Alignment Whack-a-Mole” work) show that production models can be induced to reproduce long spans of text, and that safety layers can reduce visible regurgitation without proving the underlying representation lacks recoverable text.
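One simple way to quantify "verbatim spans" is to find the longest run of consecutive words that a model output shares with a reference text. The sketch below uses Python's standard-library `difflib.SequenceMatcher` for that. It is not the method used in the research mentioned above; the function name, the whitespace tokenization, and the example output string are assumptions for illustration only.

```python
from difflib import SequenceMatcher
from typing import List


def longest_verbatim_span(output: str, reference: str) -> List[str]:
    """Return the longest run of consecutive words shared by output and reference."""
    out_words = output.split()
    ref_words = reference.split()
    # autojunk=False disables the heuristic that ignores very frequent tokens.
    matcher = SequenceMatcher(None, out_words, ref_words, autojunk=False)
    match = matcher.find_longest_match(0, len(out_words), 0, len(ref_words))
    return out_words[match.a:match.a + match.size]


# Public-domain reference text (Austen) and a hypothetical model output.
reference = (
    "It is a truth universally acknowledged, that a single man in possession "
    "of a good fortune, must be in want of a wife."
)
output = (
    "As Austen wrote, a single man in possession of a good fortune, "
    "must be in need of company."
)

span = longest_verbatim_span(output, reference)
print(len(span), "words:", " ".join(span))
```

A real audit would need punctuation and case normalization, a much larger reference corpus, and a defensible threshold for what counts as a "long" span. Those are exactly the choices that become contested in evidence.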

Truth C: Fine-tuning is a predictable “memories come back” pathway.

The key empirical claim highlighted by Newton-Rex—fine-tuning can activate verbatim recall at substantially higher rates—isn’t merely a scandalous anecdote; it’s exactly the kind of downstream capability chain courts tend to take seriously once it’s framed as foreseeable product behavior rather than “edge-case jailbreak theater.”

Truth D: Memorization is uneven and cannot be predicted reliably work-by-work in advance.

Sag is right that “memorization” is not uniform like JPEG artifacts. It varies with duplication, training dynamics, and the structure of the data. That’s a technical reality—and it matters legally because it pushes disputes away from sweeping narratives (“it memorizes everything” / “it memorizes nothing”) toward sampling, measurement, and risk profiling.

These four truths jointly kill the most common lobbying talking point: “the model doesn’t contain copies, so nothing to see here.” The best remaining version of the AI developer position is narrower: “the model is not meaningfully reconstructive in normal use, and what recall exists is limited, mitigated, and not market-substituting.” But that’s not an “it doesn’t memorize” argument. It’s an argument about degree, likelihood, and harm.

2) Where I, ChatGPT, agree and disagree with each voice

Where Sag is right

I agree with Sag that “compression” is a rhetorical shortcut, not a dispositive technical or legal category. Calling training “compression” invites lay analogies (MP3/ZIP/JPEG) that smuggle in the conclusion “reconstructable copy” without proving reconstructability, scale, or production likelihood. That said, Sag’s most legally incisive point is different: what matters is not memorization in the abstract but memorization that “finds its way into production.” In other words, courts will care less about metaphysics (“is it a copy?”) and more about how the system behaves when deployed, tuned, or used commercially.

But Sag’s framing can be weaponized if people treat “uneven and incidental” as “therefore not a serious risk.” Unevenness doesn’t reduce legal risk, and sometimes it increases it, because it means developers cannot pre-clear their exposure, cannot reliably assure non-reconstruction, and cannot credibly promise “no copying occurs in jurisdiction X.”

Where Newton-Rex is right

Newton-Rex is right that “absence of regurgitation in the default product” is not evidence of “no memorization.” It can be evidence of filtering. And if fine-tuning can unlock latent recall, that matters because it’s exactly the kind of foreseeable downstream use that plaintiffs will frame as negligent design, inadequate safeguards, or enablement of contributory infringement.

Where Newton-Rex can overreach is in treating “fine-tuning unlocks recall” as automatically collapsing the entire fair use analysis. That’s not how courts usually think. Courts tend to ask: what is the defendant doing, for what purpose, with what market effect, and with what safeguards? Evidence of recall strengthens plaintiffs on market harm and on “substitution” narratives, but it does not mechanically negate transformativeness.

Where Edward Lee’s LinkedIn take is useful

Lee is directionally correct on one narrow statement: memorization, as a technical phenomenon, is not automatically the same as infringement. Infringement is a legal conclusion that depends on copying of protectable expression, fixation, distribution/display, and defenses/exceptions.

But Lee’s add-on—“Alsup already ruled … even if memorization of ALL training works occurred”—is exactly the kind of claim that will get stress-tested brutally because it risks turning a context-specific fair use ruling into a universal safe harbor. The Alsup framing widely discussed after Bartz v. Anthropic is indeed that training was “spectacularly transformative” (factor one), while also leaving serious exposure around pirated sourcing and other issues. That is not the same as a judicial blessing of “memorization of all works” as a harmless fact pattern. Courts are rarely that absolutist, and plaintiffs will argue (credibly) that the new empirical record (extraction + fine-tuning activation) changes the premises on which “no market substitution” and “guardrails are adequate” were previously argued.

So: I agree with Lee’s core caution that “memorization ≠ per se infringement,” but I disagree with the implied leap that memorization concerns are therefore legally defanged.

3) The questions that must be tested in court (because they’re not clean-cut yet)

These are the issues where litigation is not merely fact-finding—it’s jurisprudence-making.

Issue 1: Are model weights a “copy” of a work in the copyright sense?

This is the heart of the “compression” fight, and it’s not settled. A plaintiff-friendly theory says: if weights store recoverable expressive sequences, they are functionally a fixed embodiment of the work (even if distributed, transformed, and non-human-readable). A defense-friendly theory says: weights are parameters encoding statistical relationships, not a “material object” in which the work is fixed in a perceptible form; any resemblance is emergent and not a stored copy.

Courts will likely be forced to pick standards like: recoverability threshold, substantial similarity metrics for latent representations, and whether “capable of being perceived with the aid of a machine” applies when the “machine” is essentially the model itself plus prompting.

Issue 2: How “production-like” must extraction be to count?

Sag’s “what gets into production” line becomes a battlefield. Plaintiffs will argue that fine-tuning is now normal, commercially marketed behavior (enterprise customization, author-style tools, domain adaptation), so extraction after fine-tuning is not an academic trick—it’s the foreseeable product lifecycle. Defendants will argue that malicious fine-tuning is user-driven misuse, not the developer’s act.

Expect courts to ask: what is reasonably foreseeable, what is marketed, what controls exist, and who bears responsibility when customization predictably reactivates memorized text.

Issue 3: Fair use factor four under a modern substitution theory.

US fair use often turns on market harm. If models can output long spans, and if fine-tuning can unlock high-fidelity recall, plaintiffs will frame this as a direct substitute for books (and for licensed corpora), not just “inspiration.” Defendants will counter: most outputs are non-verbatim, most use is not substitutive, and licensing markets shouldn’t be conjured into existence by litigants.

This is where the empirical record will matter: rates of extractability, under realistic prompts, across catalogs, in deployed contexts. The “truth” is not philosophical; it’s measurable.
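As a sketch of what "measurable" could look like, an extraction rate can be reported with a confidence interval rather than as a bare percentage. The function below computes a Wilson score interval for a proportion. The trial counts are hypothetical, and the choice of the Wilson interval at a 95% level is an assumption for illustration, not a standard any court or study cited here has adopted.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% coverage)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical audit: 100 prompts, zero observed verbatim extractions.
low, high = wilson_interval(successes=0, trials=100)
print(f"observed rate 0.0%, plausible range {low:.1%} to {high:.1%}")

# Hypothetical audit after fine-tuning: 50 extractions in 100 prompts.
low_ft, high_ft = wilson_interval(successes=50, trials=100)
print(f"observed rate 50.0%, plausible range {low_ft:.1%} to {high_ft:.1%}")
```

The first case illustrates why a clean sample is weak evidence of "no memorization": zero hits in 100 prompts is still consistent with a true rate of a few percent under this method. The interval also depends on how the prompts were chosen, which is where the "realistic prompts" dispute will play out.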

Issue 4: Territoriality outside the US (UK/EU exposure).

Newton-Rex flags something strategically important: if a model distributed in a jurisdiction arguably contains copies, plaintiffs may be able to plead local acts of infringement more easily. Whether that theory succeeds depends on whether courts accept “model presence = copy present” logic and how they treat cross-border training.

4) The issues that are relatively clear-cut—and dangerous for AI developers if courts apply existing law in a non-novel way

These are the areas where AI defendants are most exposed even without courts inventing new doctrine.

Clear-cut Danger A: Proven pirated sourcing (or willful blindness to provenance).

Even in a world where some training can be fair use, piracy is the poison pill—legally and rhetorically. Courts and juries distinguish “lawfully acquired inputs used for a new purpose” from “industrial-scale infringement used to compete against the rightsholder.” If plaintiffs can show Books3/LibGen-type pipelines and inadequate provenance controls, that can defeat equitable instincts, amplify statutory damages narratives, and undermine credibility across the entire defense.

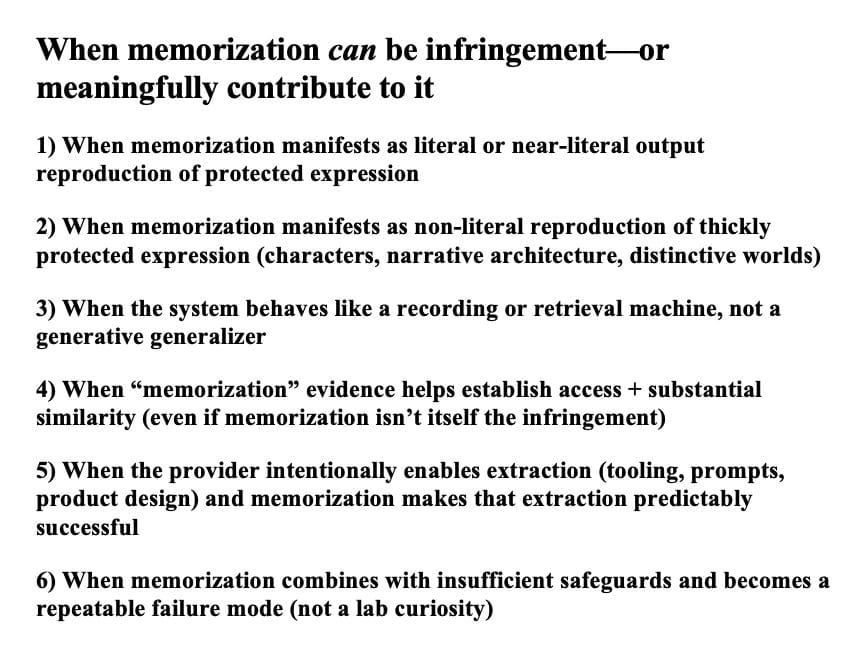

Clear-cut Danger B: Verbatim output at meaningful scale in ordinary or marketed workflows.

If a commercial system outputs long verbatim spans under reasonably ordinary use, that looks like classic infringement behavior (reproduction and distribution/display), regardless of what you think about training. Developers have tried to firewall “training legality” from “output infringement.” Plaintiffs will try to collapse the firewall with: “your training created a system that reproduces the work on demand.”

Clear-cut Danger C: Overconfident public claims (“no copies in the model”).

Once researchers show recoverable verbatim spans and fine-tuning activation, categorical statements become liabilities. Even if they were once defensible in a narrow sense, they read badly to judges: either misleading, or careless, or strategically evasive. That matters because fair use is equitable, and credibility is a hidden fifth factor.

Clear-cut Danger D: Security framing (foreseeable misuse) in the age of agentic customization.

Fine-tuning activation doesn’t just implicate copyright. It invites product-liability and negligence-style narratives: you shipped a system that predictably reanimates copyrighted text when tuned for a common commercial use (writing assistance), and you failed to implement adequate controls, monitoring, and auditability. Even if copyright doctrine stays conservative, these adjacent frames can bite.

5) So what’s the “bottom line” truth?

Here is the simplest accurate synthesis that respects all three opinions without inheriting their overclaims:

Sag is right that “compression” is not a conclusion and that copyright relevance turns on the nature and deployment of recall, not on slogans.

Newton-Rex is right that “guardrails hide memorization” is a live reality, and that fine-tuning can reveal latent verbatim recall in ways that matter for both market harm and jurisdictional strategy.

Lee is half-right that memorization alone is not automatically infringement, but the move from that premise to “therefore courts have already blessed this even if everything is memorized” is not a stable resting place—especially as the empirical record shifts from “occasional snippets under contrived prompts” to “systematic extractability under commercially normal customization patterns.”

The deeper truth—the one that keeps emerging in our memorization discussions—is that AI developers tried to win a normative fight (fair use, innovation, transformativeness) by denying a technical reality (recoverability). The denial is collapsing under measurement. What remains is a narrower, more defensible argument: yes, some memorization exists, but it is limited, mitigated, not market-substituting, and not the purpose of the system. Whether courts accept that depends on facts that are now testable—and plaintiffs are finally arriving with tests.

And that is why this debate is turning from ideology into forensics.
